Organic traffic drops, the most likely causes according to Google

It is a situation any of us could unfortunately face: one (bad) morning we open Search Console and find that traffic from Google Search has decreased. And no matter how hard we try, reason and intervene, our site keeps gradually losing organic traffic. What is behind this decline, whether sudden or slow? Google comes to our aid with a series of tips for analyzing why we may be losing organic traffic: five causes that are considered the most frequent, and a guide (including graphs) that shows what such declines look like and helps us analyze negative fluctuations in organic traffic so that we can respond and lift our site back up.

Sites in decline, causes and tips from Google

The issue was addressed in a lengthy, regularly updated article by Daniel Waisberg on Google’s blog (the latest version is dated Sept. 26, 2023), which explains analytically what negative traffic fluctuations look like and what they mean for a site’s volume.

In a nutshell, a drop in organic search traffic “can occur for several reasons and most of them can be reversed,” writes the Search Advocate in the blog post, noting that “it may not be easy to understand what exactly happened to your site”.

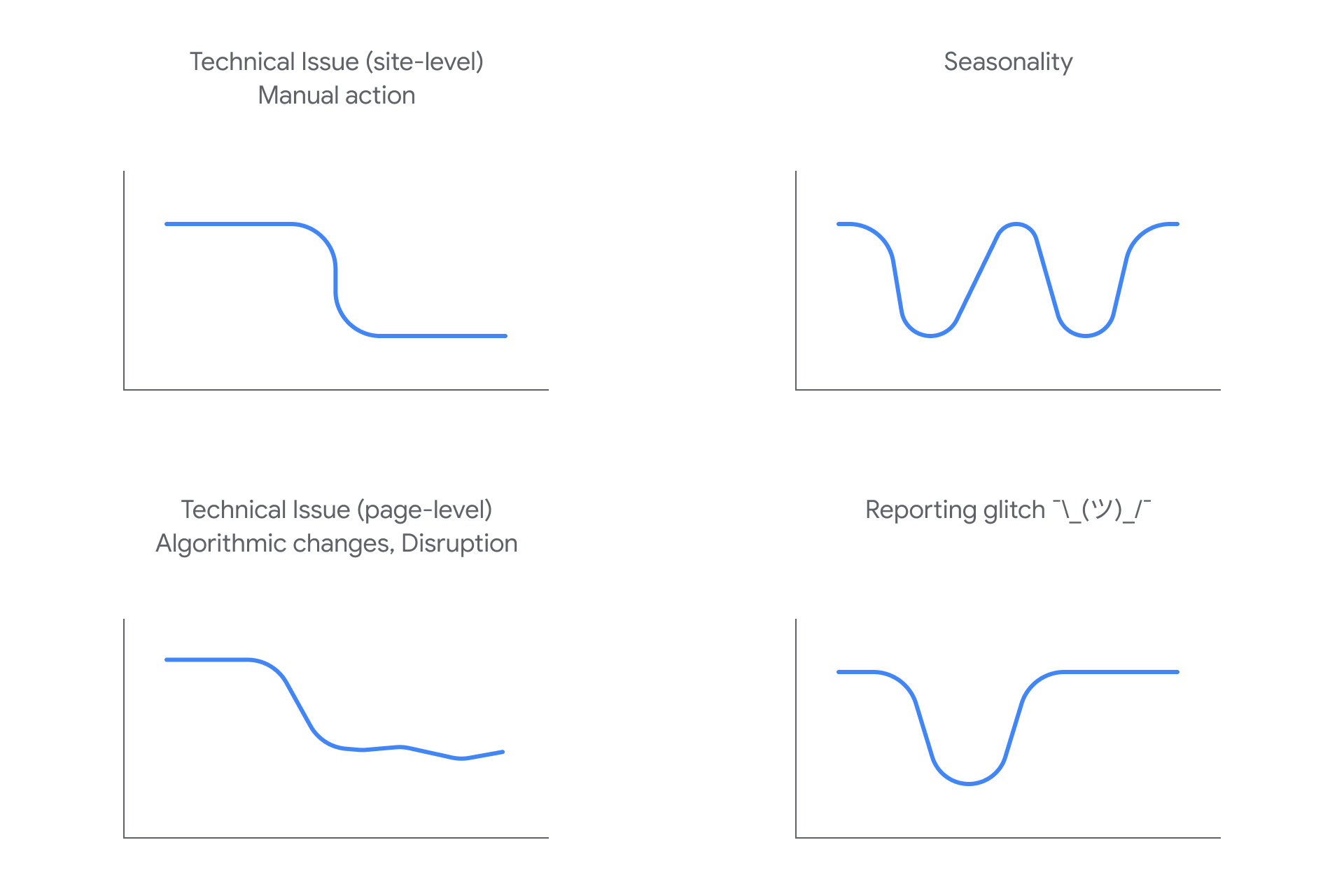

To help us understand what is affecting our site’s traffic, the Googler has sketched some examples of drops and what they can mean for our traffic.

The shape of graphs for a site that loses traffic

For the first time, then, Google shows what the various types of organic traffic drops really look like, using screenshots of Google Search Console performance reports and adding a number of tips to help us deal with such situations. The visual difference between the graphs is worth noting: it is a sign that each type of problem generates characteristic, distinctive oscillations that we can recognize at a glance.

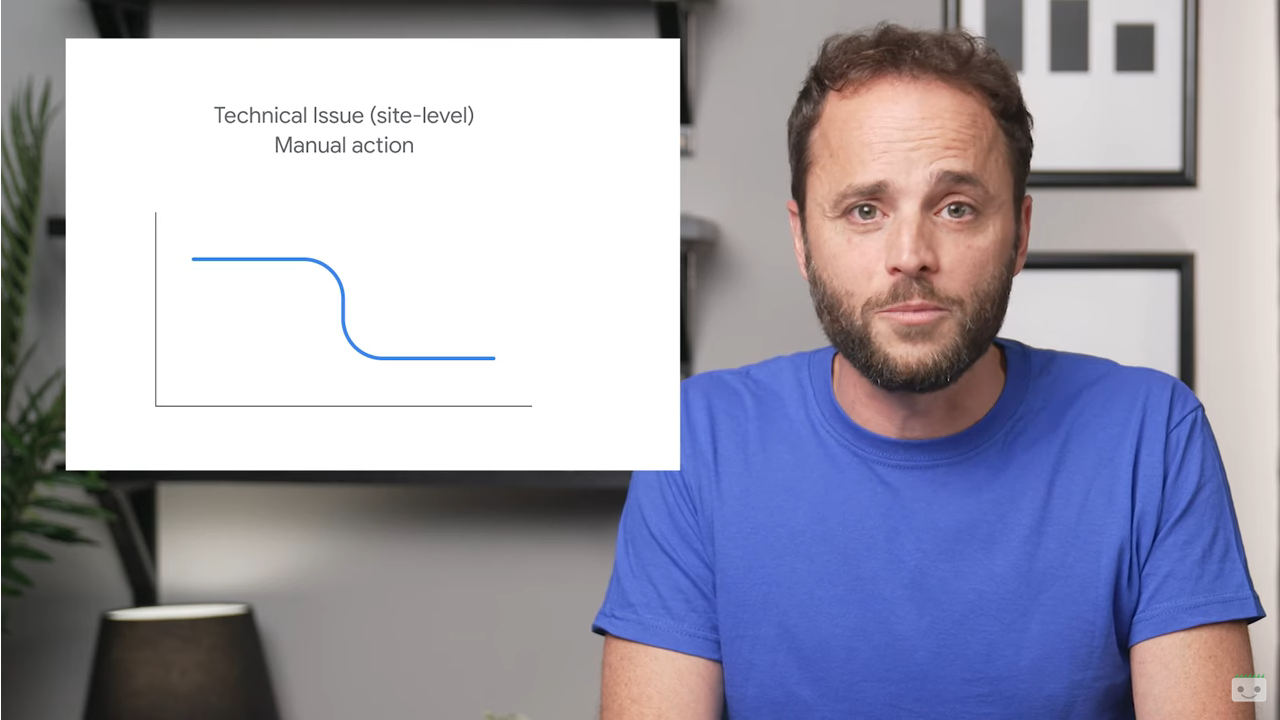

In particular, site-level technical problems and manual actions generally cause a huge and sudden drop in organic traffic (top left in the picture).

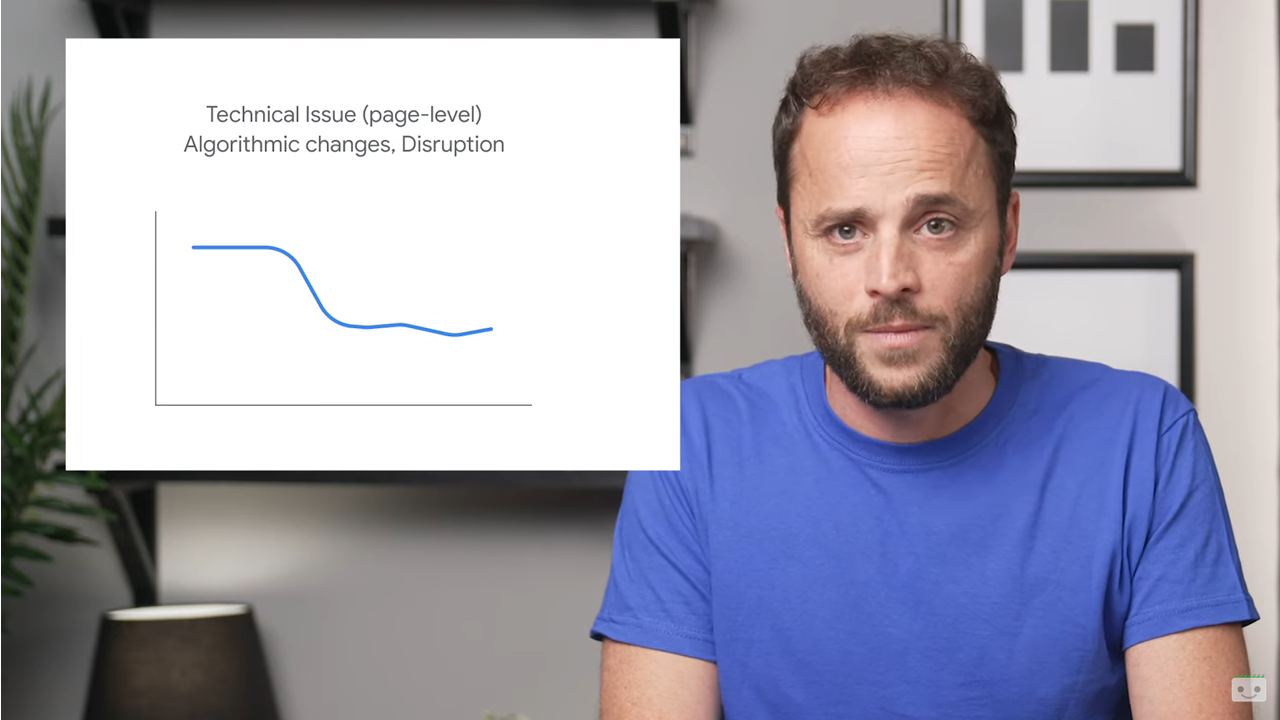

Page-level technical issues, an algorithm change such as a core update, or a change in search intent cause a slower drop in traffic, which tends to stabilize over time.

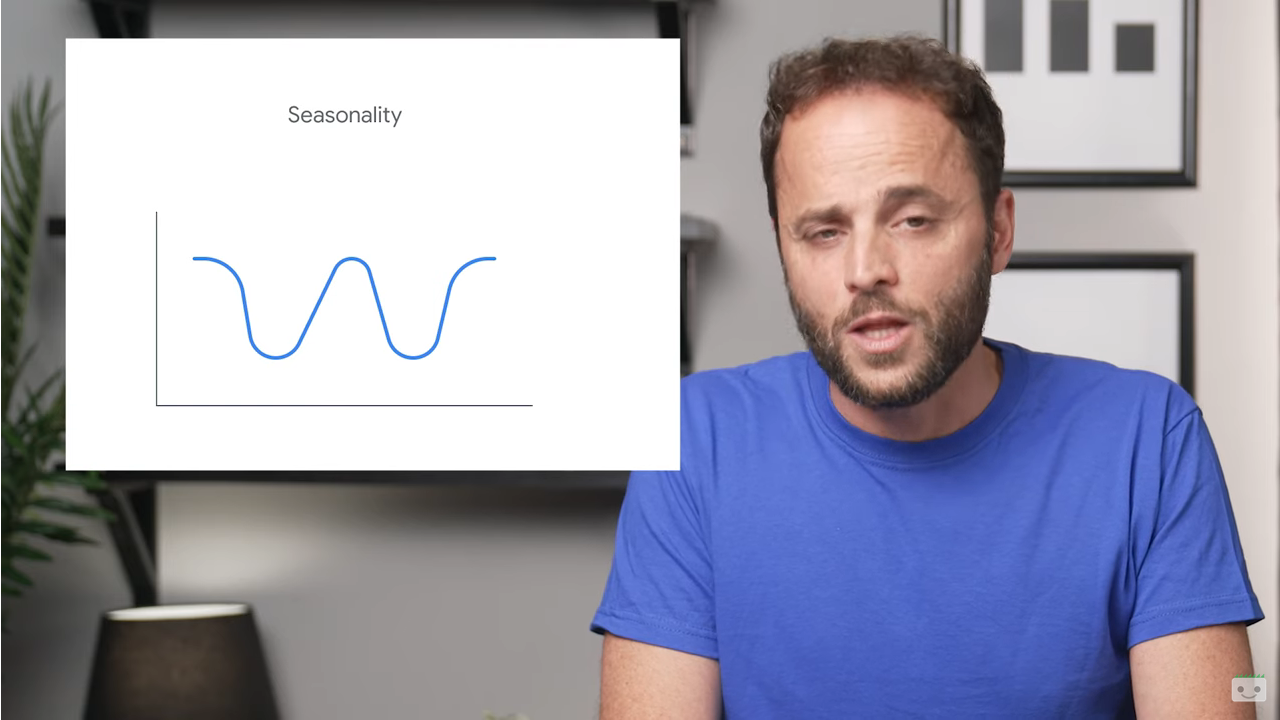

Then there are changes related to seasonality: trend-driven and cyclical oscillations that produce curves with recurring ups and downs.

Finally, the last graph shows a reporting problem (a glitch) in Search Console: a sharp change followed by a return to normal once the issue is corrected.

Put simply, recognizing the exact cause of a collapse in organic traffic is the first step towards solving the problem, as we said in this study on the differences between declines linked to an algorithmic change and those caused by manual actions.

The five causes of SEO traffic loss

According to Waisberg, there are five main causes of a drop in search traffic:

- Technical problems. Errors that can prevent Google from crawling, indexing or serving our pages to users, such as server unavailability, problems fetching the robots.txt file, pages not found and more. Such problems can affect the entire site (for example, if the site is down) or a single page (for example, a misplaced noindex tag, whose effect depends on Google crawling the page and therefore produces a slower drop in traffic). To detect the problem, Google suggests using the Crawl Stats report and the Page Indexing report to find out whether there is a corresponding spike in detected problems.

- Security issues. When a site is affected by a security threat, Google may alert users before they reach its pages with notices or interstitial pages, which can reduce search traffic. To find out if Google has detected a security threat on the website we can use the Security Issues report.

- Manual actions. If the site does not comply with Google’s guidelines, some of its pages or the entire domain may be omitted from Google Search results through a manual action. To see whether this is why our site is losing organic traffic, we can check Google’s spam policies and the Manual Actions report in Search Console, remembering that Google’s algorithms might act on policy violations even without a manual action.

- Algorithmic changes. Google “always improves the way it evaluates content and updates its algorithm accordingly,” says Waisberg, and core updates and other minor updates can change the performance of some pages in Google Search results. The suggestion is to self-assess our content to make sure it is useful, reliable and people-centered, always keeping in mind that “Google focuses on users” and that, therefore, improving content for the audience means going in the right direction.

- Changes in search interest. Sometimes, changes in user behavior “alter the demand for certain queries, due to a new trend or seasonality during the year”. Therefore, a site’s traffic may decrease simply because of external influences, such as changes in the search intent identified (and rewarded) by Google. To identify the queries that have experienced a drop in clicks and impressions, we can use the performance report, applying a filter to include only one query at a time (choosing the queries that receive the most traffic, the guide explains), and then check Google Trends to see whether the drop has affected only our site or the whole web.
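One of the page-level problems listed above, a misplaced noindex tag, lends itself to a quick mechanical check. The following is a minimal Python sketch, assuming we already have a page's HTML (and, optionally, its response headers) at hand; the function name `find_noindex` and the sample markup are our own illustrations and are not part of Google's guide.

```python
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collects the content of <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name.lower(): (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.append(attr_map.get("content", "").lower())


def find_noindex(html, headers=None):
    """Return the places ("meta", "header") where a noindex directive appears."""
    found = []
    parser = _RobotsMetaParser()
    parser.feed(html)
    if any("noindex" in directive for directive in parser.directives):
        found.append("meta")
    # The same directive can also be sent as an X-Robots-Tag HTTP header.
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag" and "noindex" in value.lower():
            found.append("header")
            break
    return found


# Hypothetical page, for illustration only.
sample_html = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(find_noindex(sample_html))
print(find_noindex("<html></html>", {"X-Robots-Tag": "noindex"}))
```

A check like this only tells us that the directive is present; whether it explains the drop still has to be confirmed in the Page Indexing report.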

Then there is another specific case in which a site may lose traffic, namely right after a migration: if we change the URLs of existing pages on the site, Waisberg clarifies, we may experience ranking fluctuations while Google recrawls and reindexes the domain. As a rule of thumb, it may take a few weeks for Google to notice the change on a medium-sized website, and even longer on larger sites (in the “hope” that we don’t run into common errors when migrating a site with URL changes).

How to diagnose a traffic drop in Google Search

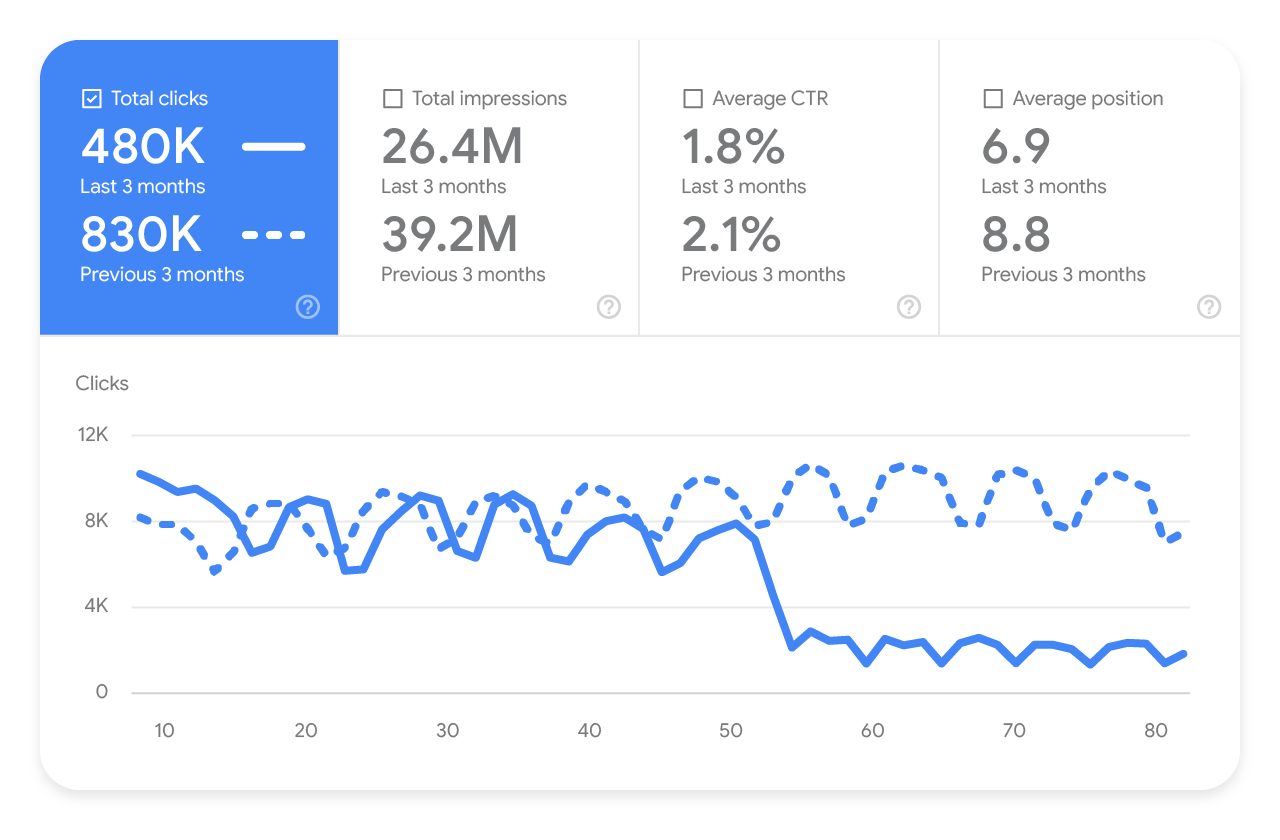

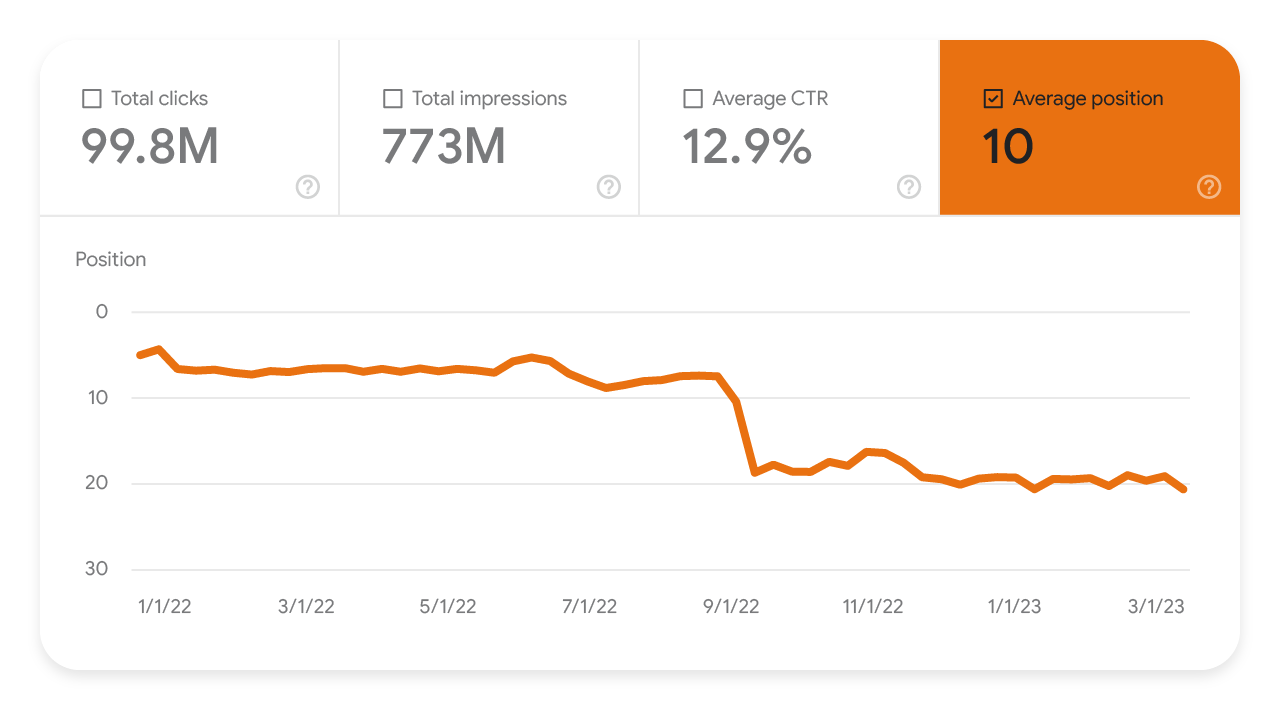

According to Waisberg, the most efficient way to understand what happened to a site’s traffic is to open its Search Console performance report and look at the main chart.

A graph “is worth a thousand words” and summarizes a lot of information, the expert adds, and the shape of the line alone already tells us much about the potential causes of the movement.

To perform this analysis, the article offers the following pointers:

- Change the date range to include the last 16 months, to analyze the traffic decline in context and rule out a seasonal decline that occurs every year around a holiday, or a trend.

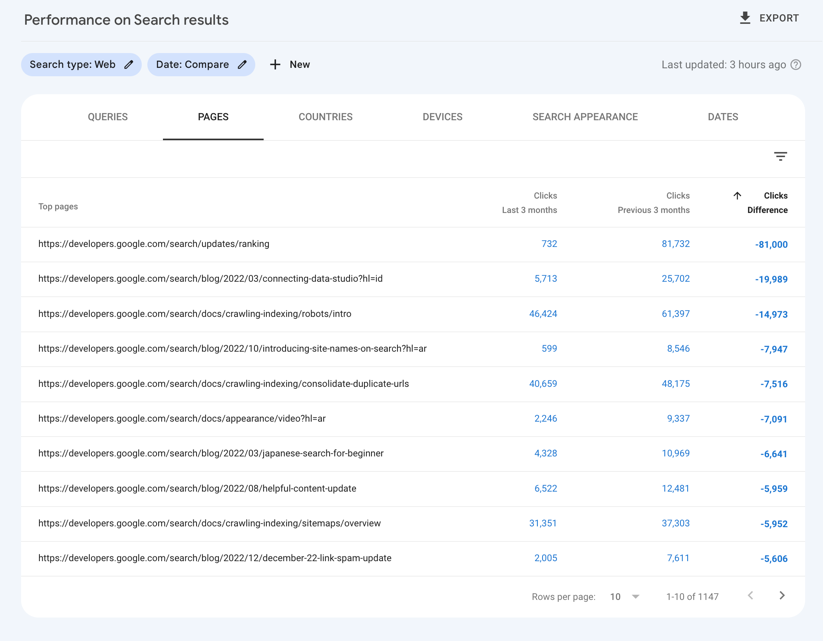

- Compare the drop period with a similar period, using the same number of days and preferably the same days of the week, to review exactly what has changed: click through all the tabs to find out whether the change concerned only specific queries, URLs, countries, devices or search appearances.

- Analyze the different search types separately, to understand whether the drop occurred in web search, in Google Images, in the Video tab or in the News tab.

- Monitor average position in search results. In general, Waisberg says, we should not focus too much on “absolute position”: impressions and clicks are ultimately the measure of a site’s success. However, if we notice a dramatic and persistent drop in position, we might begin to self-assess our content to see if it is useful and reliable.

- Look for patterns in the pages affected by the drop. The Pages table can help us spot patterns that could explain the origin of the drop. For example, it is important to find out whether the drop affected the entire site, a group of pages, or even just one very important page. We can do this by comparing the drop period with a similar period and looking at the pages that lost a significant amount of clicks, selecting “Click Difference” to sort by the pages that lost the most traffic. If we find that it is a site-wide problem, we can examine it further with the Page Indexing report; if the drop affects only a group of pages, we will instead use the URL Inspection tool to analyze those resources.
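The page-level comparison in the last point can also be reproduced offline on exported data. Here is a minimal Python sketch, assuming we have two page-to-clicks mappings, one for the comparison period and one for the drop period; the URLs, the numbers and the function names `click_differences` and `losses_by_section` are invented for illustration.

```python
from collections import defaultdict
from urllib.parse import urlparse


def click_differences(before, after):
    """Return (page, difference) pairs sorted so the biggest losses come first."""
    pages = set(before) | set(after)
    diffs = [(page, after.get(page, 0) - before.get(page, 0)) for page in pages]
    return sorted(diffs, key=lambda item: (item[1], item[0]))


def losses_by_section(diffs):
    """Sum the differences per first path segment to spot groups of affected pages."""
    totals = defaultdict(int)
    for page, diff in diffs:
        segments = [s for s in urlparse(page).path.split("/") if s]
        section = "/" + segments[0] if segments else "/"
        totals[section] += diff
    return dict(totals)


# Hypothetical exports: clicks per page in the comparison and in the drop period.
before = {
    "https://example.com/blog/a": 500,
    "https://example.com/blog/b": 300,
    "https://example.com/shop/x": 200,
}
after = {
    "https://example.com/blog/a": 120,
    "https://example.com/blog/b": 90,
    "https://example.com/shop/x": 210,
}

diffs = click_differences(before, after)
for page, diff in diffs:
    print(f"{diff:+6d}  {page}")
print(losses_by_section(diffs))
```

Grouping by the first path segment is just one possible way to tell a site-wide drop from one confined to a section; any other grouping that reflects the site's structure would work equally well.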

Studying the context of the site

Digging deep into the reports, with the support of Google Trends or an SEO tool like ours, can help us understand which category our traffic decline falls into and, in particular, find out whether this loss of traffic is part of a broader industry trend or is happening only to our pages.

There are two particular aspects to analyze regarding the context of action of our site:

- Changes in search intent or new products. If there are big changes in what people search for and how (we had a recent demonstration with the pandemic), people can start using different queries or use their devices for different purposes. Also, if we sell a specific brand or product online, there may be a new competing product that cannibalizes our search queries.

- Seasonality. As we know, some queries and searches follow seasonal trends; for example, those related to food, since “people look for diets in January, turkey in November and champagne in December” (at least in the United States), and different sectors have different levels of seasonality.

Analyzing the trends of queries

Waisberg suggests using Google Trends to analyze trends in different sectors, because it provides access to a largely unfiltered sample of actual search requests made to Google; in addition, the data is “anonymized, classified and aggregated”, allowing Google to show interest in topics from around the world or down to city level.

With this tool, we can check the queries that are driving traffic to our site to see if they have noticeable drops at different times of the year.

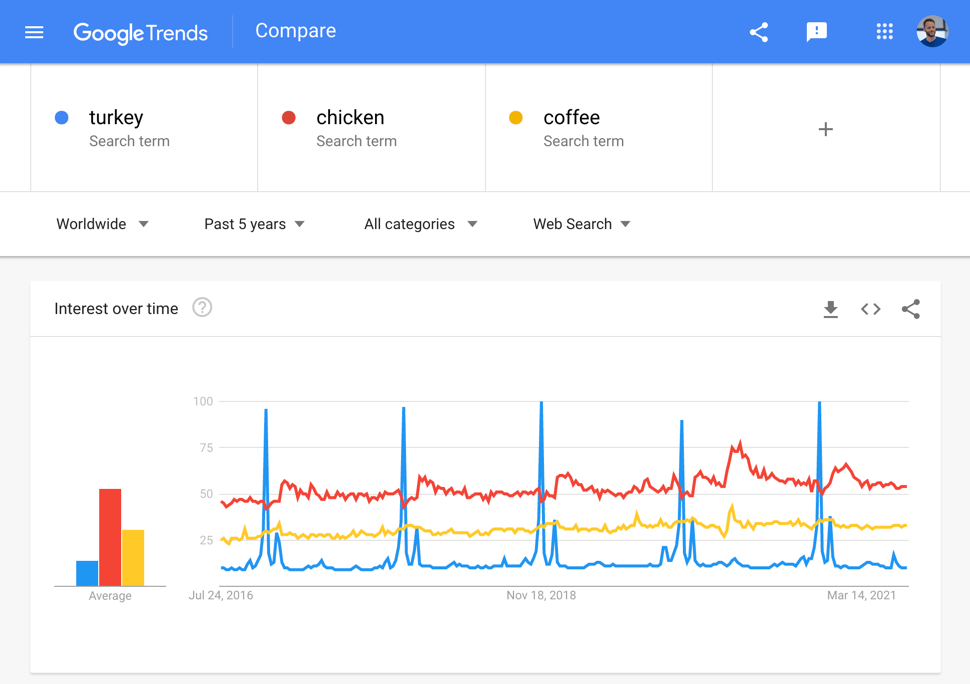

The example in the image shows three types of trend:

- Turkey has a strong seasonality, with a peak every year in November (Thanksgiving period, of which the turkey is the symbol dish).

- Chicken shows a certain seasonality, but less marked.

- Coffee is significantly more stable and “people seem to need it all year round”.
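The difference between a strongly seasonal query and a stable one can be summarized with a rough number, for instance the ratio between the busiest month and the average month. The sketch below is only an illustration of that idea: the monthly values are invented (Google Trends reports interest on a 0 to 100 scale) and the function name `peak_to_mean_ratio` is our own.

```python
from statistics import mean


def peak_to_mean_ratio(monthly_interest):
    """Ratio between the busiest month and the average month (1.0 means flat)."""
    return max(monthly_interest) / mean(monthly_interest)


# Invented monthly values (January to December), for illustration only.
interest = {
    "turkey": [10, 8, 8, 9, 8, 8, 9, 8, 9, 15, 100, 48],
    "coffee": [80, 78, 79, 80, 81, 79, 78, 80, 82, 83, 84, 86],
}

for query, values in interest.items():
    peak_month = values.index(max(values)) + 1  # 1 = January
    ratio = round(peak_to_mean_ratio(values), 2)
    print(query, "peak month:", peak_month, "ratio:", ratio)
```

A ratio close to 1 suggests stable demand all year round, while a high ratio points to a seasonal peak that should not be mistaken for a loss of rankings once it ends.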

Still within Google Trends we can discover two other interesting insights that could help us with search traffic:

- Monitor the top queries in our region and compare them with the queries from which we receive traffic, as shown in the Search Console performance report. If some queries are missing from our traffic, we should check whether the site has content on that topic and verify that it is crawled and indexed.

- Check the queries related to important topics: this can surface rising related queries and help us prepare the site properly, for example by adding related content.
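The first of these two checks is essentially a comparison between two lists. A minimal Python sketch, assuming we have copied the top regional queries from Google Trends and the queries with clicks from the performance report into two lists (both invented here, as is the function name `missing_queries`):

```python
def missing_queries(trending, own_queries):
    """Trending queries that bring no traffic to the site, in their original order."""
    normalized = {query.strip().lower() for query in own_queries}
    return [query for query in trending if query.strip().lower() not in normalized]


# Hypothetical data, for illustration only.
trending_in_region = ["turkey recipe", "Turkey brine", "how to roast a turkey"]
queries_with_clicks = ["turkey recipe", "chicken recipe"]

print(missing_queries(trending_in_region, queries_with_clicks))
```

The queries returned are candidates to review: either the site has no content on the topic, or the content exists and we need to verify that it is crawled and indexed.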

How to analyze traffic declines with Search Console

More recently, Daniel Waisberg himself devoted an episode of his Search Console Training series on YouTube to the main reasons behind traffic declines from Google Search, showing how to analyze decline patterns with the Search Console performance report and how to use Google Trends to examine general trends in an industry or business niche.

According to Google’s Search Advocate, becoming familiar with the main reasons behind organic traffic declines can help us move from monitoring to an exploratory approach toward finding the most likely hypothesis about what is happening, the crucial first step in trying to turn things around.

The 4 common patterns of traffic declines

Waisberg echoes the graphics and information from his article, adding some useful pointers.

1. Site-level technical problems / Manual actions

Site-level technical problems are errors that can prevent Google from crawling, indexing, or presenting pages to users. An example of a site-level technical problem is a site that is not working: in this case, we would see a significant drop in traffic, and not just from Google Search.

Manual actions, on the other hand, hit sites that do not follow Google’s guidelines and can involve some pages or the entire site, which may then be less visible in Search results.

2. Page-level technical problems / Algorithmic updates / Changes in user interest

The case of page-level technical problems, such as an unintentional noindex tag, is different. Since this problem depends on Google crawling the page, it generates a slower decline in traffic than site-level problems (in fact, the curve is less steep).

Declines due to algorithmic updates, which result from Google’s continuous work to improve the way it evaluates content, look similar. As we know, core updates can affect the performance of some pages in Google Search over time, and usually this does not take the form of a sharp drop.

Another factor that could lead to a slow decline in traffic is a disruption or change in search interest: sometimes, changes in user behavior cause a change in demand for certain queries, for example, due to the emergence of a new trend or a major change in the country you are observing. This means that site traffic may decrease simply because of external influences and factors.

3. Seasonality

The third graph identifies the effects of seasonality, which can be caused by many reasons, such as weather, holidays, or vacations. Usually, these events are cyclical and occur at specific regular intervals, that is, on a weekly, monthly, or quarterly basis.

4. Glitch

The last example shows reporting problems or glitches: if we see a major change followed immediately by a return to normal, it could be the result of a simple technical problem, and Google usually adds an annotation to the graph to inform us of the situation.
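The shapes described above can be told apart, very roughly, with a few lines of code. What follows is only an illustrative sketch: the function name `describe_drop`, the 7-day windows and the 50% and 90% thresholds are arbitrary assumptions of ours, not values given by Google, and seasonality cannot be judged from short series like these (it requires a much longer view of the data).

```python
from statistics import mean


def describe_drop(clicks, window=7):
    """Label a daily clicks series with a rough, heuristic description."""
    baseline = mean(clicks[:window])   # level before the change
    recent = mean(clicks[-window:])    # level at the end of the series
    recovered = recent >= 0.9 * baseline

    if recovered and min(clicks) < 0.5 * baseline:
        return "dip followed by recovery (possible glitch)"
    if recovered:
        return "no lasting drop"

    # Largest fall between two consecutive days, relative to the baseline.
    biggest_fall = max(a - b for a, b in zip(clicks, clicks[1:])) / baseline
    return "sudden drop" if biggest_fall >= 0.5 else "gradual decline"


# Invented series, for illustration only.
sudden = [100] * 10 + [20] * 10
gradual = [100 - 3 * day for day in range(20)]
glitch = [100] * 8 + [10, 12] + [100] * 10

for name, series in (("sudden", sudden), ("gradual", gradual), ("glitch", glitch)):
    print(name, "->", describe_drop(series))
```

A label like this is only a starting hypothesis: a sudden drop still has to be checked against technical problems and manual actions, and a gradual one against page-level issues, updates and changes in search interest.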

Tips for analyzing the decline pattern

After discovering the decline (and after the first, inevitable moments of panic…), we need to try to diagnose its causes and, according to Waisberg, the best way to understand what has happened to our traffic is to examine the Search Console performance report, which summarizes a lot of useful information and lets us see (and analyze) the shape of the variation curve, which in itself gives us some clues.

Specifically, the Googler advises us to expand the date range to include the last 16 months (to view beyond 16 months of data, we need to periodically export and archive the information), so that we can analyze the decline in traffic in context and ensure that it is not related to a regular seasonal event, such as a holiday or trend. Then, for further confirmation, we should compare the fall period with a similar period, such as the same month of the previous year or the same day of the previous week, to make it easier to find what exactly has changed (and preferably trying to compare the same number of days and the same days of the week).
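Choosing a comparison period with the same number of days and the same days of the week is easy to get wrong by hand. A minimal Python sketch of the date arithmetic, assuming the drop period is known; the dates and the function names `previous_period` and `same_period_last_year` are our own examples.

```python
from datetime import date, timedelta


def previous_period(start, end):
    """Same-length period just before [start, end], aligned on the same weekdays."""
    length = (end - start).days + 1
    # Shift back by whole weeks so Monday is compared with Monday, and so on.
    shift = timedelta(days=((length + 6) // 7) * 7)
    return start - shift, end - shift


def same_period_last_year(start, end):
    """Period 52 weeks earlier: roughly one year back, weekdays still aligned."""
    shift = timedelta(weeks=52)
    return start - shift, end - shift


# Hypothetical 10-day drop period that starts on a Monday.
drop_start, drop_end = date(2023, 9, 4), date(2023, 9, 13)
print(previous_period(drop_start, drop_end))
print(same_period_last_year(drop_start, drop_end))
```

Shifting by 52 weeks instead of a calendar year keeps the weekdays aligned, at the cost of a one-day (or two-day) offset from the exact same dates.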

In addition, we need to navigate through all the tabs to find out where the change occurred and, in particular, to understand whether it affected specific queries, pages, countries, devices, or search appearances. Analyzing the different types of search separately will help us understand whether the drop is limited to web search or whether it also affects Google Images, Video, or the News tab.

Investigate general trends in the business sector

The emergence of major changes in the industry or country of business may push people to change the way they use Google, that is, to search using different queries or use their devices for different purposes. Also, if we sell a specific brand or product online, there may be a new competing product that cannibalizes search queries.

In short, understanding traffic declines also comes strongly from analyzing general trends for the Web, and Google Trends provides access to “a largely unfiltered sample of actual search queries made to Google,” with insights that are also useful for image search, news, shopping, and YouTube.

In addition, we can also segment the data by country and category so that the data is more relevant to our website’s audience.

From a practical point of view, Waisberg suggests checking the queries that are driving traffic to the website to see if they exhibit noticeable declines at different times of the year: for example, food-related queries are very seasonal, because (in the United States, but not only there) people search for “diets” in January, “turkey” in November, and “champagne” in December, and basically different industries have different levels of seasonality.

Why is my site losing traffic?

The direct question of why a site may be losing organic traffic was also the focus of an interesting YouTube hangout in which John Mueller answered a question from a user about a problem plaguing his site: “Since 2017 we are gradually losing rankings in general and also on relevant keywords,” he says, adding that he has checked the backlinks received, half of which come from a single subdomain, and the content (auto-generated through a tool, which has not been used for a few years).

The user’s question is therefore twofold: he asks for Mueller’s advice on how to stop the gradual decline in traffic, and for a practical suggestion on whether to use the disavow tool to remove all the subdomain’s backlinks, fearing that such an action could further affect ranking.

Identifying the causes of the decline

Mueller starts with the second part of the question and answers bluntly: “If you remove with disavow a significant part of your natural links there can definitely be negative effects on ranking,” once again confirming the weight of links for ranking on Google.

Coming then to the more practical part, and thus to the possible causes of the drop in traffic and positions, the Googler thinks that the proposed content can be good (“if it is extra informative documents regarding your site, products or services you offer”) and therefore there is no need to block or remove it from indexing. Therefore, at first glance, it can be said that “neither links nor content are directly the causes of a site’s decline.”

SEO traffic, the reasons why a site loses ranking

Mueller articulates his answer more extensively, saying that in such cases, “when you’re seeing sort of a gradual decline over a fairly long period of time,” there may be some sort of “natural variation in ranking” that can be driven by five possible reasons.

- Changes in the Web ecosystem.

- Algorithm changes.

- Changes in user searches.

- Changes in users’ expectations of content.

- Gradual changes that do not result from big, dramatic Web site problems.

Google’s ranking system is constantly moving, and there are always those who go up and those who go down (one site’s ranking improvement is another’s ranking loss, inevitably). That being said, we can delve into the causes mentioned in the hangout.

When a site loses traffic and positions

Ecosystem changes, as interpreted by Roger Montti on SearchEngineLand, include situations such as increased competition or the “natural disappearance of backlinks” because linking sites have gone offline or removed the link, and in any case all factors external to the site that can cause loss of ranking and traffic.

The reasoning about algorithm changes is clearer: we know how frequent broad core updates are becoming, and each one redefines how a Web page’s relevance to a search query and to people’s search intent is assessed.

It is precisely the users who are the “key players” in the other two reasons mentioned: we are repeating this almost obsessively, but understanding people’s intentions is the key to making quality content and having a high-performing site, and so it is important to follow query evolutions but also to know the types of content that Google is rewarding (because users like them).

How to revive a site after traffic loss

Mueller also offered some practical pointers for getting out of declining traffic situations: the first suggestion is to roll up our sleeves and look more carefully at the overall issues of the site, looking for areas where significant improvements can be made and trying to make content more relevant to the types of users that represent our target audience.

More generally, Google’s Senior Webmaster Trends Analyst tells us that it is often necessary to take a “second look” at the site and its context, not to stop at what might appear to be the first cause of the decline (in the specific case of the old hangout, backlinks) but to study in depth and evaluate all the possible factors influencing the decline in ranking and traffic.

Algorithms, penalties and quality for Google, how to understand SEO traffic declines

Far more comprehensive and in-depth is the advice that came from Pedro Dias, who worked in the Google Search Quality team between 2006 and 2011 and who, in an article published in Search Engine Land, walks us through the right approach to situations of SEO traffic drops. The starting point of his reasoning is that it is crucial to really understand whether traffic drops because of an update or whether other factors such as manual actions, penalties, etc. are involved, because only by knowing their differences and the consequences they generate (and thus identifying the trigger of the problem) can we react with the right strategy.

Understanding how algorithms and manual actions work

The former Googler cautions that his perspective may by now be outdated, as things at Google change at a very rapid pace; nonetheless, on the strength of his experience working at “the most searched input box on the entire Web,” Dias offers useful and interesting insights into “what makes Google work and how search works, with all its nuts and bolts.”

Specifically, he analyzes “this tiny field of input and the power it wields over the Web and, ultimately, over the lives of those who run Web sites,” and clarifies some concepts, such as penalties, to teach us how to distinguish the various forms and causes by which Google can make a site “crash.”

Google’s algorithms are like recipes

“Unsurprisingly, anyone with a Web presence usually holds their breath whenever Google decides to make changes to its organic search results,” writes the former Googler, who then reminds us that, “being primarily a software engineering company, Google aims to solve all its problems on a large scale,” not least because it would be “virtually impossible to solve the problems Google faces solely with human intervention.”

For Dias, “algorithms are like recipes: a set of detailed instructions in a particular order that aim to complete a specific task or solve a problem.”

Better small algorithms to solve one problem at a time

The probability of an algorithm “producing the expected result is indirectly proportional to the complexity of the task it has to complete”: this means that “most of the time, it is better to have several (small) algorithms that solve a (large) complex problem, breaking it down into simple subtasks, than a giant single algorithm that tries to cover all possibilities.”

As long as there is an input, the expert continues, “an algorithm will run tirelessly, returning what it was programmed to do; the scale at which it operates depends only on available resources, such as storage, processing power, memory and so on.”

There are “quality algorithms, which are often not part of the infrastructure,” but there are also “infrastructure algorithms that make decisions about how content is crawled and stored, for example.” Most search engines “apply quality algorithms only at the time of serving search results: that is, the results are evaluated only qualitatively, at serving time.”

Algorithmic updates for Google

At Google, quality algorithms “are seen as filters that target good content and look for quality signals throughout Google’s index,” which are often provided at the page level for all websites and can then be combined, producing “scores at the directory or hostname level, for example.”

For Web site owners, SEOs, and digital marketers, in many cases, “the influence of algorithms can be perceived as a penalty, especially when a Web site does not fully meet all quality criteria and Google’s algorithms decide instead to reward other, higher quality Web sites.”

In most of these cases, what ordinary users see is “a drop in organic performance,” which does not necessarily result from the site being “pushed down,” but more likely “because it stopped being rated incorrectly, which can be good or bad.”

What is quality

To understand how these quality algorithms work, we must first understand what quality is, Dias rightly explains: as we often say when talking about quality articles, this value is subjective, it lies “in the eye of the beholder.”

Quality “is a relative measure within the universe in which we live, depending on our knowledge, experience and environment,” and “what is quality for one person, probably may not be quality for another.” We cannot “tie quality to a simple context-free binary process: for example, if I am dying of thirst in the desert, do I care if a water bottle has sand at the bottom?” the author adds.

For websites, it is no different: quality is, fundamentally, delivering “performance over expectations or, in marketing terms, Value Proposition.”

Google’s ratings of quality

If quality is relative, we must try to understand how Google determines what is responsive to its values and what is not.

In fact, says Dias, “Google does not dictate what is and what is not quality: all the algorithms and documentation Google uses for its Webmaster Guidelines (which have now merged into the Google Search Essentials guidelines, ed.) are based on real user feedback and data.” It is also for this reason, we might add, that Google employs a team of more than 14 thousand Quality Raters, who are called upon to assess precisely the quality of the search results provided by the algorithms, using as a compass the Google quality rater guidelines, which also change and are updated frequently (the last time in July 2022).

We also said this when talking about search journey: when “users perform searches and interact with websites on the index, Google analyzes user behavior and often runs multiple recurring tests to make sure it is aligned with their intent and needs.” This process “ensures that the guidelines for websites issued by Google align with what search engine users want, they are not necessarily what Google unilaterally wants.”

Algorithm updates chase users

This is why Google often states that “algorithms are made to chase users,” recalls the article, which then similarly urges sites to “chase users instead of algorithms, so as to be on par with the direction Google is moving in.”

In any case, “to understand and maximize a website’s potential for prominence, we should look at our websites from two different perspectives, Service and Product.”

The website as a service

When we look at a website from a service point of view, we should first analyze all the technical aspects involved, from code to infrastructure: for example, among many other things “how it is designed to work, how technically robust and consistent it is, how it handles the communication process with other servers and services, and then again the integrations and front-end rendering.”

This analysis alone is not enough because “all the technical frills don’t create value where and if value doesn’t exist,” Pedro Dias points out, but at best “add value and make any hidden value shine through.” For this reason, advises the former Googler, “you should work on the technical details, but also consider looking at your Web site from a product perspective.”

The site as a product

When we look at a Web site from a product perspective, “we should aim to understand the experience users have on it and, ultimately, what value we are providing to distinguish ourselves from the competition.”

To make these elements “less ethereal and more tangible,” Dias resorts to a question he asks his clients, “If your site disappeared from the Web today, what would your users miss that they wouldn’t find on any of your competitors’ Web sites?” It is from the answer that one can tell “whether one can aim to build a sustainable and lasting business strategy on the Web,” and to help us better understand these concepts he provides us with this image: Peter Morville’s User Experience Honeycomb, modified to include a reference to the specific concept of E-A-T that is part of Google’s Quality Rater Guidelines.

Most SEO professionals look deeply into the technical aspects of UX such as accessibility, usability, and findability (which are, indeed, about SEO), but tend to leave out the qualitative (more strategic) aspects, such as Utility, Desirability, and Credibility.

At the center of this hive is the Value, which can only be “fully achieved when all other surrounding factors are met”: therefore, “applying this to your web presence means that unless you look at the whole holistic experience, you will miss the main goal of your website, which is to create value for you and your users.”

Quality is not static, but evolving

Complicating matters further is the fact that “quality is a moving target,” not static, and therefore a site that wants to “be perceived as quality must provide value, solve a problem or need” consistently over time. Similarly, Google must also evolve to ensure that it is always providing the highest level of effectiveness in its responses, which is why it is “constantly running tests, pushing quality updates and algorithm improvements.”

As Dias says, “If you start your site and never improve it, over time your competitors will eventually catch up with you, either by improving their site’s technology or by working on the experience and value proposition.” Just as old technology becomes obsolete and deprecated, “over time, innovative experiences also tend to become ordinary and most likely fail to exceed expectations.”

To clarify the concept, the former Googler gives an immediate example: “In 2007, Apple conquered the smartphone market with a touchscreen device, but nowadays, most people will not even consider a phone that does not have a touchscreen, because it has become a given and can no longer be used as a competitive advantage.”

And SEO also cannot be a one-off action: it is not that “after optimizing the site once, it stays optimized permanently,” but “any area that sustains a company has to improve and innovate over time in order to remain competitive.”

Otherwise, “when all this is left to chance or we don’t give the attention needed to make sure all these features are understood by users, that’s when sites start running into organic performance problems.”

Manual actions can complement algorithmic updates

It would be naive “to assume that algorithms are perfect and do everything they’re supposed to do flawlessly,” admits Dias, according to whom the great advantage “of humans in the battle against machines is that we can deal with the unexpected.”

That is, we have “the ability to adapt and understand abnormal situations, and understand why something may be good even though it might seem bad, or vice versa.” In other words, “humans can infer context and intention, whereas machines are not so good.”

In the field of software engineering, “when an algorithm detects or misses something it shouldn’t have, it generates what is referred to as a false positive or false negative, respectively”; to apply the right corrections, one needs to identify “the output of false positives or false negatives, a task that is often best done by humans,” and then usually “engineers set a confidence level (threshold) that the machine should consider before requesting human intervention.”
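The idea of a confidence threshold can be illustrated with a tiny, purely hypothetical Python sketch. It does not describe Google’s systems: the function name `route`, the 0.8 threshold and the three outcomes are assumptions made only to show how uncertain cases might be handed over to a person.

```python
def route(score, threshold=0.8):
    """Decide what to do with a classifier score between 0 and 1.

    Scores close to 0 or 1 are treated as confident; anything in between
    is sent to a human reviewer.
    """
    if score >= threshold:
        return "flag automatically"
    if score <= 1 - threshold:
        return "leave untouched"
    return "send to human review"


# Hypothetical scores, for illustration only.
for score in (0.95, 0.55, 0.05):
    print(score, "->", route(score))
```

Raising the threshold sends more borderline cases to people, which reduces false positives and false negatives at the cost of more manual work.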

When and why manual action is triggered

Inside Search Quality there are “teams of people who evaluate results and look at websites to make sure the algorithms are working properly, but also to intervene when the machine is wrong or cannot make a decision,” reveals Pedro Dias, who then introduces the figure of the Search Quality Analyst.

The job of the Search Quality Analyst is “to understand what is in front of them, examining the data provided, and making judgments: these judgment calls may be simple, but they are often supervised, approved or rejected by other Analysts globally, in order to minimize human bias.”

This activity frequently results in static actions aimed at (but not limited to):

- Creating a data set that can later be used to train algorithms;

- Addressing specific, impactful situations where algorithms have failed;

- Reporting to website owners that specific behaviors fall outside the quality guidelines.

These static actions are often referred to as manual actions, and they can be triggered for a wide variety of reasons, although “the most common goal remains countering manipulative intent, which for some reason has successfully exploited a flaw in the quality algorithms.”

The disadvantage of manual actions “is that they are static and not dynamic like algorithms, i.e., the latter run continuously and react to changes on websites, based only on recrawling or algorithm refinement.” In contrast, “with manual actions the effect will remain for as long as expected (days/months/years) or until a reconsideration request is received and successfully processed.”

The differences between the impact of algorithms and manual actions

Pedro Dias also provides a useful summary table comparing algorithms and manual actions:

| Algorithms | Manual Actions |
| --- | --- |
| Aim to resurface value | Aim to penalize behavior |
| Large-scale work | Address specific scenarios |
| Dynamic | Static |
| Fully automated | Manual + semiautomatic |
| Indefinite duration | Defined duration (expiration date) |

Before applying any manual action, a search quality analyst “must consider what he or she is addressing, assess the impact and the desired outcome,” answering questions such as:

- Does the behavior have manipulative intent?

- Is the behavior egregious enough?

- Will the manual action have an impact?

- What changes will the impact produce?

- What am I penalizing (widespread or single behavior)?

As the former Googler explains, these are issues that “must be properly weighed and considered before even considering any manual action.”

What to do in case of manual action

As Google moves “more and more toward algorithmic solutions, leveraging artificial intelligence and machine learning to both improve results and combat spam, manual actions will tend to fade and eventually disappear completely in the long run,” Dias argues.

In any case, if our website has been affected by manual actions, “the first thing you need to do is figure out what behavior triggered it,” referring to “Google’s technical and quality guidelines to evaluate your website against them.”

This is a job that needs to be done calmly and thoroughly, because “this is not the time to let a sense of haste, stress and anxiety take over”; it is important to “gather all the information, clean up errors and problems on the site, fix everything and only then send a request for reconsideration.”

How to recover from manual actions

A widespread but mistaken belief is “that when a site is affected by a manual action and loses traffic and ranking, it will return to the same level once the manual actions are revoked.” That ranking was in fact (also) the result of the impermissible practices that triggered the penalty, so there is no reason to expect the site to return exactly where it was once the violations are cleaned up and the manual action is revoked.

However, the expert is keen to point out, “any Web site can be restored from almost any scenario,” and cases where a property is deemed “unrecoverable are extremely rare.” It is important, however, that recovery begin with “a full understanding of what you are dealing with,” with the realization that “manual actions and algorithmic problems can coexist” and that “sometimes, you won’t start to see anything before you prioritize and solve all the problems in the right order.”

In fact, “there is no easy way to explain what to look for and the symptoms of each individual algorithmic problem,” and so “the advice is to think about your value proposition, the problem you are solving or the need you are responding to, not forgetting to ask for feedback from your users, inviting them to express opinions about your business and the experience you provide on your site, or how they expect you to improve.”

Takeaways on declines caused by algorithms or manual actions

In conclusion, Pedro Dias leaves us with four key takeaways that summarize his (extensive) contribution to the cause of sites penalized by algorithmic updates or manual actions.

- Rethink your value proposition and competitive advantage, because “having a Web site is not in itself a competitive advantage.”

- Treat your Web site like a product and constantly innovate: “if you don’t move forward, you will be outdone, whereas successful sites continually improve and evolve.”

- Research your users’ needs through User Experience: “the priority is your users, and then Google,” so it’s helpful to “talk and interact with your users and ask their opinions to improve critical areas.”

- Technical SEO is important, but by itself it won’t solve anything: “If your product/content doesn’t have appeal or value, it doesn’t matter how technical and optimized it is, so it’s crucial not to lose the value proposition.”