Google I/O 2023: here comes SGE, with more and more AI in the future of Search

Anticipation was high for the 15th edition of Google I/O, the developer-focused event where, by tradition, the Mountain View giant previews what it has been working on across all the products and services in its ecosystem. This year the spotlight was mainly on the hottest topic of the moment, Artificial Intelligence and its applications, and curiosity about Big G’s moves on this front was considerable. Expectations were not disappointed: the central theme of Google I/O 2023 was indeed the presentation of advances in artificial intelligence aimed at creating more useful products and features, starting with a new search engine model called Search Generative Experience.

Google goes all-in on artificial intelligence in its services and products

A new Pixel hardware lineup, custom wallpapers on Android, more powerful editing tools in Google Photos, but above all many announcements about how the latest AI systems are being applied across Big G’s software: that is Google I/O 2023 in a nutshell, building on what was already presented at Google I/O 2022 and earlier editions.

Indeed, as Google CEO Sundar Pichai said in his opening remarks, Google set out “seven years ago on a journey as an AI-first company,” but today we are at an exciting turning point, and the company wants to seize the opportunity to “make AI even more useful for people, for companies, for communities, for everyone,” with an approach to applying this technology that appears (at least in its intentions and premises) more responsible and careful than that of some competitors.

Pichai points out that Google today has a number of products “that each serve more than half a billion people and businesses, and six of those products each serve more than 2 billion users,” and this offers “so many opportunities to fulfill our timeless mission, which seems more relevant with each passing year: to organize the world’s information and make it universally accessible and useful.” Today and in the future, making AI useful for everyone is the most profound way to continue that mission, and Google is pursuing it in four important ways:

- By improving people’s knowledge and learning and helping them deepen their understanding of the world.

- By increasing creativity and productivity so that everyone can express themselves and accomplish their goals.

- By enabling developers and companies to create their own transformative products and services.

- By building and deploying AI responsibly, so that everyone can benefit equally.

The top 10 announcements at Google I/O 2023

This theoretical framework translates into concrete work to make Google’s products “radically more useful” through generative AI, which is helping to reinvent all of Google’s core services, including Search, in keeping with a “bold and responsible” approach.

In particular, these ten announcements that came from the May 10, 2023 event (and cited in the company’s official communications as “the most significant”) give us a sense of what the immediate future of Google and some of its key products will look like:

- New Pixel products: the announcements include the Pixel Fold (Google’s first foldable phone, which opens into a compact tablet), the Google Pixel 7a (which for the first time brings many of the must-have features of the company’s premium phones to the A-series), and the new Pixel Tablet.

- New features in the Android ecosystem powered by Artificial Intelligence, starting with personalization and self-expression.

- Magic Editor in Google Photos, with AI editing features for reimagining photos even without professional skills.

- More immersive Maps thanks to AI, in particular an immersive view of routes that shows each segment of a trip before you leave.

- Writing assistance in Gmail, with a much more powerful generative model that can draft replies to emails automatically.

- Expansion of Bard, Google’s conversational AI, which becomes more global, more visual, and more integrated.

- Introduction of PaLM 2, a next-generation language model that has improved multilingual, reasoning, and coding capabilities.

- Stronger visual search with Lens. Advances in computer vision have introduced new ways to search visually: even when we don’t have the words to describe what we’re looking for, we can search anything we see with Google Lens, which Pichai says is already used for more than 12 billion visual searches each month, a 4-fold increase in just two years. Combining Lens with multimodality has also led to multisearch, which lets us search using an image and text together.

- Tools to identify generated content, through watermarking and metadata. Watermarking “embeds information directly into the content in ways that are retained even through modest image editing,” and Google’s model is trained to include watermarks and other techniques from the outset, to limit the risk of a synthetic image being mistaken for a real one. Metadata allows content creators to attach additional context to original files, providing more information about an image, and Google’s AI-generated images will also carry this metadata.

- Generative AI to enhance Search and reinvent what a search engine can do.

The potential of the new AI model PaLM 2

Naturally, our attention focuses primarily on this last point and on the applications that promise to transform the Google search experience, and could therefore affect the visibility of our sites on the search engine.

Google’s move was inevitable, given that all the other big players had already moved to embrace the latest frontiers of Artificial Intelligence, starting with Microsoft, which boosted its Bing search engine with ChatGPT; what perhaps nobody expected was a genuine Search “revolution” (at least in words).

But let’s take things in order. Key to this process is the new Pathways Language Model (PaLM 2), the core that powers the various services in Google’s ecosystem. Its main features, which demonstrate its solidity and scope, include its breadth (it supports more than 100 languages), its ability to carry out mathematical operations and complex reasoning (thanks to extensive training on scientific and mathematical texts), and its ability to generate code in different programming languages such as Python and JavaScript, but also Prolog, Fortran and Verilog.

Also presented at the event were two specialized versions of this model, Med-PaLM 2 and Sec-PaLM, each the result of specific training in a rather sensitive area: the former focuses on health, thanks to a specially designed dataset, and will support medical professionals, while the latter specializes in cybersecurity use cases (for example, it is better at detecting malicious scripts and can help security experts understand and resolve threats). In addition, Pichai confirmed progress on Gemini, the next-generation model currently under development, which was created from the ground up to be multimodal, highly efficient at tool and API integrations, and “built to enable future innovations such as memory and planning.”

How Search changes with AI

And here is the highlight of Google I/O 2023: the official unveiling of the new search engine, or rather the AI-powered version of Google Search, currently called SGE or Search Generative Experience. It was the focus of several talks at the event (in addition to Pichai, Elizabeth Reid, Vice President & GM of Search, spoke about it at length) and of a dedicated document shared by the company.

For decades, artificial intelligence has helped Google Search, enabling it to reimagine the way people interact with and discover information, improve the quality and relevance of results, and support a healthy, open web. Indeed, as Mountain View recalls (and proudly claims), one of the first applications of machine learning in a Google product “was our first spelling correction system in 2001, over two decades ago,” which helped people get relevant results faster despite spelling errors, misspellings or typos.

In recent years, advances in artificial intelligence have greatly improved Search: in 2019, Google introduced Bidirectional Encoder Representations from Transformers (BERT) into search ranking, bringing a huge leap in search quality. Instead of trying to understand words individually, BERT helps Search understand words in the context in which they are used, enabling people to make longer, more conversational queries and connecting them with more relevant and useful results.

Since then, Google has applied even more powerful large language models (LLMs) to Search, such as the Multitask Unified Model (MUM), a multimodal model 1,000 times more powerful than BERT, trained across 75 different languages and many different tasks. MUM has been used in dozens of search features to improve quality and to help both the engine and its users understand and organize information in new ways, such as finding related topics in videos even when those topics are not explicitly mentioned.

Still, this has only scratched the surface of what is possible with generative AI, and Google is now testing those possibilities through its new Search Labs program, in which SGE (Search Generative Experience) is the first experiment open for testing.

The Google of the future: here comes SGE, Search Generative Experience

In the simplest definition, SGE is a first step toward transforming the search experience with generative artificial intelligence. Using SGE, people will “notice their search results page with already familiar web results organized in a new way to help them get more out of a single search.”

Specifically, with SGE, people will be able to:

- Ask completely new types of questions they never thought Search could answer.

- Quickly get information about a topic, with links to relevant results to explore further.

- Ask follow-up questions naturally in a new conversational mode.

- Get more things done easily, such as generating creative ideas and drafts directly in Search.

Importantly, the document makes clear that “SGE is rooted in the foundations of Search, so it will continue to connect people to the richness and vibrancy of web content and strive for the highest levels of information quality.”

How the new Google SGE works

The document also offers further previews of (and glimpses into) this new Google-branded search experience.

For example, one of SGE’s features will be AI-powered snapshots that help people quickly get the gist of a topic, with factors to consider and a concise summary of relevant information and insights.

These snapshots serve as a starting point from which people can explore a wide range of content and perspectives on the Web: SGE will display links to resources that corroborate the information contained in the snapshot, thus enabling people to verify the information themselves and delve deeper. This will allow people to drill down and discover a diverse range of content, from publishers, creators, retailers, companies, and more, and use the information found to further their own activities.

Another important feature is the conversational mode (reminiscent of Bing’s…): people can tap to “ask a follow-up” or select one of the suggested next steps under the snapshot, which opens a mode where they can naturally ask Google more questions about the topic they are exploring. Beyond the concise information generated by artificial intelligence, people will be able to go into greater depth through links to resources to explore.

Conversational mode is particularly useful for in-depth questions and for more complex or evolving information journeys: it uses artificial intelligence to understand when a person is looking for something related to a previous question and carries the context over, rephrasing the question to better reflect their intent. In this mode, people will see the links under SGE change over the course of the conversation, so they can easily explore the most relevant content on the web.

The third aspect highlighted by the document is vertical experiences, that is, information journeys in verticals such as shopping or local search, which often have multiple angles or dimensions to explore. In shopping, for example, SGE surfaces insights so that people can make considered and complex purchase decisions more easily and quickly. Specifically, for product searches SGE generates a snapshot of noteworthy factors to consider and a range of product options, along with product descriptions that include relevant and up-to-date reviews, ratings, prices, and product images. This is possible because SGE is built on Google’s Shopping Graph, which Google describes as the world’s most comprehensive and constantly evolving dataset of products, sellers, brands, reviews and inventory: it contains more than 35 billion product listings, more than 1.8 billion of which are updated every hour, a sharp increase over the figures presented last year.

Similarly, SGE will provide context on local places, using artificial intelligence-based suggestions that facilitate comparison and exploration of options.

A great deal of attention has also been paid to advertising, and Google clarifies (in case there was any need) that “search ads will continue to play a key role,” serving as additional sources of useful information and helping people discover millions of businesses online. So, even with SGE, search ads will continue to appear in dedicated ad slots on the SERP (with a label that distinguishes them from organic results), and advertisers will still be able to reach potential customers along their search journey.

These new generative AI features can help people continue their journeys in more creative ways, going beyond simply finding information to putting it to practical use. For Google, this means, for example, that a simple information journey, such as searching for a new e-bike, can easily lead to help writing the perfect social media post to show it off. In this initial phase, however, these creative capabilities will be limited, because Google has intentionally placed greater emphasis on safety and quality.

Fundamentally, however, the commitment to the best possible user experience remains: for many years “we’ve been evolving Search’s user interface (UI) to make it more useful and accessible,” Mountain View says, and to bring the power of artificial intelligence into Search in a user-friendly way “we built SGE as an integrated experience, applying what we learned about user behavior.” AI-powered snapshots offer easy-to-access resources and a recognizable interface for links that allow deeper exploration on both desktop and mobile, bringing the power of generative AI directly into Google Search. In addition, users can switch to conversational mode through carefully designed cues and highlighted states that show how to use this new paradigm. Color also plays an important role in helping people clearly understand that SGE is a new way to interact with Search: for example, the color of the snapshot box will change dynamically, and the use of color will evolve to better reflect specific journey types and the intent of the query itself.

The technical information about AI in Google

Currently, SGE is powered by a variety of LLMs, including an advanced version of MUM and PaLM 2, which allows Google to further optimize and refine the models to meet users’ specific needs and help them along their information journey, the company explains.

Although SGE relies on LLMs, they have been trained specifically to carry out search-specific tasks, including identifying high-quality web results that corroborate the information presented in the output; these models work in tandem with the core ranking systems to provide useful, reliable results. By restricting SGE to these specific tasks, including corroboration, Google believes it can significantly mitigate some of the known limitations of LLMs, such as hallucinations or inaccuracies, by drawing on its existing search quality systems and its ability to identify and rank reliable, high-quality information.

Speaking of checks, the official document specifies that Google has established a set of criteria and processes to develop this product responsibly, relying also on human input and evaluation. For example, the parameters analyzed to verify SGE outputs include attributes such as length, format and clarity, in addition to those already used to ensure the quality of Search. In particular, this means that Search Quality Raters are also asked to assess the quality of the output and results displayed in SGE: their ratings do not directly affect SGE output (just as they do not affect Search rankings), but are used to train the LLMs and improve the overall experience. In addition, following the process already used for significant launches in Search, Google analyzes results across multiple sets of broad, representative queries and conducts more targeted studies to confirm that answers meet quality thresholds. This is especially true for subject areas that may be more prone to known quality risks (such as safety or inclusion issues), or that are more complex and nuanced and therefore require stronger protections and responses.

In this regard, Google further specifies that it intends to maintain high standards for the information it provides, which must be reliable, useful, and of high quality: it has therefore built a custom integration of generative AI into Search, rooted in Search’s ranking and quality systems (refined over decades), and developed a careful, rigorous evaluation process to ensure that each update keeps the quality of the information high.

Just as ranking systems are designed not to shock or offend people unexpectedly with potentially harmful, hateful, or explicit content, SGE is designed not to show such content in its responses. It also holds itself to an even higher standard when generating responses to queries where information quality is paramount, namely “Your Money or Your Life” (YMYL) topics such as finance, health, or civic information, areas where people expect an even higher degree of trust in the results. As with Search, for YMYL topics SGE places even more emphasis on producing informative responses corroborated by reliable sources; in addition, the model has been trained to include disclaimers in its results where appropriate.

For example, for health-related questions where an answer is shown, the model emphasizes that people should not rely on the information as if it were medical advice, and that they should turn to professionals in the field for personalized care.

Then there are topics for which SGE is designed not to generate a response, particularly when there is a lack of high-quality or reliable information about them on the web. For these areas, sometimes referred to as “data voids” or “information gaps,” where the systems have less confidence in their answers, SGE is designed not to generate a snapshot. The same applies to explicit or dangerous topics and to queries that indicate a vulnerable situation, such as self-harm queries, for which reliable resources, such as the appropriate hotlines, automatically appear at the top of the results.

To complete the technical information, Google shared two other distinctive aspects of SGE. First, it has intentionally trained SGE to refrain from reflecting a persona: even though LLMs can generate responses that seem to express opinions or emotions as if drawn from human experience, SGE is not designed to respond in the first person, and it presents objective, neutral responses corroborated by web results.

Second, another intentional choice concerns the fluidity of responses in SGE, both in AI-powered snapshots and in conversational mode. Here too Google has taken a “neutral” approach, limiting the models’ latitude to produce fluid, human-sounding responses, since greater fluidity often comes with a greater likelihood of inaccuracies in the output. Evaluations also found that fluid, conversational responses seem more “reliable” to human evaluators, who are therefore less likely to catch errors. To preserve people’s trust in Search, Google therefore decided to limit SGE’s conversationality: it is not meant to be a “creative and free brainstorming partner,” but a “more factual tool, with pointers to relevant resources.”

SGE’s current known limitations

Despite the safeguards applied, there remain “known limitations” both in LLMs in general and in SGE, at least in its initial experimental form. In particular, Google highlights five main problems (loss patterns) that emerged during evaluations and adversarial machine-learning tests, while anticipating that model updates and upcoming developments should bring progress in this area.

- Misinterpretation during corroboration. There are reported cases where SGE correctly identified information to corroborate its snapshot, but slight errors in interpreting the language changed the meaning of the output.

- Hallucination. Like all LLM-based experiences, SGE can sometimes misrepresent facts or misidentify insights.

- Bias. Because it is trained to corroborate responses with high-quality resources (which are typically those ranked high on Google), SGE may show a snapshot reflecting a narrower range of perspectives than is available on the web, and thus reproduce the biases and distortions in those results. This might give the impression that the model has learned the biases, but it is more likely reflecting biases already present in the top results. In fact, Google admits, this already happens in current search results: for example, influential organizations and media outlets often do not add the qualifier “male” when writing about men’s sports, so generic queries about a sport may be skewed toward male players or teams, even when information about female players or teams is an equally or even more accurate answer to the user’s query.

- Opinionated content implying a persona. Although, as mentioned, SGE is designed to maintain a neutral and objective tone in its generative results, there may be cases where the output reflects opinions that exist on the web, giving the impression that the model has a personality.

- Duplication of or contradiction with existing search features. Because SGE is integrated into Search alongside other results and features on the results page, its output may appear to contradict other information on the page. For example, a featured snippet may highlight the perspective of a single source, while SGE presents a synthesized perspective corroborated across a range of results.

Practical examples of how SGE works

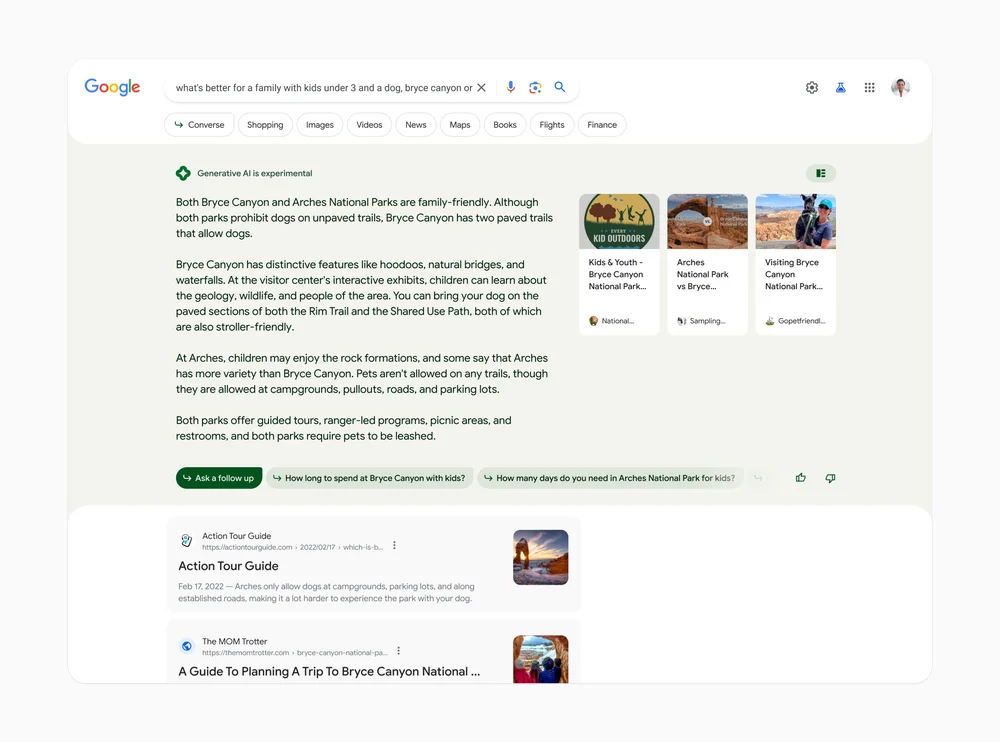

In her talk, Elizabeth Reid showed SGE in action, starting with a fairly complex query: “what is best for a family with children under 3 and a dog, Bryce Canyon or Arches” (two U.S. national parks). Normally, we would have to break this query down into smaller questions, sort through the vast amount of information available, and piece things together on our own; with generative AI, Search can do some of this heavy lifting for us.

Search directly displays an AI-powered snapshot of key information to consider, with links to dig deeper (as seen in the image shared by Barry Schwartz). Below this snapshot is a series of suggested next steps, including follow-up questions such as “How much time should you spend at Bryce Canyon with kids?”; tapping one of them opens the new conversational mode, where we can ask Google more about the topic we are exploring. Context is carried over from question to question to help us continue exploring more naturally, and the results also offer useful starting points into web content and a range of perspectives for further insights.

What does SGE mean for SEO?

We raise this question provocatively, because it is still too early even to speculate about the possible impact of the new search experience on SEO and site traffic (SGE is currently available only for testing in the U.S., by signing up for Google’s Search Labs program), but the international community is already buzzing.

Initial reactions range between curiosity and concern: many see Search Generative Experience snapshots as a rather aggressive evolution of featured snippets, with longer information presented in a conversational tone and style, and with links to related content, including transactional content.

And it is precisely the presence of links to other web resources for further reading that has reassured many analysts, especially since early tests of Bard showed a complete absence of references and source citations.

However, there is no shortage of people predicting yet another death of SEO and the end of organic traffic to sites, with Google once again portrayed as a “cannibal” that gobbles up every visit and all of users’ time online, offering them a complete, closed experience summed up by the infamous “zero-click searches” that Google is accused of fostering.

To be sure, Google SGE appears to be a different search experience from what we have seen on Google so far, although it retains some basic features of Google Search. We can only wait for its actual debut to learn more about it and to find out whether, how, and how much it will revolutionize the way we search and, as a consequence, SEO and our work too.