At Google I/O 2022 the latest news on the search system (and SEO)

There were many, many announcements across the boundless fields in which Big G’s presence is felt (the famous Google ecosystem, which covers practically every aspect of life, digital and otherwise, as we can see in this list of 100 things announced!), but nothing particularly surprising or revolutionary for Search and hence for SEO. Now that the curtain has come down on Google I/O 2022, we can take an overview of this year’s event and of the new features that may be coming to the search engine, so that we can prepare ourselves and our plans to intercept them.

Google I/O 2022, Sundar Pichai’s speech

Perhaps a further revolution for the search engine was impossible so soon after the one anticipated at Google I/O 2021, when the reveal of Google MUM and of the applications of artificial intelligence systems (also) to Search put the spotlight on Big G’s most famous product. Still, probably no one expected Search to have so little presence at the event dedicated to webmasters and web developers, which is a real showcase for the Californian company (and which owes its name to the initials of Input and Output).

In any case, there was no shortage of points of interest within Google I/O 2022, which concern (again) the applications of technology to Search in order to be able to guarantee what, as Google and Alphabet CEO Sundar Pichai confirms, remains Google’s fundamental mission, today as about 24 years ago: ‘to organise the world’s information and make it universally accessible and useful’.

Information and knowledge at the heart of the process

Technology is playing a central role in driving Mountain View towards this goal and ‘the progress we’ve made is due to our years of investment in advanced technologies, from AI to the technical infrastructure that powers everything,’ Pichai says in his introductory speech.

There are two key ways in which the company is advancing ‘this mission: by deepening our understanding of information, so we can turn it into knowledge, and improving the state of computing, so that knowledge is easier to access, no matter who you are or where you are’.

Pichai then goes on to recount some of the latest achievements of the search engine, which ‘during the pandemic, has focused on, among other things, providing accurate information to help people stay healthy’ (and in the past year, people have used Google Search and Maps nearly two billion times to find a place where they could get a vaccine against COVID), expanded ‘flood forecasting technology to help people stay safe in the face of natural disasters’ (and during last year’s monsoon season, these flood warnings alerted more than 23 million people in India and Bangladesh, supporting the timely evacuation of hundreds of thousands of people), collaborated with the government in Ukraine ‘to rapidly deploy air raid warnings’ (sending hundreds of millions of warnings to help people get to safety).

What is coming for Search

Pichai also quickly presented some of the innovative features and upgrades that are coming to Google Search globally; in particular, the company’s CEO mentioned the addition of 24 more languages to Google Translate (the real-time translation system that ‘is a testament to how knowledge and information technology come together to improve people’s lives’) and the ongoing improvements to conversational AI.

The conversational AI in question is LaMDA 2, the second version of Google’s generative language model for conversational applications, capable of conversing on any topic, which thus becomes ‘our most advanced conversational AI ever’.

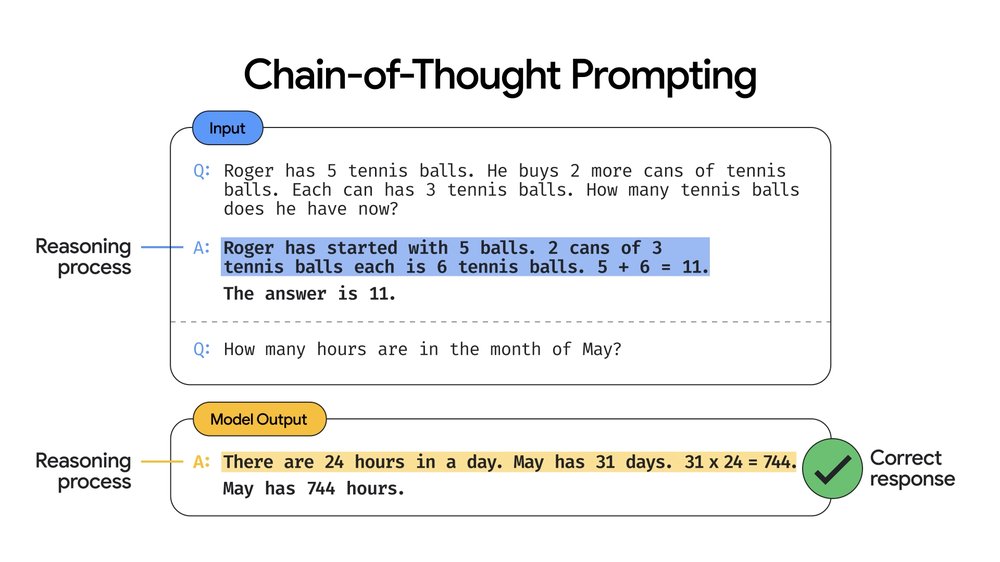

Joining LaMDA 2 is the Pathways Language Model, or PaLM for short, a new model designed to explore other aspects of natural language processing and AI. It is Google’s largest model to date, with 540 billion parameters, and according to Pichai it “demonstrates revolutionary performance on many natural language processing tasks, such as generating code from text, answering a mathematical word problem, or even explaining a joke”. This is thanks to a technique called chain-of-thought prompting, which has the model work through a multi-step problem as a series of intermediate steps and increases the accuracy of the answer by a wide margin.
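To make the idea of chain-of-thought prompting more concrete, here is a minimal sketch in Python. It only builds the text of a prompt and does not call PaLM or any Google API. The names `Exemplar` and `build_prompt` and the arithmetic example are hypothetical, invented purely for illustration. The point is the difference between a worked example that shows only the final answer and one that spells out the intermediate steps first.

```python
from dataclasses import dataclass


@dataclass
class Exemplar:
    """One worked example shown to the model (hypothetical structure)."""
    question: str
    reasoning: str  # intermediate steps, written out in plain language
    answer: str


def build_prompt(exemplars, new_question, chain_of_thought=True):
    """Assemble a few-shot prompt as plain text.

    With chain_of_thought=True each exemplar shows its intermediate
    steps before the final answer; otherwise only the answer is shown.
    """
    blocks = []
    for ex in exemplars:
        if chain_of_thought:
            reply = f"{ex.reasoning} The answer is {ex.answer}."
        else:
            reply = f"The answer is {ex.answer}."
        blocks.append(f"Q: {ex.question}\nA: {reply}")
    # The new question is left open so the model continues from "A:".
    blocks.append(f"Q: {new_question}\nA:")
    return "\n\n".join(blocks)


# Invented example data, not taken from Google's materials.
EXEMPLARS = [
    Exemplar(
        question=(
            "A shop has 5 boxes with 4 pens each and sells 6 pens. "
            "How many pens are left?"
        ),
        reasoning=(
            "5 boxes of 4 pens are 5 * 4 = 20 pens. "
            "After selling 6, 20 - 6 = 14 remain."
        ),
        answer="14",
    )
]

if __name__ == "__main__":
    question = (
        "A bakery makes 3 trays of 12 rolls and gives away 5. "
        "How many rolls are left?"
    )
    print(build_prompt(EXEMPLARS, question))
```

Passing `chain_of_thought=False` produces the same prompt without the reasoning sentence, which is a simple way to compare the two styles side by side.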

Lastly (still limiting ourselves to applications in Search and the possible effects on SEO), the Google CEO foresees increasingly intense use of augmented reality, also through the Google Lens app which, according to data revealed during the event, is now used more than 8 billion times a month worldwide, practically triple last year’s figure.

Multisearch, the new system for searching around us

And it is precisely through Lens that Multisearch works, which is perhaps the most important announcement for the search segment that came at Google I/O 2022 (although, in reality, the system has already been presented in recent weeks and a further update was mentioned at the event).

Multisearch is a special search function that, through the use of the Lens app and the smartphone’s camera, allows searches to be performed from the framed image, while adding an additional text query to refine the search. Google will then use both the image and the text query to show the results of the visual search.

With Multisearch Near Me there is an extra step: it will be possible to narrow those image and text queries to local results, searching for products or other things via the camera, for instance to find a nearby restaurant offering a specific dish.

From an SEO perspective, this feature represents a new way in which users/consumers can come into contact with our content: in addition to classic desktop search results, mobile searches, voice searches, and image searches, we now also have to ‘care’ about multi-search, which also confirms that visual resources are already central to intercepting people and driving them to click – and this is now even more important for local businesses.

Combined image and text queries, now for local searches too

According to Prabhakar Raghavan, the company’s Senior Vice President, with Multisearch ‘we’re redefining Google Search once again, combining our understanding of all types of information (text, voice, visual and more), so that you can find useful information about everything you see, hear and experience, in the most intuitive ways’.

Raghavan briefly describes the possible applications of this system which, in its Near Me version, will be available globally by the end of the year in English and will expand to more languages over time: ‘You can use an image or screenshot and add “near me” to see the options of restaurants or local retailers that have the clothing, household products, and food you are looking for’. For example, he continues, “suppose you see a colourful dish online that you’d like to try, but you don’t know what’s in it or what it’s called: when you use Multisearch to find it near you, Google scans millions of images and reviews posted on web pages and by our community of Maps contributors to find results on nearby places that offer the dish so you can enjoy it yourself”.

A further update, called ‘scene exploration’, is coming soon: it will improve Google’s visual search by recognising not only the objects captured in a single frame but the entire scene. Using Multisearch, we will be able to pan the smartphone’s camera and instantly obtain information on multiple objects in a wider scene.

This scene exploration ‘is a powerful step forward in our devices’ ability to understand the world the way we do’ and will allow people to ‘easily find what they are looking for’, concludes Google’s Senior Vice President.

Applications announced for Search (and for SEO)

Moving on to more practical matters, let us see what new developments directly affect the world’s most widely used search engine.

The first announcement concerns the delicate issue of privacy: Google is about to introduce a specific tool in Search to manage one’s online presence and help each person easily control ‘whether your personally identifiable information can be found in search results, so you can be more comfortable about your online footprint’, clarifies Danielle Romain, VP of Trust. This tool is necessary because “for many people, a key element in feeling safer and more private online is having more control over where their sensitive and personally identifiable information can be found”.

This tool will make the process of removing Google results that contain personal contact information (such as phone number, home address or email address) even easier, and will be available in the Google app or by clicking on the three dots next to individual Google Search results. When Google receives a removal request, it will evaluate all the content of the web page to verify the case and to avoid restricting the availability of other widely useful information (e.g. in news articles). In addition, ‘of course, removing contact information from Google Search does not remove it from the Web, which is why it might be useful to contact the hosting site directly if you feel comfortable doing so,’ Romain suggests.

“Another important part of online safety is trusting the information you find,” says Nidhi Hebbar, Product Manager at Google, in presenting a further upgrade of the About This Result function: launched in February 2021, this tool is available in English on individual search results and serves to help users discover the general context of a website before they even visit it.

While waiting for the extension to other languages, which should arrive by the end of the year according to Hebbar, the function has been used more than 1.6 billion times and is now landing in other parts of the ecosystem. Specifically, when users view a web page in the Google app, with a simple tap they will be able to see a tab with information about the source, including a brief description, what the site says about itself, and what others on the web say about it, so that each person has ‘the tools to evaluate information wherever they are online, not just on the search results page’.

In practice, as the gif shows, with this update we will be able to find useful context on a source even when we are already on its website, whichever website that is.

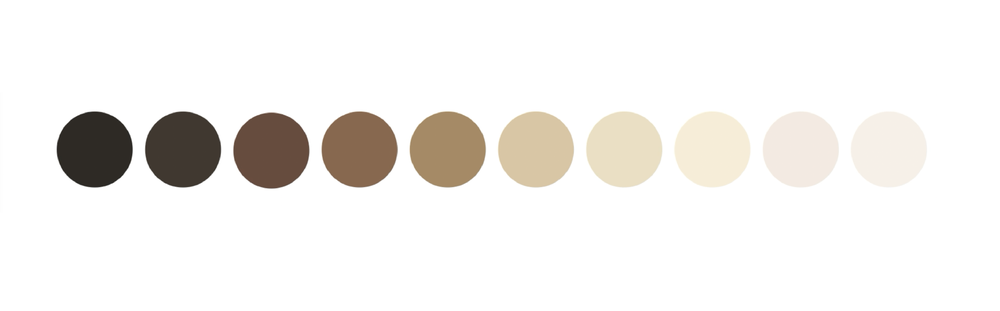

Also of interest is Search’s new skin tone scale, designed together with Dr. Ellis Monk (Harvard professor and sociologist) to make Google’s products more inclusive of the actual spectrum of skin tones we see in our society. The new tool is called the Monk Skin Tone Scale and is a 10-tone scale that will be incorporated into various Google products in the coming months, with the goal of helping Big G “and the tech industry at large create more representative datasets so that we can train and evaluate AI models for equity, resulting in features and products that work better for everyone, of all skin tones,” as Tulsee Doshi, Head of Product for Responsible AI, explains.

In particular, the scale will be used by Google to evaluate and improve the models that detect faces in images, but also to ‘make it easier for people of all backgrounds to find more relevant and useful results’ in Search and to improve Google Photos (with special filters designed to work well on all skin tones and evaluated using the MST scale).

Let us then close with an announcement of an even more functional nature: soon, in the Coverage (Index) section of Google Search Console, there will be a video page indexing report, which will show a summary of all video pages found by Google during the crawling and indexing of the website, with the classic information concerning the status of the pages and the presence of any errors.