Artificial Intelligence and Google: AI applications in Search

It was 2015 when Google announced RankBrain, the first artificial intelligence system applied to Search. In the years since, the technological work has continued, mainly to improve language understanding and, consequently, to show search results in the SERP that are closer to people’s real needs, as we reported a few days ago when presenting the latest developments of LaMDA. Now Google itself takes stock of this evolution and shares information on how AI applied to Search “translates into relevant results”, and therefore on where and how such systems are actually used.

Artificial Intelligence in Google Search

It is Pandu Nayak, Google Fellow and Vice President of Search, who describes in an in-depth article on The Keyword “how AI provides excellent search results”, helping Google understand what users are looking for by improving its understanding of language.

Before 2015, when it did not yet have advanced artificial intelligence, Google’s systems “simply looked for the corresponding words”, recalls Nayak, who mentions the case of misspellings in particular: when searching for pziza, “unless there was a page with that particular spelling error, we probably would have to repeat the search with the correct spelling to find the information we were interested in”. Over time, however, Google learned to code algorithms that find classes of patterns, such as popular spelling errors or potential typos from nearby keys. Now, with advanced machine learning, its systems can recognize more intuitively when a word does not look right and suggest a possible correction.
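To make the idea of pattern-based spelling correction concrete, here is a minimal Python sketch. It is not Google’s method: it assumes a tiny hypothetical vocabulary (`VOCABULARY`) and uses a standard edit distance that counts swapped adjacent letters as one error, which is one simple way to catch a typo like pziza.

```python
def edit_distance(a: str, b: str) -> int:
    """Optimal string alignment distance: insertions, deletions,
    substitutions and swaps of two adjacent letters each cost 1."""
    rows, cols = len(a) + 1, len(b) + 1
    d = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        d[i][0] = i
    for j in range(cols):
        d[0][j] = j
    for i in range(1, rows):
        for j in range(1, cols):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            d[i][j] = min(
                d[i - 1][j] + 1,         # delete a letter
                d[i][j - 1] + 1,         # insert a letter
                d[i - 1][j - 1] + cost,  # substitute a letter
            )
            # Two adjacent letters typed in the wrong order.
            if i > 1 and j > 1 and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]:
                d[i][j] = min(d[i][j], d[i - 2][j - 2] + 1)
    return d[rows - 1][cols - 1]


# Hypothetical vocabulary, invented for this illustration only.
VOCABULARY = ["pizza", "plaza", "piazza", "pasta"]


def suggest(word, vocabulary=VOCABULARY, max_distance=2):
    """Return the closest known word, or None if nothing is close enough."""
    best = min(vocabulary, key=lambda known: edit_distance(word, known))
    return best if edit_distance(word, best) <= max_distance else None


print(suggest("pziza"))
```

Here pziza is one letter swap away from pizza, so pizza is the suggestion. A hand-coded rule like this covers only one class of error, which is why the article stresses the move to machine learning systems that generalize beyond fixed patterns.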

These AI enhancements to Search systems make it possible to constantly refine the understanding of what the user is looking for. This is a decisive factor given that “the world and people’s curiosities are constantly evolving”, with “15% of the searches we see every day that are completely new”.

The real applications of AI systems

In the same days, an article by Barry Schwartz on this topic also appeared, reporting information provided by Google’s public liaison Danny Sullivan, which reveals that, in summary, four technologies are currently in operation in Search.

To be precise, RankBrain, neural matching, and BERT are used in Google’s ranking system for many, if not most, queries, and they try to understand the language of both the query and the content being ranked. Then there is MUM, which for now is not used for ranking purposes, but only for COVID vaccine naming and to power the related topics in video results.

What are the AI applications currently in use on Google

As Nayak explains, we should not assume that a more modern technology necessarily retires the previous one. Google has developed hundreds of algorithms over the years, always with the aim of providing relevant search results, and today it uses hundreds of algorithms and machine learning models, old and new, that work together. This is because “each algorithm and model has a specialized role and is activated at different times and in distinct combinations to help deliver the most useful results”, although inevitably some of the more advanced systems play a more important role than others, like the four mentioned in the article:

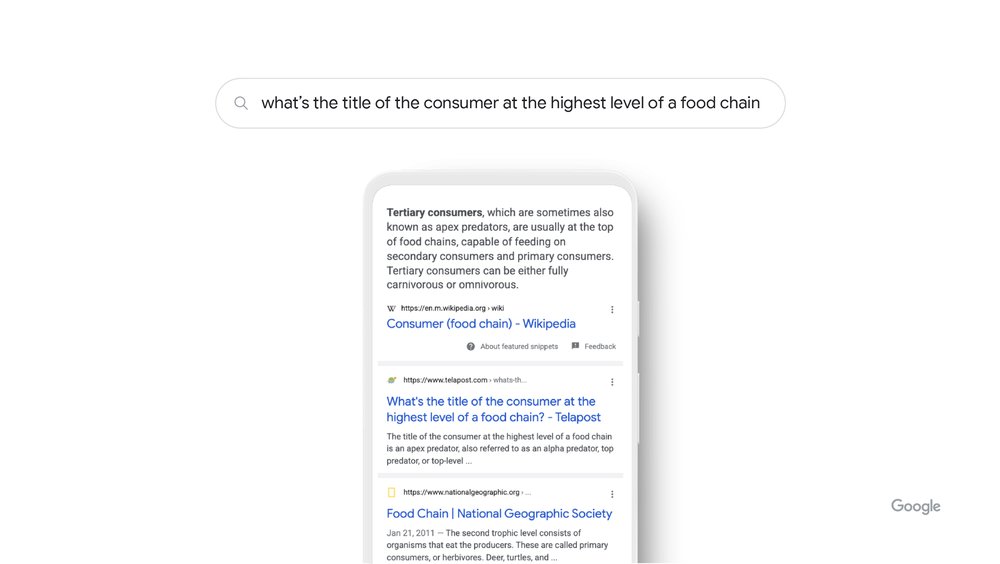

- RankBrain: a smarter ranking system

We start, as mentioned, with RankBrain, the first deep learning system applied to Search. At that time, Nayak says, “it was revolutionary not only because it was our first system of artificial intelligence, but because it helped us understand how words relate to the concepts of the real world”, something that is instinctive for humans but a complex challenge for a computer.

To explain how this system works in practice (it can take a broad query and better define how that query connects to real-world concepts), Nayak gives the example of the search “what is the title of the consumer at the highest level of a food chain”. By seeing those words on various pages, Google’s systems learn that the concept of a food chain has to do with animals, not with human consumers. By understanding these words and matching them to their related concepts, RankBrain understands that the user is looking for what is commonly called an “apex predator” and thus provides the most relevant result.

As the name suggests, RankBrain is used to rank, that is, to decide the best order for the top search results. Despite its age, it “continues to be one of the leading artificial intelligence systems that power Search today”, used in many queries and in all languages and regions.

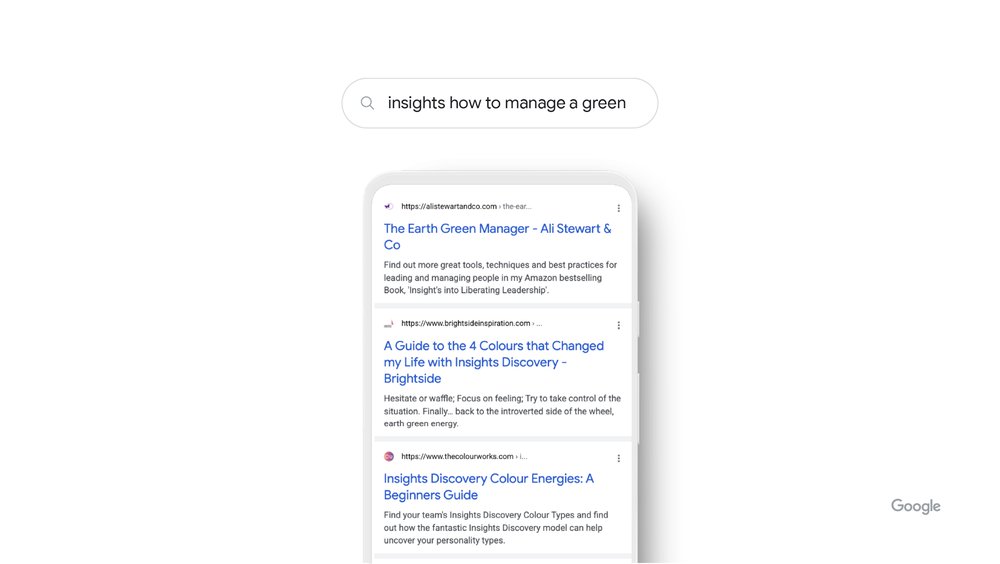

- Neural matching: a sophisticated retrieval engine

Neural matching was the next AI system Google released for Search, tested initially in 2018 and then extended to local search results in 2019. Neural networks are today the basis of many modern artificial intelligence systems, and Google had already glimpsed their potential to refine its understanding of how queries relate to pages by evaluating fuzzier representations of the concepts in queries and pages.

Specifically, neural matching helps Google understand how queries relate to pages by examining the entire query or page content (and not just the keyword string) in the context of that page or query, offering Google a broader understanding of the concepts and thus expanding the amount of content that Google is able to search for.

The clarifying example is “insights how to manage a green”, which might sound strange even to a human being. Thanks to neural matching, however, Google looks at the broader representations of concepts in the query (management, leadership, personality and more) and can work out that the user is looking for management guidance related to a popular color-based personality guide.

Today, neural matching is part of the ranking algorithm and is used in many queries, if not most, for all languages, in all regions and in most industries. Specifically, it helps Google rank search results: a better understanding of the broader concepts represented in a query or page helps “cast a wide net when we scan our index for content that may be relevant to your query”, so neural matching plays a key role in the way Google retrieves relevant documents from a huge, ever-changing flow of information.
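The difference between matching keywords and matching concepts can be sketched in a few lines of Python. This is a toy illustration, not Google’s system: the two documents, the three concept dimensions (management, personality, gardening) and every number in the vectors are invented by hand, whereas a real neural model would learn such representations from data.

```python
import math
import re

QUERY = "insights how to manage a green"

# Hand-made concept vectors: [management, personality, gardening].
# All values are assumptions made up for this example.
QUERY_VECTOR = [0.7, 0.6, 0.1]

DOCS = {
    "personality_guide": {
        "text": "Leading people with a green personality profile",
        "vector": [0.8, 0.9, 0.0],
    },
    "lawn_care": {
        "text": "Lawn care how to manage a green putting surface",
        "vector": [0.3, 0.0, 0.9],
    },
}


def tokens(text):
    """Lowercased set of words in a text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def keyword_overlap(query, text):
    """Number of query words that literally appear in the text."""
    return len(tokens(query) & tokens(text))


def cosine(u, v):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    return dot / (norm_u * norm_v)


def best_by_keywords():
    return max(DOCS, key=lambda name: keyword_overlap(QUERY, DOCS[name]["text"]))


def best_by_concepts():
    return max(DOCS, key=lambda name: cosine(QUERY_VECTOR, DOCS[name]["vector"]))


print("keyword match picks:", best_by_keywords())
print("concept match picks:", best_by_concepts())
```

With these made-up inputs, literal word overlap favors the lawn care page because it shares more words with the query, while the concept comparison favors the personality guide, which is the reading described in the example above.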

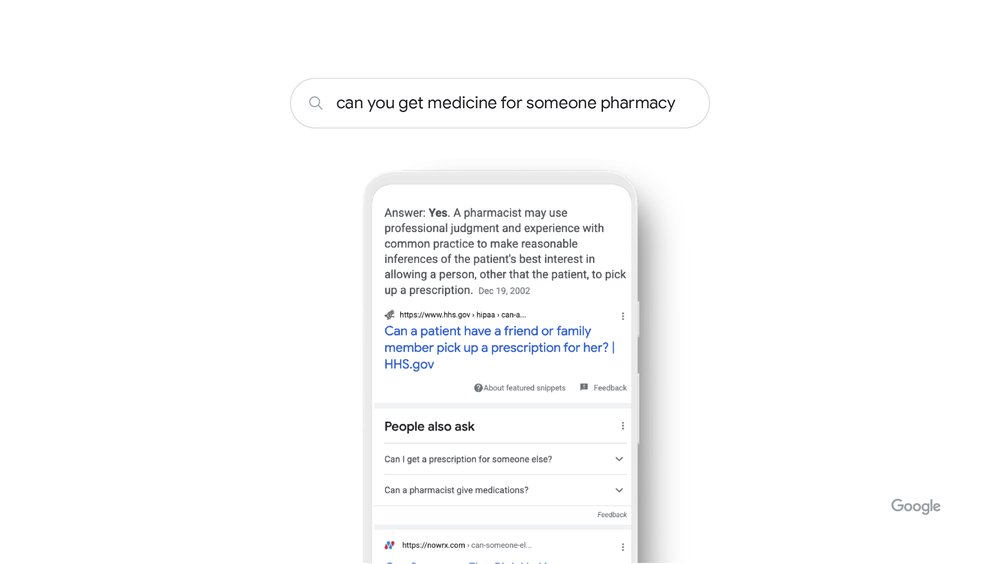

- BERT: a model for understanding meaning and context

2019 saw the debut of Bidirectional Encoder Representations from Transformers, or simply BERT, a neural network-based technique for natural language processing pre-training. It represented a huge change in natural language understanding, helping Google understand how combinations of words express different meanings and intentions.

Instead of simply looking for content that matches individual words, BERT understands how a combination of words expresses a complex idea. It understands the words in a sequence and how they relate to each other, taking into consideration all the words in the query, even apparently small ones that “can have great meanings”. In practice, it allows Google to understand how words can change the meaning of a query when used in a particular sequence.

For the query “can you get medicine for someone pharmacy”, BERT understands that we are trying to figure out whether we can pick up medicine for someone else. Before this technology, Google instead ignored the preposition and mostly returned results on how to fill a prescription (how to get medicine).
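A short Python sketch shows why word order matters. It does not implement BERT in any way: it only compares two representations of a query, an unordered bag of words and an ordered sequence. The second query, `QUERY_B`, is a variation invented for this illustration.

```python
from collections import Counter

QUERY_A = "can you get medicine for someone pharmacy"
# Hypothetical variation: same words, different order, different meaning.
QUERY_B = "can someone get medicine for you pharmacy"


def bag_of_words(query: str) -> Counter:
    """Word counts only; the order of the words is discarded."""
    return Counter(query.lower().split())


def word_sequence(query: str) -> tuple:
    """The words in the order they were typed."""
    return tuple(query.lower().split())


same_bag = bag_of_words(QUERY_A) == bag_of_words(QUERY_B)
same_sequence = word_sequence(QUERY_A) == word_sequence(QUERY_B)

print("identical as bags of words:", same_bag)
print("identical as sequences:", same_sequence)
```

A system that looks only at which words are present cannot tell these two queries apart, even though they ask who is collecting the medicine for whom. Telling them apart requires a model that reads the words in sequence, which is the capability the article attributes to BERT.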

At the time of its launch, BERT was used in 10% of all queries in English, but was expanded to more languages and soon used in almost all queries in English; today it is used in most queries and is supported in all languages.

According to Nayak, BERT excels “in two of the most important tasks in delivering relevant results: ranking and retrieval”. Based on its complex understanding of language, BERT can rank documents very quickly by relevance, and its training has also improved legacy systems, making them more useful in retrieving relevant documents for ranking. Yet BERT never works alone: like all other systems, it is part of a set of technologies that work together to deliver high-quality results.

- MUM: from language to understanding information

More recent is the introduction of Google MUM, a technology announced at Google I/O in May 2021 and the latest AI milestone in Search. A thousand times more powerful than BERT, MUM is able to both understand and generate language (so it can be used to understand variations in new terms and languages). It is trained in 75 languages and on many different tasks simultaneously, which allows it to develop a more complete understanding of information and world knowledge. MUM is also multimodal, meaning it can understand information across multiple modalities, such as text and images, with more to come in the future.

Unlike the previous cases, Nayak points out that MUM is not used for ranking purposes in Google Search at this time, and therefore does not serve to rank results and improve their quality as the RankBrain, neural matching and BERT systems do.

However, this technology supports all languages and regions, and Google has tested some of its potential to improve searches for information on COVID-19 vaccines and to generate suggestions for related topics in video results. A possible future application anticipated by the article is “to offer more intuitive ways to search using a combination of text and images in Google Lens”, and the progressive rollout of MUM-based experiences in Search will make it possible to move “from an advanced linguistic understanding to a more complex and articulated understanding of world information”.

Artificial intelligence and Google updates

These are the main uses that Google makes of the artificial intelligence systems applied to its SERPs, which serve primarily to “understand the language, including the query and the potentially relevant results” and “are not designed to act in isolation to analyze only a query or the content of a page”, but precisely to have a general understanding of both aspects.

And then there are the core updates, as Schwartz reminds us: the periodic updates that serve to ensure that the results provided by the search engine are always relevant and of high quality. As revealed by Google, in addition to the three major AI mechanisms applied to Search (RankBrain, neural matching and BERT), “there are other elements of artificial intelligence that can affect the core update” in areas that do not involve these three systems, and therefore different types of AI operate simultaneously.

In addition, there is a world outside of Search (local search, images, shopping and other verticals of the Big G ecosystem) where other separate, specialized technologies are used.

What these AI systems mean for SEO

Knowing that artificial intelligence is increasingly central to Google and Search should not make us fear for the future of SEO, and not just because John Mueller reassured us long ago that “SEO will not become obsolete despite AI and machine learning”.

These systems, in fact, serve mainly to improve the way Google itself understands queries and site content so that it can better match user intent, and ultimately they bring the search engine closer to speaking our own language. This should actually help our work in content creation, recalling the first practical advice for SEO copywriting, which is to “write for humans”: if we intercept the search intent behind the query and if people understand what we write, the algorithms and artificial intelligence will also understand it and reward the pages with the right ranking.

And so, even if there are no SEO optimization techniques for BERT or other AI systems, all the classic work we do to improve our sites and pages, both in terms of content and on the technical front, is still worthwhile.