EEAT in the Google-AI era: today, the game is played on trust

Write better. Show who you are. Cite reliable sources. For years, you’ve viewed EEAT as a simple editorial criterion, but in reality it’s Google’s most successful semantic packaging strategy: a reassuring acronym that gives a name to thousands of complex technical signals that would otherwise remain indecipherable.

Today, search has changed, and the evaluation criteria have shifted. Artificial intelligence has imposed a new validation protocol: generative engines and systems like AI Overviews must decide whether your knowledge is reliable enough to power synthetic responses. Signals similar to EEAT become the filter that keeps machines from hallucinating or drawing on unreliable data. If your content doesn’t convey truth, external recognition, thematic consistency, and verifiable relationships, AI won’t select you as a source for synthetic answers and will relegate you to background noise. Before, the goal was to secure a ranking; today, you work to achieve visibility in the answers.

What is EEAT

EEAT is the evaluation system described by Google to assess the quality, reliability, and relevance of content in its index.

Originating within the Search Quality Rater Guidelines — the operational document intended for human raters who analyze samples of results to provide qualitative feedback to the search engine — the acronym identifies four key parameters: Experience, Expertise, Authoritativeness, and Trustworthiness, which serve to distinguish an authoritative source from generic or potentially harmful content.

It is essential to clarify a methodological point: EEAT is not a standalone algorithm, a direct ranking factor, or a numerical score assigned to pages. The work of quality raters does not influence any single site’s ranking in real time; instead, it produces the data needed to train and calibrate automated systems. Even so, Google systematically prioritizes results that demonstrate strong signals of reliability, and recent industry studies indicate that EEAT factors and the weight of your brand are key differentiators for reaching the top of Google, and staying there, in the era of generative search.

The framework acts as a model that guides the interpretation of quality, translating into signals distributed across content, reputation, and technical structure. Today, EEAT is no longer just reference material for raters: it has also become the validation interface for generative search systems and answer engines, which use similar signals to reduce the risk of hallucinations and to select the sources they cite.

What EEAT Means for Google (and for Your Brand)

Google has no sensor for wisdom or truth, which are human and subjective values, so it estimates authority through technical alternatives known in statistics and computer science as proxies (measurable variables that substitute for ones that cannot be observed directly).

The bridge between human perception of quality and its algorithmic measurement is built by the work of quality raters, who use the EEAT framework to produce qualitative judgments that feed into the calibration processes of automated systems. Through this systematic analysis, Google identifies recurring patterns in sources deemed reliable and transforms them into a reference framework for interpreting the credibility of a page, an author, and a site as a whole. This is a model that operates at a systemic level and translates into a network of signals distributed across content, reputation, and infrastructure.

Google’s systems validate this network with quantitative data: the persistence of the entity alongside a specific topic, the stability of its nodes in the Knowledge Graph, the volume of citations in established knowledge bases, and the historical quality of its backlink profile. These data are the mathematical surrogates of trust, and EEAT is the commercial interface through which Google packages these complex technical signals.

Understanding this shift transforms your strategy: you don’t need to optimize your site to “appear” trustworthy in the eyes of a human rater, but to provide crawlers with data that the machine interprets as evidence of reliability.

While the goal of traditional search was to rank results in a list, answer engines and generative engines (the systems targeted by AEO, Answer Engine Optimization, and GEO, Generative Engine Optimization) use EEAT-like signals as a safety filter. These systems have no internal “EEAT algorithm,” but they apply similar, overlapping criteria that work like a firewall: authority becomes the technical guarantee that lets the machine cite you without risking incorrect or dangerous answers. A language model obviously doesn’t read Google’s guidelines. However, many retrieval-augmented generation (RAG) pipelines draw their candidate sources from a search engine index, so they inherit, in some form, the quality filtering applied there to weed out junk data.

If your brand doesn’t emit verifiable signals of truth, language models classify you as a “hallucination risk.” Are you a responsible entity or just background noise? The answer depends on how you transform your reputation into processable data.

The four cornerstones of the framework

Experience, Expertise, Authoritativeness, and Trustworthiness form the reference model that Google has established to guide human judgment about the credibility of content.

The context is clear from the outset: Google must distinguish reliable information from inaccurate, manipulative, or potentially harmful content, going beyond the automated analyses of its algorithms. To do so, it has defined a conceptual framework that allows evaluators to analyze four complementary dimensions.

- Experience refers to direct engagement with the subject matter. It is not merely personal storytelling, but evidence of real-world contact with a field: use of a product, professional practice, or concrete experience gained over time. You demonstrate that you have lived through the subject matter through documentary evidence, original photos, and personal perspectives that no artificial intelligence can replicate.

- Expertise indicates the competence demonstrated in the treatment of the subject. This includes thematic depth, terminological accuracy, staying up-to-date, and the ability to address a topic with rigor and consistency. It emerges through the accuracy of data, the use of specialized language, and a deep, vertical mastery of the subject.

- Authoritativeness concerns external recognition and quantifies the reputation of your byline or brand. It is based on accolades, relationships with other relevant entities, and citations and mentions you receive from other reputable sources in your industry. It is the measure by which a source is considered a reference in a given domain.

- Trustworthiness represents the overall level of reliability. It focuses on the accuracy of information, the transparency of your sources, the technical security of the site, and the consistency between what is stated and what is published. It is the parameter that determines whether you can earn users’ trust in the long term.

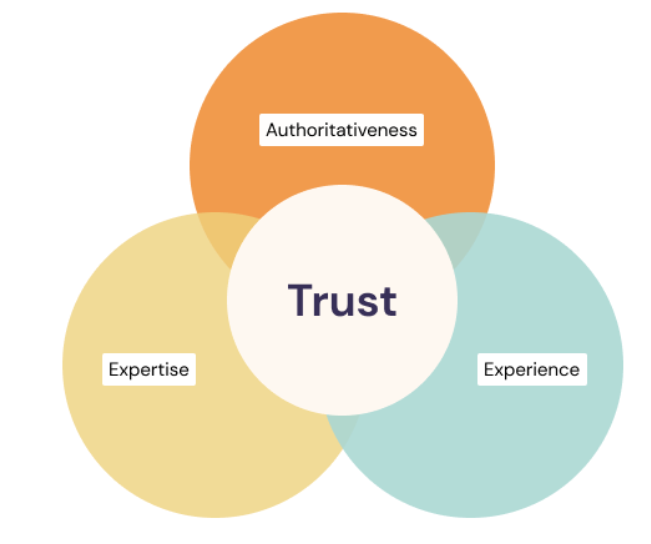

Trust at the Center

The guidelines clarify that Trust is the most significant parameter in the system.

Google has been explicit: without a solid foundation of trust, your signals of expertise and authority are irrelevant. You may be the world’s leading expert on a topic, but if your site conveys signals of poor transparency, technical instability, or conflicts of interest, the algorithmic verdict will be unforgiving. Trust is the ultimate goal; the other pillars are supporting evidence designed to validate it.

The level of Trust required is dynamic and depends on the criticality of the search:

- An e-commerce site establishes trust through HTTPS protocols, certified payment systems, reliable customer service, and transparent return policies.

- A review site undermines its own credibility if it fails to disclose commercial ties or does not demonstrate actual use of the product.

- A YMYL informational page meets the trust threshold only if its claims align with expert consensus and are supported by verified sources.

In this hierarchy, experience provides lived perspective, expertise ensures theoretical accuracy, and authority measures external recognition. However, these three elements serve only to demonstrate that your content is trustworthy and tied to a traceable entity.

You must secure your identity before expecting visibility: trust is not earned through words, but through the consistency of the signals sent to crawlers. If this is missing, every effort in content marketing is in vain.

The Birth and Evolution of EEAT

EEAT is an adaptive paradigm, not a static concept. Its original purpose was to give Google’s search quality raters a framework for judging the usefulness and reliability of a page, initially based on human-centric quality metrics.

Google’s algorithm constantly evolves to deliver the best possible experience and to meet our expectations when we search. In the same way, the paradigm has had to adapt to the market, to technological progress, and to machines’ growing capacity for semantic analysis.

The first explicit, official reference to these concepts lacks the “E” for Experience, which was added later. In 2014, the acronym that appears for the first time in the rater guidelines is “E-A-T,” standing for Expertise, Authoritativeness, and Trustworthiness. Raters assess quality using these concepts as a reference, and their judgments help train and calibrate machine learning systems that learn to replicate that judgment across billions of pages. Nothing is random: at this stage, Google is addressing a specific problem. The growing volume of online content makes an evaluation based solely on links and semantic relevance insufficient, and a quality standard capable of distinguishing credible sources from weak ones is needed.

The transition from theory to practice took place in August 2018 with what was immediately dubbed (and has gone down in history despite subsequent denials and clarifications from Google) the “Medic Update,” the core update that primarily penalized health and finance websites lacking verified authority—for example, health and wellness pages offering medical advice without the author demonstrating adequate professional qualifications. The fluctuations observed, especially in YMYL sectors, revealed a clear trend: the stability of visibility was beginning to be linked to the systemic perception of reliability.

In December 2022, the acronym gained a second E, for Experience. This addition reflects the strategic need to prioritize real-world experience over automated text generation. Demonstrating theoretical expertise is no longer enough; a direct connection to the subject matter becomes essential. Google shifts the focus to physical action and firsthand knowledge, so you must demonstrate that you have tried a product or tested a procedure. Experience is the only antidote to the proliferation of synthetic content that lacks practical verification. The arrival of Experience roughly coincides with the rise of generative AI technologies and underscores the importance of content created through human interaction with the real world: content that provides added value a machine, limited to its training data, can never produce.

The Transition to Entity Analysis

Let’s revisit an important point that has often been underestimated over the years: Google does not merely index pages, but organizes information around entities.

The Knowledge Graph has made this transition evident since as early as 2012. Since then, the evolution of ranking systems has progressively integrated entity-based signals—relationships between subjects, recurring co-occurrences, citation patterns, and brand recognition as a stable information node.

As long as search remained document-based, the focus of evaluation was the individual page—semantic relevance, structure, links, and behavioral signals. With EEAT, the focus expands: author, brand, organization, subject domain, and external reputation are viewed as information nodes with a history, relationships, citations, and thematic continuity over time. This means that content may be relevant to a query, but the system may assign different weight to the source producing it based on signals accumulated over time.

Raters must analyze who the author is, what the site’s reputation is, what type of expertise is required for that topic, and what independent sources say. This process calibrates models that recognize complex patterns of reliability, in which the system no longer asks only “is this page relevant?”, but “is this entity credible in this context?”.

In our studies of YMYL sectors—such as the analysis dedicated to the health sector—a recurring finding emerges: domains that maintain stable visibility over time demonstrate high thematic consistency and a network of citations from qualified sources. The variable is not only the quality of individual content, but the editorial continuity and the recognizability of the brand as an information source. The same principle also applies to visibility analysis for Google: the weight of the brand—understood as an entity that is searched for, cited, and linked to—increasingly influences the stability of rankings.

With the introduction of AI Overviews and generative systems, this aspect becomes even more evident, because the model must choose which entities to consider reliable even before combining information.

The EEAT framework thus marks the difference between document-based ranking and generative information selection: between a system where pages compete and one where sources compete.

The historical evolution and the transition from E-A-T to E-E-A-T

Retracing the history of this protocol clarifies its present and helps us anticipate its future. Google has been working on these concepts for a long time, and each update has made the filter more stringent.

- 2014 – The Birth of E-A-T

Google introduces the concepts of Expertise, Authoritativeness, and Trustworthiness for the first time in its Search Quality Rater Guidelines. At this stage, E-A-T primarily served human evaluators (Quality Raters) to manually assess the relevance of results and provide feedback to engineers to calibrate the algorithm. For the few in the SEO community who were aware of its existence, E-A-T was viewed as a guideline for editorial quality.

- 2018 – The Medic Update

The importance of E-A-T began to gain traction in the SEO world following the “Medic Update” in August 2018. Google’s message seems clear: on topics that impact people’s lives (YMYL), certified expertise is a ranking requirement. At this stage, therefore, E-A-T functions as a defense for YMYL sites and a filter against misinformation.

- 2022 – Introduction of Experience

In December 2022, Google added the second “E” for Experience. The update addresses the need to prioritize human-generated content on a web that is increasingly filled with automatically generated text. Google clarifies that, in many contexts, direct experience is as valuable as formal expertise, or even more valuable. Tax advice requires an accountant (Expertise), but a review of business management software requires someone who uses it every day (Experience). This draws the line between derivative information (replicable by machines) and authentic information (based on human experience).

- 2024 and Beyond – The AI Filter

Today, E-E-A-T takes on a new function: it acts as a safety filter for generative responses, even outside of Google. Large language models (LLMs) tend to “hallucinate,” inventing facts. To mitigate this risk, AI systems tend to draw preferentially on sources that exhibit strong E-E-A-T signals, especially when they retrieve live web results.

Why it has caused so much confusion and why, despite everything, it makes sense

The EEAT framework is deliberately broad and, on closer inspection, vague. It is not a metric, it is not an isolated technical factor, it is not a score; there is no measurable “EEAT value” in a report, and there is no single action that can improve the ratings.

It is an interpretive category that summarizes a complex system of evaluations. Until 2018, there was nothing controversial about it; in fact, the concept was mostly ignored and reserved for more technical discussions. The divide emerged when the framework entered the SEO debate, especially after the August 2018 core update, because from that moment on, (E)EAT became the recurring explanation for fluctuations, penalties, and recoveries. Every change in visibility is viewed through that lens.

In practice, EEAT works as a shorthand container for diverse signals: brand reputation, backlink quality, thematic consistency, editorial stability, author transparency, site technical security, and alignment with recognized sources. No single element defines it.

This makes it powerful on a conceptual level and fragile on an operational one.

For years, it has served an almost pedagogical function, offering a common language to discuss quality without delving into the details of algorithmic mechanisms.

The flip side is evident: if everything falls under EEAT, nothing is directly actionable.

Here lies the paradox:

- The framework is declared central.

- There is no numerical indicator.

- There is no specific technical lever.

- The effects often manifest during core updates.

The result is a concept that describes but does not guide.

EEAT became a retrospective diagnosis: if a site grows, it shows signs of quality; if it declines, it likely lacks reliability or authority.

The difficulty was not theoretical. It was practical.

How do you increase authority in a measurable way?

How do you quantify expertise?

What is the required trust threshold for a given sector?

The guidelines offer qualitative criteria. Algorithms operate on distributed signals. Between these two levels lies a space for interpretation that is not always transparent.

Put another way, the vagueness is not accidental. Google manages a system that must evaluate billions of pages across extremely diverse thematic contexts. A rigid, measurable framework would be easy to manipulate. A broad framework, by contrast, lets the evaluation adapt to the nature of the query, the sector, and the level of information risk.

Historically, EEAT has sat within this balance between flexibility and control: not a technical lever, but a conceptual framework that guides the evolution of ranking systems.

EEAT Beyond Google Today

The transformation is complete. In just over a decade, we’ve moved from feedback on a page’s quality and its ability to satisfy a search intent to an evaluation of the data’s origin and traceability. The addition of the second E, Experience, has drawn the line between derivative information, mass-produced by machines, and authentic information rooted in human experience. Where you once had to convince a rater of a text’s quality, today you must also supply the signals that demonstrate that quality to machines.

This is all the more true with the web saturated by content generated by artificial intelligence, which shifts the value of information to its non-replicability. A language model can accurately synthesize the theory behind a topic, but it cannot simulate a lived experience without falling into hallucination. Google rewards those who demonstrate that they have physically or professionally interacted with the subject of the discussion because experience is the only proprietary data that AI cannot clone.

EEAT has thus also “moved beyond” Google. It has become a concept that applies to AI systems that use RAG to select the sources worthy of informing synthetic responses. For a start, many answer engines retrieve candidate sources from a web search index. Google’s own generative features draw on Google’s index, where the EEAT filter operates natively, so to speak.

Above all, AI engines face the same problem as traditional search engines: reducing the risk of contaminating the answer with unverified data. They don’t need ten thousand results for a query; a few indisputable ones are enough. So they apply a preventive filtering process. In effect, they assess whether your entity is cited in authoritative contexts, whether the author has a consistent publishing history, and whether there is documentary evidence of their work over time. In short, they apply EEAT-like criteria automatically. Here too, giving the machine the practical detail, metric, or insight that only a human expert can possess can make all the difference.
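The preventive filtering described above can be pictured as a two-stage step. First, drop candidates whose trust signals fall below a threshold; then keep the few most relevant survivors. The sketch below is a toy illustration under stated assumptions, not how any particular answer engine works. The `Candidate` fields, the caps and weights in `trust_score`, and the `min_trust` and `k` defaults are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    url: str
    relevance: float             # retrieval similarity to the query, assumed in [0, 1]
    authority_citations: int     # hypothetical: citations in authoritative contexts
    author_history_years: float  # hypothetical: length of consistent publishing history
    has_primary_evidence: bool   # hypothetical: original data, case studies, photos


def trust_score(c: Candidate) -> float:
    """Toy composite of the signals named in the text; caps and weights are arbitrary."""
    citations = min(c.authority_citations, 10) / 10
    history = min(c.author_history_years, 5) / 5
    evidence = 1.0 if c.has_primary_evidence else 0.0
    return 0.4 * citations + 0.3 * history + 0.3 * evidence


def select_sources(candidates: list[Candidate], min_trust: float = 0.5, k: int = 3) -> list[Candidate]:
    """Filter out low-trust candidates first, then keep the k most relevant survivors."""
    trusted = [c for c in candidates if trust_score(c) >= min_trust]
    return sorted(trusted, key=lambda c: c.relevance, reverse=True)[:k]


candidates = [
    Candidate("https://expert.example/guide", 0.80, 8, 6.0, True),
    Candidate("https://thin.example/post", 0.95, 0, 0.5, False),
    Candidate("https://clinic.example/study", 0.70, 3, 2.0, True),
]
print([c.url for c in select_sources(candidates)])
```

In this toy model the most relevant candidate, the thin post, is discarded. Relevance only decides the order among sources that have already cleared the trust bar, which mirrors the "few, but indisputable" logic in the text.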

In the past, you worked to “please” Google to avoid traffic drops during core updates (and authority was mainly tied to backlinks, an external and manipulable signal); today you also work to provide machines with the credentials necessary to be extracted and cited. If your signature doesn’t emit signals of truth, you remain invisible not only in traditional SERPs but also in the summarization processes of digital assistants and answer engines.

Translating EEAT into operational architecture: on-page interventions

EEAT makes sense today if you stop viewing it as an abstract editorial parameter and treat it as a technical governance protocol.

Algorithmic invisibility is combated through data certification: your domain must become an archive of certified evidence, where every node acts as documentary proof capable of withstanding the scrutiny of re-ranking systems.

The first step is to split your communication strategy into two tracks. Use metadata to validate the source’s identity and anchor that identity in the Knowledge Graph, while refining the visible structure so that answer engines can extract facts more easily.

Transparency becomes a technical requirement. It removes the ambiguity and anonymity that generative engines interpret as systematic unreliability, which otherwise leads to preventive exclusion from information synthesis processes. Every signal you disseminate online acts as a certification of origin and helps make your brand a responsible entity that provides structural evidence of its expertise.

Building the Author Node and Corporate Transparency

Your visibility depends on your ability to make the responsibility behind every published line tangible. Before training machines through code, build the infrastructure of on-page trust: author pages, corporate references, and editorial policies constitute the anchor points that allow search systems to recognize you as a reliable entity.

Transform the byline at the bottom of an article into a microsite of expertise. The bare minimum that many overlook—the author’s biography—is now the primary tool for demonstrating your actual experience: include explicit references to your professional background, links to external publications, and connections to profiles where the author practices their profession. Provide the necessary depth of information to distinguish the human expert from generic summaries—for example, enrich this section with details on years of experience or projects managed. This allows the search engine to close the entity loop, verifying that the speaker actually possesses the credentials to do so.

Apply the same validation logic to corporate transparency. Manage pages such as “About Us,” “Team,” and “Contact” to make the organization behind the content tangible. Including the physical office address, customer support contact information, and editorial policies conveys a signal of stability that algorithms interpret as a guarantee of reliability. Date management is also a signal of accuracy: including both the publication date and the date of the last update tells the search engine that the content is monitored and reflects the current consensus of the industry. A site that hides the identity of its managers or publishes information without clear time references is systematically perceived as a risk.
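Publication and update dates can be exposed to machines as well as to readers. Below is a minimal sketch, assuming the page uses schema.org `Article` markup in JSON-LD. `datePublished` and `dateModified` are real schema.org properties, and Google's Article structured data documentation recommends them. The helper name `article_dates_jsonld` and the sample values are hypothetical. The guard enforces the obvious consistency rule that an update cannot precede publication.

```python
import json
from datetime import date


def article_dates_jsonld(headline: str, published: date, modified: date) -> str:
    """Build Article JSON-LD carrying both the publication and last-update dates."""
    if modified < published:
        raise ValueError("dateModified cannot precede datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),  # ISO 8601, e.g. "2024-03-01"
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


print(article_dates_jsonld("EEAT in the Google-AI era", date(2024, 3, 1), date(2025, 1, 15)))
```

The dates in the markup should match the dates shown on the page. Markup that contradicts the visible content undermines the very consistency it is meant to signal.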

Visual evidence and personal analysis to generate Information Gain

With the evolution of generative systems, a further level has emerged: Information Gain, a concept also present in Google patents that concerns a piece of content’s ability to add informational value beyond what is already available in the index. In other words: consistency alone isn’t enough; you must contribute distinctive and original elements.

An entity that publishes repetitive material consolidates its presence, but does not necessarily strengthen its authority. Experience and expertise matter; they become your barriers against mass automation. You produce information gain by adding data, details, and perspectives to the index that arise exclusively from your direct contact with the subject matter and shift the value toward evidence of lived experience. Original images, case studies, and personal analyses are unique details that artificial intelligence cannot replicate, as it is limited to reworking pre-existing knowledge.
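The intuition behind information gain can be illustrated with a deliberately simple proxy. Treat each document as a set of normalized claims, then measure the share of a candidate's claims that no already-indexed document contains. This is a set-difference toy, not the method described in Google's patents. The claim strings, and the upstream step that would extract them from text, are hypothetical.

```python
def information_gain_proxy(candidate: set[str], indexed: list[set[str]]) -> float:
    """Fraction of the candidate's claims absent from every indexed document (0.0 to 1.0)."""
    if not candidate:
        return 0.0
    already_known = set().union(*indexed)  # union of all indexed claims; empty if no documents
    novel = candidate - already_known
    return len(novel) / len(candidate)


indexed_docs = [
    {"product X supports USB-C", "product X weighs 1.2 kg"},
    {"product X costs 499 EUR"},
]
rewrite = {"product X supports USB-C", "product X costs 499 EUR"}
field_test = {"product X supports USB-C", "battery lasted 9 h in our test", "hinge loosened after 3 months"}

print(information_gain_proxy(rewrite, indexed_docs))     # 0.0: nothing new
print(information_gain_proxy(field_test, indexed_docs))  # about 0.67: two of three claims are new
```

The rewrite of existing material scores zero however well it is phrased. The field test scores higher because two of its three claims come from direct use, which is the point the text makes about experience.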

Generate new knowledge through action, providing the evidence needed to distinguish your authentic source from the background noise of synthetic content. This tangible manifestation of experience allows response systems to select you as a reliable source: the machine needs your proprietary data to avoid errors in synthesis, and you provide it in the form of irrefutable documentary evidence.

Technical validation and entity disambiguation via schema markup

After building this on-page evidence, implement Schema.org markup to secure communication with the knowledge graph.

Structured data represents the language through which you communicate directly with Google’s “brain”; it is the infrastructure that reduces ambiguity and allows the search engine to map your entity with surgical precision. If Google must choose between two sources, it will select the one that provides explicit clues about its identity and the relationships between authors and content. As the late Bill Slawski argued, search engines lack human common sense: they require precision.

“When you have a person who is the subject of a page and shares a name with someone else, you can use a SameAs property and link to a page about them in a knowledge base like Wikipedia.” In this way, we can “clarify that when you refer to Michael Jackson, you mean the King of Pop, and not the former U.S. Director of National Security”—obviously very different people. Furthermore, “companies or brands sometimes have names they might share with others,” as in the case of the band Boston, “which shares a name with a city.”
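The Boston example translates directly into markup. Below is a minimal sketch, assuming JSON-LD. `MusicGroup`, `City`, `name`, and `sameAs` are real schema.org terms, and the URLs are the public Wikipedia articles for the band and the city. The `same_entity` check is a hypothetical heuristic, and it is not how Google resolves entities. It is there to show that the shared name alone cannot separate the two entities, while the `sameAs` links can.

```python
import json


def entity_node(schema_type: str, name: str, same_as: list[str]) -> dict:
    """Minimal schema.org node whose sameAs links point to an external knowledge base."""
    return {"@context": "https://schema.org", "@type": schema_type, "name": name, "sameAs": same_as}


band = entity_node("MusicGroup", "Boston", ["https://en.wikipedia.org/wiki/Boston_(band)"])
city = entity_node("City", "Boston", ["https://en.wikipedia.org/wiki/Boston"])


def same_entity(a: dict, b: dict) -> bool:
    """Hypothetical heuristic: two nodes match only if they share at least one sameAs URL."""
    return bool(set(a.get("sameAs", [])) & set(b.get("sameAs", [])))


print(band["name"] == city["name"])  # True: the names collide
print(same_entity(band, city))       # False: the sameAs links disambiguate them
print(json.dumps(band, indent=2))
```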

There are specific schema types that act as certificates of authenticity for your organization and for those who write for you.

- Person (Person schema). EEAT starts with the individual. The first thing Google looks for is the authority of the content creator. Don’t just include the author’s name: use the Person schema to list their occupation (hasOccupation), honorifics (honorificPrefix, honorificSuffix), and professional affiliations (affiliation). The key property is sameAs: use it to link the author to their authoritative external profiles (LinkedIn, Wikipedia, professional registries). This allows Google to distinguish your author from namesakes and recognize them as an expert, verified entity.

- Organization (Organization schema). This markup defines the site’s publisher. Provide Google with your company’s exact details: physical location (address), direct contact information (contactPoint), date of establishment, and, most importantly, awards received (award). An organization that openly declares its structure is, by algorithmic definition, more reliable than an anonymous brand. For sites covering sensitive topics, adding the founder and foundingDate properties provides historical context that the algorithm interprets as a signal of stability and reliability over time.

- Author (author property). Within the Article or NewsArticle schema, the author property must point directly to the contributor’s Person schema. This creates an indissoluble link between the value of the article and the authority of its author. If you publish on behalf of the brand, set the author to the Organization, but remember that by 2026 the human signature will carry increasing weight in the Experience balance.

- This property is one of the most powerful tools for YMYL sectors. If your author does not have a dominant online presence, but the content has been reviewed by a well-known medical, legal, or technical consultant, use it to state this. Including the reviewer’s name in the schema tells Google that the piece’s accuracy has been validated by a third-party professional, instantly boosting the page’s trustworthiness.

- Citing sources. Demonstrate that your work is built on a solid foundation. Don’t just link to random sources: cite scientific studies, laws, or institutional data within the text. This methodological rigor allows analysis systems to place your brand within a digital ecosystem of excellence. You can reinforce this signal by using the citation property in Schema markup to explicitly indicate primary sources, but remember: it is the presence of verifiable and authoritative references within the content that tips the scales of trust.
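The properties listed above can be combined in a single JSON-LD graph. Below is a minimal sketch for a hypothetical publisher at `https://www.example.com`; every name, address, award, and URL in it is invented for illustration. The property names themselves (`hasOccupation`, `honorificSuffix`, `affiliation`, `sameAs`, `address`, `contactPoint`, `foundingDate`, `founder`, `award`, `author`, `publisher`, `citation`, `mainEntity`, `reviewedBy`) are real schema.org terms. In schema.org, `reviewedBy` belongs to `WebPage`, so the reviewer is attached to the page that contains the article. Google does not use every one of these properties for rich results; the point is to make the relationships explicit.

```python
import json

SITE = "https://www.example.com"  # hypothetical publisher domain


def build_graph() -> dict:
    """Assemble Organization, author, reviewer, Article, and WebPage nodes linked by @id."""
    org_id = f"{SITE}/#organization"
    author_id = f"{SITE}/authors/jane-doe#person"
    reviewer_id = f"{SITE}/reviewers/john-roe#person"
    article_id = f"{SITE}/heart-health-guide#article"
    page_id = f"{SITE}/heart-health-guide"

    organization = {
        "@type": "Organization",
        "@id": org_id,
        "name": "Example Health Media",
        "url": SITE,
        "foundingDate": "2012",
        "founder": {"@id": author_id},  # here the founder is also the author (hypothetical)
        "address": {
            "@type": "PostalAddress",
            "streetAddress": "1 Example Street",
            "addressLocality": "Example City",
            "addressCountry": "IT",
        },
        "contactPoint": {"@type": "ContactPoint", "contactType": "customer support", "email": "support@example.com"},
        "award": "Example Industry Award 2023",
    }
    author = {
        "@type": "Person",
        "@id": author_id,
        "name": "Jane Doe",
        "honorificSuffix": "MD",
        "hasOccupation": {"@type": "Occupation", "name": "Cardiologist"},
        "affiliation": {"@id": org_id},
        "sameAs": ["https://www.linkedin.com/in/example-jane-doe"],
    }
    reviewer = {"@type": "Person", "@id": reviewer_id, "name": "John Roe", "honorificSuffix": "PhD"}
    article = {
        "@type": "Article",
        "@id": article_id,
        "headline": "Example heart health guide",
        "author": {"@id": author_id},   # links the article to the author's Person node
        "publisher": {"@id": org_id},
        "citation": [{"@type": "CreativeWork", "name": "Example primary study", "url": "https://example.org/study"}],
    }
    page = {
        "@type": "WebPage",
        "@id": page_id,
        "mainEntity": {"@id": article_id},
        "reviewedBy": {"@id": reviewer_id},  # third-party professional review
    }
    return {"@context": "https://schema.org", "@graph": [organization, author, reviewer, article, page]}


print(json.dumps(build_graph(), indent=2))
```

Referencing nodes by `@id` instead of repeating them keeps a single canonical description of each person and of the organization. This keeps the relationships between author, reviewer, and publisher unambiguous.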

Pay attention to data nesting to clearly describe the relationships between subjects. Clean, hierarchical code eliminates misunderstandings by crawlers and facilitates the extraction of verifiable facts, ensuring a competitive advantage in new conversational search interfaces.
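One practical way to keep nesting clean is to check that every `@id` reference in a graph points to a node that is actually defined there. A dangling reference leaves a relationship that the crawler cannot resolve. Below is a minimal sketch of such a check, assuming a flat JSON-LD `@graph` whose cross-references are bare `{"@id": ...}` objects. The function names are hypothetical, and the check does not replace Google's Rich Results Test or the Schema Markup Validator.

```python
def defined_ids(graph: dict) -> set[str]:
    """@id values of the top-level nodes in the graph."""
    return {node["@id"] for node in graph["@graph"] if "@id" in node}


def referenced_ids(value) -> set[str]:
    """Recursively collect @id values used as bare references ({"@id": ...} and nothing else)."""
    found: set[str] = set()
    if isinstance(value, dict):
        if set(value) == {"@id"}:
            found.add(value["@id"])
        for child in value.values():
            found |= referenced_ids(child)
    elif isinstance(value, list):
        for child in value:
            found |= referenced_ids(child)
    return found


def dangling_references(graph: dict) -> set[str]:
    """References that no top-level node defines."""
    return referenced_ids(graph["@graph"]) - defined_ids(graph)


graph = {
    "@context": "https://schema.org",
    "@graph": [
        {"@type": "Organization", "@id": "#org", "name": "Example Media"},
        {"@type": "Article", "@id": "#article", "publisher": {"@id": "#org"}, "author": {"@id": "#jane"}},
    ],
}
print(dangling_references(graph))  # {'#jane'}: the author node was never defined
```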

Design content that stands up to Answer Engine queries

Appearing in a generative answer means the algorithm has bet on your truth; if you lack proof, you remain an orphaned piece of information destined for oblivion. Pursue brand citations and ensure thematic consistency so that your brand is associated with a specific, unambiguous scope of topics. If you have a rock-solid EEAT and send clear signals, you enter the AI Overview’s answer stream; otherwise, at best, you remain confined to the index of old blue links.

Don’t overlook basic actions that simplify communication with AI systems that perform RAG: artificial intelligence extracts information accurately when you present it in tables, lists, and direct comparisons.

A table that compares metrics or lists technical specifications provides solid evidence that reduces the risk of hallucination, making the data immediately extractable by answer engines. Design content that stands up to scrutiny by applying the principle of testability, or verifiability: every claim should be something a search system can check against real, verifiable knowledge.
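As an illustration of the tabular presentation the text recommends, here is a minimal sketch that renders comparable specifications as an HTML table with a caption and column headers. It assumes every row shares the same keys. The helper name `spec_table` and the sample data are hypothetical, and there is no guarantee that a given answer engine will extract this particular table.

```python
from html import escape


def spec_table(rows: list[dict[str, str]], caption: str) -> str:
    """Render uniform rows as a <table> with a <caption> and <th scope="col"> headers."""
    if not rows:
        return ""
    headers = list(rows[0])  # column order follows the first row's keys
    lines = ["<table>", f"  <caption>{escape(caption)}</caption>", "  <thead>", "    <tr>"]
    lines += [f'      <th scope="col">{escape(h)}</th>' for h in headers]
    lines += ["    </tr>", "  </thead>", "  <tbody>"]
    for row in rows:
        cells = "".join(f"<td>{escape(str(row.get(h, '')))}</td>" for h in headers)
        lines.append(f"    <tr>{cells}</tr>")
    lines += ["  </tbody>", "</table>"]
    return "\n".join(lines)


plans = [
    {"Plan": "Basic", "Storage (GB)": "50", "Support": "Email"},
    {"Plan": "Pro", "Storage (GB)": "500", "Support": "Email & phone"},
]
print(spec_table(plans, "Hypothetical plan comparison"))
```

Escaping every header and cell keeps the markup well-formed even when values contain characters such as `&`. Putting units in the header ("Storage (GB)") keeps each cell a bare, comparable value.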

In other words, include concrete evidence, proprietary data, and documented case studies in the text that act as witnesses in a reputational process.

- Source identifiability: the author is a traceable entity with a consistent reputation outside the site.

- Documentary support: statements are supported by references to reputable sources and links indicating thematic relevance.

- Absence of manipulation: the page is clearly designed to help the user, not geared exclusively toward aggressive monetization.

- Multi-channel consistency: the brand’s authority is confirmed on third-party platforms that validate its stated expertise.
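The four criteria above can double as a self-audit checklist. The sketch below is a hypothetical editorial tool, not a reconstruction of any search system. The `PageSignals` fields are assumptions about what you might collect for a page, and the monetization-density threshold is an arbitrary placeholder to tune for your sector.

```python
from dataclasses import dataclass, field


@dataclass
class PageSignals:
    author_profiles: list[str] = field(default_factory=list)  # external profiles (e.g. sameAs URLs)
    cited_sources: list[str] = field(default_factory=list)    # references cited in the text
    word_count: int = 0
    ad_units: int = 0
    affiliate_links: int = 0
    third_party_mentions: int = 0  # mentions on independent platforms


def audit(page: PageSignals, max_monetization_per_1000_words: float = 3.0) -> list[str]:
    """Return the checklist criteria the page fails; an empty list means none were flagged."""
    issues = []
    if not page.author_profiles:
        issues.append("source identifiability")
    if not page.cited_sources:
        issues.append("documentary support")
    monetization = page.ad_units + page.affiliate_links
    if monetization / max(page.word_count, 1) * 1000 > max_monetization_per_1000_words:
        issues.append("absence of manipulation")
    if page.third_party_mentions == 0:
        issues.append("multi-channel consistency")
    return issues


draft = PageSignals(word_count=1200, ad_units=4, affiliate_links=6)
print(audit(draft))
```

The draft fails all four checks: it has no author profiles, no cited sources, about 8.3 monetization elements per 1,000 words, and no third-party mentions.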

Citable content features documented originality that provides an informational delta compared to the basic summary produced by language models. If your page lacks this evidence, it remains classified as “orphan information”—a risk that re-ranking systems cannot afford to take.

Overcoming selection filters today requires transforming content creation into a practice of certifying reality, where every detail serves to demonstrate that what you claim is supported by real, verifiable knowledge.

External Reputation Governance

After consolidating the signals within your site, you need to turn to what is said about your brand across the rest of the Web. This is a logical step: search systems and response models cannot rely solely on what you claim about yourself, because authority emerges from a network of confirmations that develops beyond your direct control.

It is much more than just link building: it is the construction of the infrastructure of your algorithmic credibility. While you build the evidence on your site, across the rest of the web you must gather testimonials of your ability to occupy real conversational spaces. Every citation, mention, or reference to your brand in relevant contexts acts as a vote of confidence that search engines use to validate your position in the knowledge graph. Your mission is to transform the brand into a stable node in the digital neighborhood, ensuring that signals of reliability are consistent, persistent, and, above all, come from sources that themselves possess a high EEAT.

Digital PR as proof of algorithmic consensus

Mentions of your brand and your authors in industry publications, professional forums, and institutional databases constitute the ultimate proof of your thematic relevance. Manage your external presence through a targeted Digital PR strategy, ensuring that your brand name is systematically associated with the keywords that define your specific expertise. These co-occurrences allow algorithms to map your entity within a clear semantic framework: if the web consistently cites you as a reference in a given field, your authority becomes an established fact.

Don’t chase quantity, but rather relational density.

A single mention in an academic journal or an editorial in an authoritative outlet carries more weight than a thousand references on sites lacking a clear editorial identity. Work to become the natural reference point in your field, placing your signature where expert consensus validates the quality of the information.

The backlink profile as a graph of relationships between entities

Backlinks still represent the most powerful system for transmitting trust, provided you view them as connections between entities rather than mere traffic vectors. Every inbound link from an authoritative site transfers a measure of reliability that fortifies your ranking, especially for searches requiring a high degree of certainty.

Manage your backlink profile rigorously, reducing noise from anonymous domains that could dilute your perceived trustworthiness.

Your authority is reflected in the mirror of your digital neighbors: if you operate in sensitive sectors, for example, a link from a government portal, university, or trade association certifies your suitability to address complex topics. Interconnection with industry leaders allows you to be recognized as part of the global information elite.

The convergence of social media and search

Search has changed, and we can now speak of social search: Google constantly monitors (and integrates into its results) signals published on social networks to map a brand’s relevance and its ability to generate authentic interest.

Being mentioned, discussed, and searched for on social platforms provides the search engine with confirmation that your entity is active and influential. Master this integration by understanding that information search has shifted toward visual and immediate formats: if your brand is absent from the social contexts where users seek answers, your overall authority is weakened.

Consistency in the narrative between your website and social media fortifies your brand, making it a stable hub that search engines cannot ignore. The weight of experience, in particular, finds its greatest validation on platforms like Reddit and Quora, which have become primary clusters of trust because they host human, unfiltered discussions verified by the community. When your brand or your experts are cited as problem-solvers in these contexts, you receive a signal of authority that no artificial backlink can replicate.

Your goal here is to become part of the solution. Response systems draw heavily from these knowledge bases to extract opinions and verified facts: being present and validated in these discussions transforms your brand into a primary, citable source, granting you access to that digital community of excellence that defines industry leaders.

Brand Monitoring and Algorithmic Sentiment

Algorithmic reputation is human perception turned into trackable data. Evaluation systems continuously analyze the sentiment associated with your brand on independent platforms, review sites, and professional communities.

Multichannel reputation is also fueled by user feedback and by a presence on verified business listings such as Google Business Profile (formerly Google My Business). External reviews and discussions on independent platforms form the social proof that validates your reliability in the eyes of machines.

Whether you run a local business or an e-commerce site, the completeness of information on these platforms and active comment management become signals of vitality and professionalism. Ignoring your external digital footprint means letting chance (or, worse, competitors!) define your brand’s authority. Instead, you must actively encourage the generation of positive signals off-site, ensuring that every trace left on the web helps fortify your reputational firewall.

Negative sentiment and a total absence of genuine feedback are both red flags that search engines interpret as a risk to the end user. You can manage this dynamic by encouraging authentic reviews and actively participating in contexts where your expertise can solve real problems. Trust is built through the consistency of your responses over time and across the digital space. A brand that users mention positively is one that search results prioritize, giving you a stability that no purely technical optimization could sustain on its own.

10 practical truths to debunk misconceptions about EEAT

The confusion surrounding this topic harms your strategy because it pushes you to invest in superficial optimizations that don’t tip the scales. You must learn to distinguish real algorithmic signals from “narrative SEO.”

To understand EEAT, you must accept that quality is not a subjective value, but a technical metric derived through thousands of small evaluation processes.

- EEAT is not a single algorithm

Stop looking for “the EEAT algorithm.” It doesn’t exist. EEAT is a label for a large collection of micro-signals that work in unison to assess your credibility. Many of these micro-algorithms look for the specific kinds of evidence the framework describes, and they operate on every single query. You control visibility only when you accept that authority is a property of your entire domain, not a feature you can toggle on and off.

- The myth of the internal score

Google does not assign your site a score from 1 to 100 for EEAT, and you will never find an official metric in Search Console that certifies your level of trustworthiness. Response systems do, however, operate on selection thresholds: if your entity does not exceed the minimum reliability threshold required for a given topic, you remain invisible.

- A prerequisite for existence, not a direct ranking factor

Treat authority as a quality indicator that influences rankings indirectly but comprehensively. If you don’t meet the trust threshold required for a topic, traditional ranking signals, such as keyword usage in the title tag, become irrelevant. In critical sectors, EEAT is your pass: if it’s missing, you don’t earn the right to compete for AI Overviews.

- The Universality of Requirements in the AI Era

Stop thinking that EEAT applies only to doctors or lawyers. With the explosion of synthetic content, every entity must demonstrate its own Experience. Even a hobby website must prove it has “firsthand experience” with what it describes to generate Information Gain. The expected standard depends on the risk to the user, but today, proof of lived experience is the universal requirement to stand out from the noise generated by machines. As explained by Lily Ray, one of the leading experts in this field, it’s helpful to include phrases like “During my test…”, “I tried this product…” or “When I arrived at the hotel…”. Language that reflects a lived experience conveys trust to both Google and readers. Artificial intelligence cannot have real-world experiences, so think about how to make your content visibly human and make it clear that you are a real person, basing your ideas on concrete experiences.

- Technical SEO as the Foundation of Trustworthiness

Optimizing the perception of expertise is useless if your site has crawling issues or insecure infrastructure. Trustworthiness is holistic: your byline may belong to a world-renowned expert, but if the page takes ten seconds to load, your expertise remains invisible. The machine doesn’t trust those who don’t guarantee a smooth user experience and secure data transmission.

- The Author Box is not a miracle solution

Including a biography is merely decorative if the author is not a recognized entity in the Knowledge Graph. Google looks for confirmation beyond your own boundaries. You must work on the author’s identity as an autonomous entity: their byline must carry real weight in communities, professional databases, and third-party citations to be considered a signal of authenticity.

- Core Updates Don’t Just Affect Authority

Stop attributing every traffic drop after an update solely to EEAT issues. Google also evaluates architecture, ad density, and the actual utility of the content. Analyze drops with technical rigor, knowing that authority is just one of the components determining your stability in the index.

- The Mention Is the New Backlink

In the era of generative engine optimization (GEO), pursue brand mentions rather than simple links. The mention of your brand in authoritative contexts, even without a hyperlink, is processed as a signal of authority. Your mission is to transform the brand into a fundamental node of knowledge that AIs cannot afford to ignore.

- The end of speculative originality in YMYL sectors

In high-risk sectors, originality for its own sake is a danger. Your authority is measured by your consistency with the expert consensus. You must demonstrate that you belong to a cluster of reliable sources, backing every claim with documented evidence. User protection drives the algorithm: your creativity must yield to documented accuracy.

- The time factor and signal persistence

Trust isn’t optimized in a single work session; it’s built through the constant repetition of positive signals. If your brand suffers a ranking drop due to weak EEAT signals, recovery requires months of editorial and technical consistency. There are no shortcuts to rebuilding a reputational firewall once it’s been breached.

EEAT in YMYL sectors: managing algorithmic risk

The rigor with which Google applies the EEAT framework is not uniform but follows a logical, risk-based scale. The trust threshold required for a query related to “choosing a blood pressure medication” is radically different from that needed for a search on “how to clean sneakers.” In the first case, the algorithm demands documented evidence of expertise and an institutional reputation bordering on infallibility. In the second case, the direct experience of an enthusiast is sufficient to satisfy the search intent.

This is also a matter of logic: there are areas of knowledge where incorrect information does not merely result in a poor user experience, but in concrete physical, economic, or social harm. Fields such as medicine, finance, legal advice, current news, and safety therefore fall under what Google calls “Your Money or Your Life” (YMYL) topics, the cornerstone of its information-safety doctrine.

Here, EEAT acts as a technical access requirement and a market regulator that quickly downgrades dubious sources and protects the reader’s safety. If your page lacks a verifiable professional signature or documentary evidence, algorithmic safety protocols will downgrade it and strip its visibility. AI behaves similarly, as we saw in our analysis of the healthcare sector in AI Overview: answer engines tend to prefer linking to institutional, highly reliable sources rather than risking an inaccurate citation from a domain that lacks proven stability.

Embrace the burden of certified editorial responsibility, knowing that for search systems reliability is a non-negotiable prerequisite, and work to position your brand within a cluster of secure sources, reducing speculative originality in favor of documented accuracy.

Brand Governance in a YMYL Context

User protection guides every algorithmic decision. Google selects the source that offers the greatest guarantees of safety and demotes content that could compromise the financial stability or well-being of readers or, as the latest guideline updates specify, of entire communities and society as a whole.

Today there is an additional layer, because the consolidation of generative search has raised the access threshold even higher. Your visibility strategy must therefore shift from climbing the SERPs to building an authorial signature that acts as a shield against information hallucination.

The way you demonstrate EEAT varies depending on your site’s industry, and you must adapt the signals of reliability to your specific context. Expertise in the culinary field is based on different factors than those required for a court reporter or a financial advisor. Trust in a review site is strengthened through transparency regarding testing methodologies, while a medical column must be managed by an author with verified scientific expertise.

If you cover medical, legal, or tax topics, as mentioned, you are operating in a minefield where the accuracy of information is the only safeguard for your visibility. For high-impact topics, in particular, validation occurs through expert consensus: quality raters (and, by extension, Google) analyze whether the statements on the page align with established scientific or professional truths. If the content deviates from the general consensus without providing extraordinary evidence, it is classified as unreliable. Algorithmic trust thus becomes a metric of compliance: the site must demonstrate that it belongs to a set of safe sources, prioritizing documented accuracy over speculative originality.

Generally speaking, your goal must therefore be to explicitly demonstrate to readers and algorithms the expertise and reliability of the author, ensuring that every claim is supported by evidence or by expert consensus. Designing authority here means mapping the user’s implicit questions and answering them with a level of technical detail that goes beyond a generic summary. Every statement must be “verifiable,” meaning supported by a structure of data and references that allows the machine to validate the information in real time. User protection guides every choice the algorithm makes, and it should therefore guide yours as well.

Be careful, though: as we’ve seen, the validation of a YMYL entity no longer takes place exclusively within your own domain, because Google and language models use external reputation as a truth anchor to verify brand-related statements. You therefore cannot afford to underestimate the importance of monitoring every external signal: together, these signals form a network of evidence that confirms or refutes the authority declared on-page.

Practice brand governance by monitoring independent reviews on third-party platforms, discussions on niche forums like Reddit or Quora, and the author’s presence in accredited professional databases. Coverage in authoritative outlets and citations in industry publications act as an algorithmic validation system, even in the absence of a direct hyperlink.

Multichannel reputation is the documentary evidence that allows the brand to pass the YMYL filter and be selected as a primary source by generative search systems, which view external consensus as a safeguard against information hallucination.

Use SEOZoom for data-driven validation of EEAT

If EEAT is a reflection of your reputation, you must use data to map your semantic distance from industry leaders and identify the gaps in expertise that undermine your reliability. In SEOZoom, authority is a trackable metric that allows you to understand whether your brand is gaining the stability needed to be cited by response engines or if it is still speaking into the void.

Use the platform to fortify your technical reputation for both Google and AI engines, verifying how algorithms and generative engines frame your project. Shift from emotional brand management to evidence-based governance: by measuring your entity’s strength and its citability, you decide where to focus your efforts to scale the barriers to generative visibility.

- Zoom Authority as a measure of reliability

Zoom Authority (ZA) is the metric that best approximates the trust Google places in your domain. Unlike metrics based exclusively on links, ZA analyzes the quality of actual organic rankings through factors such as ranking stability (Zoom Stability) and, above all, the trust Google demonstrates toward the site by rewarding it in the rankings (Zoom Trust). A site with rock-solid EEAT tends to show a strong and growing ZA, because the search engine consistently ranks its pages. Monitor this value during Core Updates: if your ZA holds steady or grows while the industry declines, it means your entity has been validated as a reliable source. Conversely, sharp fluctuations indicate trust or quality issues that you must resolve as a priority to protect your visibility.

- Topical Zoom Authority for Vertical Expertise

Demonstrate your Expertise through Topical Zoom Authority (TZA), the metric that indicates how relevant your site is within a specific product category or topic. Guide your vertical strategy by monitoring TZA in clusters that are strategic for your business: being a category leader in this metric means Google recognizes you as an undisputed thematic authority. If your TZA is lower than that of your direct competitors, you have a knowledge gap to fill by producing richer, more specialized content capable of covering the entire semantic scope of the topic.

- The AI Editorial Assistant for Content Verifiability

Creating content that withstands the scrutiny of re-ranking systems requires pinpoint precision. Use the Editorial Assistant to make sure your text covers what search engines expect for the query: it analyzes the top 20 results already ranking in the SERPs and gives you an actionable brief based on real data. This way, you optimize for both readers and Google: you understand the main topics, identify the concepts related to search intent and the technical details needed to solidify your expertise, and cover every nuance of the user’s query, leaving no information gaps for competitors to fill.

With AI Engine, which is integrated into the production phase, you can check in real time whether you are also writing for AI, verifying whether your text satisfies the identified buyer personas and “appeals” to generative systems. The predictive analysis engine, which simulates the judgment of systems like Gemini or ChatGPT, helps you increase the likelihood that your content will be chosen as an authoritative source. Content creation thus stops being merely a writing exercise and becomes a process of data validation, so that every page is ready to rank on Google, in AI Overview, and in AI responses.

- Digital Neighborhood Validation and SEO for AI

Authority is also measured by comparison within your digital neighborhood. Use the Competitor Analysis tools to map who Google considers your peers in the SERPs. If your neighbors are institutions, historic brands, or authoritative publications, your entity has gained implicit validation; where there is a gap in authority metrics, use the data as a benchmark to see where your network of supporting evidence is still too weak.

Through the SEO for AI section, you can explore the new frontier of visibility: with AEO Audit and GEO Audit, you can directly analyze your brand’s reputation as perceived by artificial intelligence. With GEO Audit, you query the historical memory of LLMs to verify what they have learned about your site (and what “opinion” they hold of it); with AEO Audit, you analyze the brand as a whole as it appears in live searches. Together, these two tools allow you to understand whether you are recognized as a trustworthy entity or if the machine perceives you with errors or hallucinations.

Compliance Protocol: Secure Your Authority in Seven Steps

You can only turn the EEAT theory into a competitive advantage when you stop viewing it as an editorial goal and start managing it as a technical compliance protocol. Your mission is to eliminate any ambiguity regarding your identity and the unique value you offer compared to a summary generated by artificial intelligence. Authority requires constant monitoring: every touchpoint between your brand and the web must emit a signal of verifiable truth.

Apply this strategic checklist to assess the status of your digital presence. If even one of these elements is missing, your trust perimeter has a gap that leaves your entity exposed to the doubts of evaluation systems.

- On-page entity validation

Your About Us page must stop being generic marketing text and become a document of factual validation. Include physical addresses, tax information, real photos of the team, and an editorial mission statement that clearly defines your responsibility to the user. Prove your operational existence by eliminating the anonymity that search engines interpret as a signal of potential unreliability.

- Structured Data Infrastructure

Use Schema.org markup to make your authority understandable to machines. Implement Person and Organization markup by linking your authors to authoritative external sources (LinkedIn, Wikipedia, professional registries) via the sameAs property. This data web allows the search engine to close the identity loop: it knows who you are, understands your professional background, and recognizes you as the content owner.
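As an illustration of the sameAs linking described above, here is a minimal Python sketch that emits schema.org JSON-LD for an author (Person) employed by a publisher (Organization), each pointing to external profiles via sameAs. It assumes the markup is delivered as a JSON-LD script tag; the helper name build_author_jsonld and every name and URL in it are hypothetical placeholders, and adding the markup does not by itself guarantee how search or answer engines will use it.

```python
import json


def build_author_jsonld(name, job_title, author_profiles, org_name, org_url, org_profiles):
    """Build a schema.org Person node whose employer is an Organization node.

    Both nodes use sameAs to list external pages describing the same entity,
    linking the on-site identity to off-site profiles.
    (Hypothetical helper, for illustration only.)
    """
    organization = {
        "@type": "Organization",
        "name": org_name,
        "url": org_url,
        "sameAs": list(org_profiles),
    }
    person = {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "worksFor": organization,
        "sameAs": list(author_profiles),
    }
    return json.dumps(person, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    # All names and URLs below are hypothetical placeholders.
    markup = build_author_jsonld(
        name="Jane Doe",
        job_title="Registered Dietitian",
        author_profiles=[
            "https://www.linkedin.com/in/jane-doe-example",
            "https://registry.example.org/members/12345",
        ],
        org_name="Example Health Media",
        org_url="https://www.example.com",
        org_profiles=["https://en.wikipedia.org/wiki/Example_Health_Media"],
    )
    # Wrap the JSON-LD in a script tag so it can be embedded in the page.
    print('<script type="application/ld+json">')
    print(markup)
    print("</script>")
```

Nesting the Organization inside worksFor keeps the author and the publisher in a single graph, so both identities, and their external confirmations, are declared together on the page.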

- Verification of Information Gain

Every new piece of content must add original data, graphics, or perspectives not present in the training datasets of language models. If your article merely summarizes the top results of the SERP, you provide no reason for response systems to cite you. You create value when you include previously unpublished details derived from real-world experience, transforming writing into a practice of data certification.

- Verification of Accountability in YMYL Sectors

For topics related to health, safety, or finance, explicitly state who wrote the piece and who reviewed it from a scientific or technical perspective. The presence of a byline with certified and verifiable credentials is the only ticket to visibility in these fields. Include explicit references to the authors’ professional titles to reinforce the page’s trustworthiness.
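The author-plus-reviewer accountability described above can also be declared in markup. The following minimal Python sketch uses the schema.org WebPage properties reviewedBy and lastReviewed alongside author; the helper name build_reviewed_page_jsonld and the sample page, author, and reviewer are hypothetical, and the markup only declares the review, it does not replace visible credentials on the page.

```python
import json
from datetime import date


def build_reviewed_page_jsonld(title, author_name, reviewer_name, reviewer_title, last_reviewed):
    """Describe a YMYL page with its author, its expert reviewer, and the review date.

    (Hypothetical helper, for illustration only.)
    """
    if not isinstance(last_reviewed, date):
        raise TypeError("last_reviewed must be a datetime.date")
    page = {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": title,
        "author": {"@type": "Person", "name": author_name},
        # reviewedBy and lastReviewed are schema.org WebPage properties
        "reviewedBy": {
            "@type": "Person",
            "name": reviewer_name,
            "jobTitle": reviewer_title,
        },
        "lastReviewed": last_reviewed.isoformat(),
    }
    return json.dumps(page, indent=2)


if __name__ == "__main__":
    # Hypothetical page, author, and reviewer.
    print(build_reviewed_page_jsonld(
        title="How to read a blood pressure chart",
        author_name="John Smith",
        reviewer_name="Dr. Maria Rossi",
        reviewer_title="Cardiologist",
        last_reviewed=date(2024, 5, 1),
    ))
```

Rejecting anything that is not a date object keeps malformed review dates out of the markup instead of publishing them silently.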

- Editorial transparency and factual freshness

Explicitly state the date of the last update and cite the primary sources used to validate your claims. Consistency of data over time is a sign of accuracy: updating obsolete content signals to search engines that your resource is actively maintained and reflects the current consensus of the industry. Cite external sources, indicating their specific authority, to reinforce your methodological rigor.
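Update dates and primary sources can likewise be made machine-readable. This minimal Python sketch builds schema.org Article JSON-LD with datePublished, dateModified, and a citation list of CreativeWork entries, and refuses a modification date earlier than the publication date. The helper name build_article_jsonld and the sample headline and source URL are hypothetical; the visible "last updated" date and source list on the page should match what the markup states.

```python
import json
from datetime import date


def build_article_jsonld(headline, date_published, date_modified, sources):
    """Describe an article with explicit publication/update dates and cited sources.

    sources is a list of (name, url) pairs for the primary references used.
    (Hypothetical helper, for illustration only.)
    """
    if date_modified < date_published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    article = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published.isoformat(),
        "dateModified": date_modified.isoformat(),
        # citation is a CreativeWork property that can reference other works
        "citation": [
            {"@type": "CreativeWork", "name": name, "url": url}
            for name, url in sources
        ],
    }
    return json.dumps(article, indent=2)


if __name__ == "__main__":
    # Hypothetical article and source.
    print(build_article_jsonld(
        headline="Choosing a savings account in 2024",
        date_published=date(2023, 1, 10),
        date_modified=date(2024, 3, 2),
        sources=[("Central bank interest rate notice", "https://source.example.org/rates")],
    ))
```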

- Off-page recognition and consensus

Monitor how often your brand is mentioned in authoritative contexts or industry discussions without your having solicited each mention. Multichannel reputation on platforms like Reddit, Quora, or vertical forums is the documentary evidence that allows the brand to pass the safety filters of AI systems. You are working to become a key knowledge hub that machines cannot ignore.

- Zoom Authority Monitoring

Use Zoom Authority and Topical Zoom Authority as core KPIs to assess the health of your algorithmic reputation. A solid ZA and a high industry-specific TZA indicate that Google recognizes your entity as a reliable and authoritative source. If these metrics remain stable after a system update, it means your trust core is intact and your EEAT governance is producing tangible results.