Google autocomplete, how the prediction system works

It is a mechanism we see in action every day, many times a day: we open the Google page and, as soon as we type the first letter in the search box, search suggestions appear in the form of autocomplete predictions. Danny Sullivan, Public Liaison for Search, has described this system and how it works, addressing the main questions and doubts that users have about it.

How Google autocomplete works

The post, published on Google’s blog, aims to clarify several aspects of Autocomplete: how predictions are generated automatically from actual searches, how the feature helps users finish typing a query they already had in mind, and why not all predictions are useful and what Google does in such cases.

First of all, Sullivan briefly describes the system: “You come to Google with an idea of what you would like to look for; as soon as you start typing, predictions are displayed in the search box to help you complete what you’re typing,” he writes. These predictions, “which save time, come from a feature called Autocomplete”.

Where predictions come from

Overall, Autocomplete is “a complex feature that saves time and does not just show the most common queries on a given topic”. This is why it differs from, and should not be compared with, Google Trends, which is instead a tool for anyone interested in researching the popularity of searches and search topics over time.

Autocomplete predictions still reflect searches that have been performed on Google, says the author. To determine which predictions to show, “our systems begin by examining common and trending queries that match what someone starts to enter in the search box”, he explains, offering some examples.

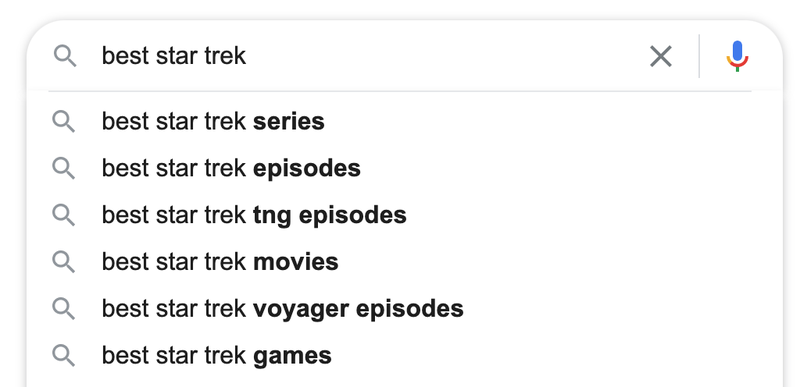

If the user were to type “best star trek …” (one of Sullivan’s great passions), among the common completions that will follow there could be “best star trek series” or “best star trek episodes”.
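To make this basic level concrete, here is a minimal sketch of prefix matching over past searches. It is not Google's implementation: the query log, the function name `predict` and the ranking by raw frequency are all assumptions made for illustration.

```python
from collections import Counter

# Hypothetical query log: each entry stands for one past search (invented data).
QUERY_LOG = [
    "best star trek series",
    "best star trek series",
    "best star trek series",
    "best star trek episodes",
    "best star trek episodes",
    "best star wars movie",
    "best pizza near me",
]


def predict(prefix, log=QUERY_LOG, limit=5):
    """Return past queries that start with `prefix`, most frequent first."""
    prefix = prefix.lower().strip()
    counts = Counter(q for q in log if q.startswith(prefix))
    # Sort by descending count, then alphabetically so ties are stable.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [query for query, _ in ranked[:limit]]


print(predict("best star trek"))
```

With this toy log, “best star trek series” ranks above “best star trek episodes” simply because it was searched more often; the real system weighs many more signals, as the next sections explain.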

Predictions tailored to the user

This, however, is only the most basic level: predictions depend on many other factors, not just on the most common queries overall.

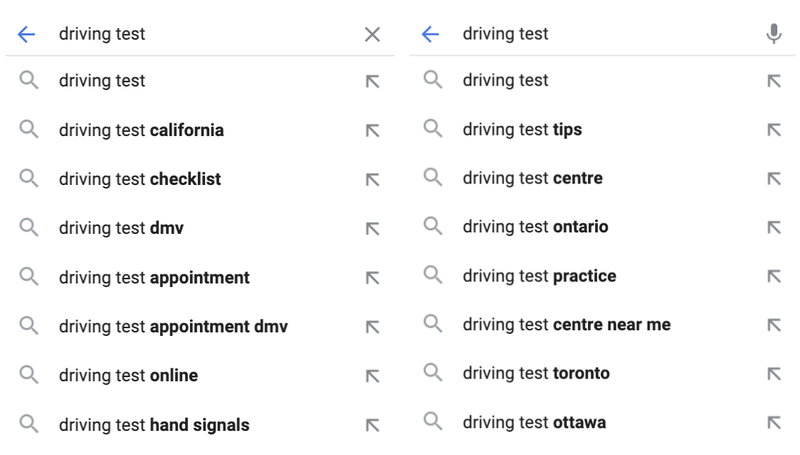

Google also considers factors such as the language of the searcher or the location they are searching from, because these make predictions “much more relevant”. The example image shows the difference between the predictions generated for a driving-test search in the US state of California and in the Canadian province of Ontario.

The suggested queries differ in the nearby physical places they mention, in the most frequent expressions and even in spelling: for instance, the Canadian version proposes the term “centre”, which is correct in Canadian English, instead of “center”, which is customary in American spelling.
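One simple way to picture this is to keep separate query counts per region and look up the table that matches the searcher. The sketch below assumes exactly that; the region codes, the counts and the function `predict_for_region` are hypothetical and only illustrate the idea.

```python
# Hypothetical per-region query counts (invented numbers).
REGIONAL_COUNTS = {
    "US-CA": {
        "driving test california": 90,
        "driving test center near me": 40,
    },
    "CA-ON": {
        "driving test ontario": 80,
        "driving test centre near me": 35,
    },
}


def predict_for_region(prefix, region, limit=3):
    """Rank prefix matches using only the counts recorded for `region`."""
    counts = REGIONAL_COUNTS.get(region, {})
    prefix = prefix.lower()
    matches = [(q, n) for q, n in counts.items() if q.startswith(prefix)]
    # Most searched first; alphabetical order breaks ties.
    matches.sort(key=lambda item: (-item[1], item[0]))
    return [q for q, _ in matches[:limit]]


print(predict_for_region("driving test", "US-CA"))
print(predict_for_region("driving test", "CA-ON"))
```

Because each region has its own log, the spelling difference between “center” and “centre” falls out of the data itself rather than from any special rule.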

Suggestions for long queries

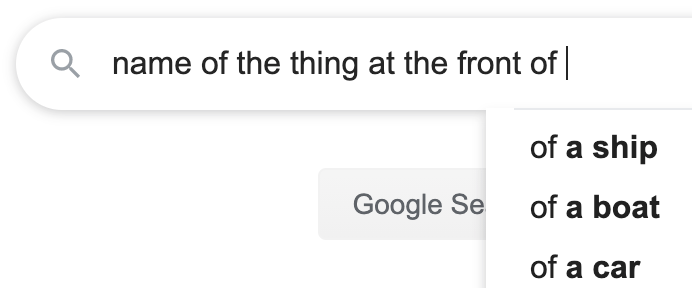

In order to provide better predictions for long queries, Google systems can “automatically switch from predicting an entire search to parts of a search”.

For instance, there might not be many queries for “the name of the thing at the front” of any particular object, but there are many recorded queries for “the front of a ship”, “the front of a boat” or “the front of a car”, and so Google is able to offer these predictions for the end of what someone is typing.
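The switch from whole-query to partial prediction can be sketched as a fallback: if nothing matches the full text, drop words from the front until the remaining tail matches known queries. This is only one plausible reading of the description; the counts and the function `predict_long` are assumptions.

```python
# Hypothetical counts of shorter, frequently searched queries (invented data).
KNOWN_QUERIES = {
    "the front of a ship": 50,
    "the front of a boat": 30,
    "the front of a car": 20,
}


def predict_long(typed, counts=KNOWN_QUERIES, limit=3):
    """Predict the end of a long query by matching only its trailing words."""
    words = typed.lower().split()
    for start in range(len(words)):
        head = " ".join(words[:start])   # part kept exactly as typed
        tail = " ".join(words[start:])   # part we try to complete
        matches = [q for q in counts if q.startswith(tail)]
        if matches:
            matches.sort(key=lambda q: (-counts[q], q))
            # Re-attach the untouched head in front of each completion.
            return [(head + " " + q).strip() for q in matches[:limit]]
    return []


for suggestion in predict_long("the name of the thing at the front"):
    print(suggestion)
```

Here the full text has no match, but the tail “the front” does, so the suggestions complete only that last part. A real system would presumably require a minimum tail length to avoid matching on a single common word.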

Freshness in Google Autocomplete

As in Search (see the case of the orange sky, which we also covered on our blog), Google takes freshness into account when showing predictions too. If automated systems detect “that there is an increasing interest in a topic, they may show a trend prediction even though it is generally not the most common of all the related predictions we are aware of”, Sullivan explains.

For example, he continues, “the search for a basketball team is probably more common than the individual games; however, if that team has just won a big match against a rival, timely predictions about that game could be more useful for those looking for relevant information at that time”.
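A toy scoring function shows how a rising query can overtake a more common one. The formula, the `boost` weight and the team names below are all invented for illustration; Google does not publish how it measures rising interest.

```python
def trending_score(total, recent, previous, boost=5.0):
    """Blend overall popularity with recent growth (hypothetical formula)."""
    growth = max(recent - previous, 0)  # only rising interest is rewarded
    return total + boost * growth


def rank_with_freshness(stats):
    """`stats` maps query -> (total count, count this period, count last period)."""
    return sorted(stats, key=lambda q: (-trending_score(*stats[q]), q))


# Invented numbers: the team query is more common overall,
# but searches for the game spiked right after the match.
stats = {
    "city hawks": (1000, 100, 100),
    "city hawks vs river kings": (300, 250, 10),
}
print(rank_with_freshness(stats))
```

The team query keeps its score of 1000, while the game query rises to 300 + 5 × 240 = 1500 and is shown first for as long as the spike lasts.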

Other factors influencing autocomplete

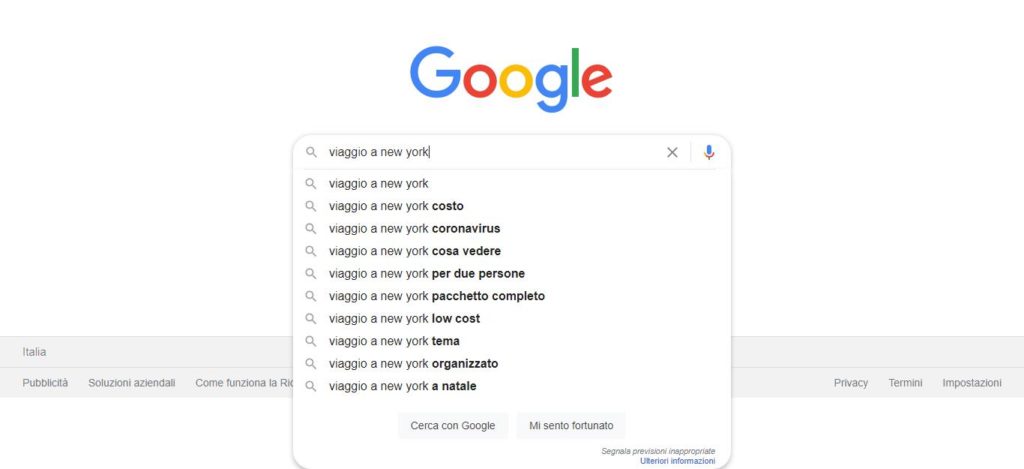

Again, predictions also vary depending on the specific topic someone is looking for, as “people, places and things all have different attributes that people are interested in”.

For instance, someone looking for “trip to New York” might see a prediction of “trip to New York for Christmas”, since this is (usually) a popular time to visit that city (as you can also see in our example image). By contrast, “trip to San Francisco” could show a prediction of “trip to San Francisco and Yosemite”.

Although these two topics seem similar or fall into similar categories, we will not see “always the same predictions if we try to compare them”, since “predictions will reflect unique queries pertinent to a particular topic”.

Predictions we will probably never see

Ultimately, then, predictions “are meant to be useful ways to complete faster something you were going to type”, but they are not perfect, and “unexpected or shocking queries” may appear; moreover, “it is also possible that people take predictions as statements of facts or opinions”, and Google is aware “that some queries are less likely to lead to reliable content”.

Google deals with these potential problems in two ways. First, it uses “systems designed to prevent the display of potentially unhelpful predictions and predictions that violate the rules”. Second, “if our automated systems don’t detect predictions that violate our policies, we have enforcement teams that remove predictions”.

Content removed by Autocomplete

Specifically, Google’s systems “are designed to recognize terms and phrases that could be violent, sexually explicit, hateful, disparaging or dangerous”: when they recognize “that such content might emerge in a particular prediction, our systems prevent its display”.

Obviously people can still search for such topics using those terms, and nothing prevents that, but Google wants to avoid “unintentionally shocking or surprising people with predictions that they could not have expected”.

Thanks to automated systems, Google can also “recognize if it is unlikely that a prediction returns very reliable content”. For example, “after an important news event, there may be any number of unconfirmed rumors or pieces of information spreading”, and Google wants to prevent people from thinking “that Autocomplete is somehow confirming such rumors”.

In such cases, “our systems identify whether there is likely to be reliable content on a particular topic for a particular search; if that probability is low, systems may automatically prevent the display of a prediction”. But, again, this does not prevent anyone from completing a search on their own, if they so wish.
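The two automated checks described in this section can be pictured as a filter applied to candidate predictions before they are shown. The sketch below is an assumption-laden illustration: the placeholder term list, the per-query reliability scores and the 0.5 threshold are all hypothetical, and the source does not say how Google computes either signal.

```python
# Placeholder list standing in for terms flagged by policy (hypothetical).
BLOCKED_TERMS = {"blockedterm"}


def is_allowed(query, reliability, threshold=0.5):
    """Return True only if the query passes both checks."""
    words = set(query.lower().split())
    if words & BLOCKED_TERMS:
        return False  # contains a flagged term
    # Queries with no reliability estimate default to 0.0, the cautious choice.
    return reliability.get(query, 0.0) >= threshold


def visible_predictions(candidates, reliability, threshold=0.5):
    """Keep the candidates that may be displayed, preserving their order."""
    return [q for q in candidates if is_allowed(q, reliability, threshold)]


# Invented reliability estimates in the range 0..1.
reliability = {"event official report": 0.9, "event rumor": 0.1}
candidates = [
    "event official report",
    "event rumor",
    "event blockedterm",
    "event unknown",
]
print(visible_predictions(candidates, reliability))
```

Note that the filter only affects what is suggested: nothing in it touches the search itself, which matches the point that anyone can still type the full query.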

Policies for autocomplete predictions

Generally, Google’s automated systems work well “but they can’t detect everything”: for this reason, Google has established “policies for Autocomplete”, which are publicly available online.

The goal is obviously to prevent the display of predictions that violate the rules, but if “such predictions get past our systems and we learn about them (for example through public reporting options), our enforcement teams work to examine and remove them, as appropriate”. In such cases, Google removes “the specific prediction in question and we often use pattern-matching and other methods to capture closely related variations”.
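As a rough idea of what “pattern-matching to capture closely related variations” could look like, the sketch below builds a regular expression from a reported prediction and removes candidates that differ only in case, punctuation, spacing or a trailing “s”. The notion of “closely related” used here and the function names are assumptions, not Google's actual method.

```python
import re


def variation_pattern(reported):
    """Compile a regex matching `reported` and a few simple variations of it."""
    words = re.findall(r"\w+", reported.lower())
    # Each word may carry an optional plural "s"; any run of
    # non-word characters (spaces, hyphens, ...) may separate words.
    body = r"\W+".join(re.escape(word) + "s?" for word in words)
    return re.compile(r"^\W*" + body + r"\W*$", re.IGNORECASE)


def remove_reported(predictions, reported):
    """Drop the reported prediction and its closely related variations."""
    pattern = variation_pattern(reported)
    return [p for p in predictions if not pattern.match(p)]


predictions = [
    "example bad phrase",
    "Example  bad-phrase",
    "example bad phrases",
    "example good phrase",
]
print(remove_reported(predictions, "example bad phrase"))
```

Only “example good phrase” survives in this toy run, since the other three entries are the reported prediction or trivial variants of it.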

As an example of how the system works in practice, “consider our policy on names in Autocomplete, introduced in 2016, designed to prevent the display of offensive, harmful or inappropriate queries in relation to named persons, so that people do not form an impression of others solely on the basis of predictions”. There are therefore systems that aim to prevent this kind of prediction from appearing for name queries, but if violations get through for some reason, “we remove them in line with our policies”.

It is still possible to search for whatever we want

Having completed the overview of the reasons that lead Google not to show some autocomplete suggestions, Sullivan reminds us, however, that “the predictions are not search results”. Sometimes, “people worried about predictions for a given query might suggest that we are preventing the display of actual search results”, but that is not the case: “Autocomplete policies only apply to predictions and do not apply to search results”.

The Googler admits that there is a possibility that “our protection systems could prevent the display of some useful predictions” and that the approach adopted is very strict and cautious, especially “when it comes to names”, so much so that it could also prevent the display of predictions that do not violate the rules. However, concludes Danny Sullivan, “we believe it is better to adopt this cautious approach because even if a prediction is not displayed, this does not affect the possibility for someone to finish typing a query on their own and find the search results”.

Cover image from: blog.google