Useful content begins where the keyword ends

For years, we’ve treated search as a question to be intercepted and a query to be monitored. Prompts have shattered this oversimplification. When a user queries a generative engine, they expect a complex solution; their request already encompasses comparison, doubt, context of use, a need for reassurance, selection criteria, and follow-up questions.

You can’t keep analyzing all of this solely through the lens of traditional keyword research. The AI breaks the original prompt down into dozens of parallel micro-searches to explore every nuance of the expressed need; if you ignore this step, you leave a crucial part of source selection uncovered.

AI Prompt Research addresses this gap. It takes a written question—just as a user would enter it into an AI engine—and breaks it down into its actual architecture: information domains, intents, decision points, follow-ups, and editorial directions. It makes this algorithmic “behind-the-scenes” process readable and allows you to oversee the entire range of queries that the AI triggers across the web to construct the final answer.

The user’s question is already a mini-information architecture

A traditional query tends to compress the user’s question into its shortest form—more schematic, more “indexable.” A prompt, on the other hand, expands it and contains more intentions than are visible on the surface. It incorporates context, motivation, scenario, expectation, perceived risk, level of expertise, and often a very clear practical goal.

When you ask Gemini or ChatGPT “Which city to visit in Italy in April?”, “Which cordless vacuum cleaner is best to buy for a home with pets?”, or “How to choose a sunscreen for children with sensitive skin?”, you don’t expect a single answer. You are opening up a structure. Within it coexist at least five levels: basic information, comparison of alternatives, verification of reliability, a nudge toward a decision, and needs that arise immediately after the choice.

Your prompt is already a mini-information architecture. The visible topic is just the top layer; beneath it lie practical concerns, evaluation criteria, fears of making mistakes, requests for confirmation, and follow-up issues. It is this layering that AI engines capture better than traditional search, because they process queries formulated in natural language that are already rich in context.

A keyword captures a topic; a prompt reconstructs a problem

The keyword remains useful for measuring, classifying, and guiding. The prompt adds depth because it returns the expanded form of the question. A user asking which vacuum cleaner to buy for a home with pets isn’t just looking for a product. They’re looking for a solution compatible with pet hair, maintenance, noise levels, accessories, battery life, value for money, and long-term management. The query may stop at the surface of the topic; the prompt lays out the criteria that inform the choice.

Here a clear editorial difference emerges. Focusing on the keyword means covering a topic; working on the prompt means entering the logic through which that topic is explored. This shift in perspective also significantly impacts the final content, as it shifts the focus from coverage to actual relevance.

The Fan-Out Paradox

However, there is a logical leap between the moment the user writes a request and the moment they receive a summarized response.

During this interval, systems like Gemini perform a phase of reasoning and Query Fan-out, breaking down the initial prompt into a range of parallel searches aimed at covering the various informational angles of the topic. AI does not search for an exact keyword, but breaks down the intent to find scientific evidence, opposing views, technical comparisons, and practical guides.

Visibility then takes on a different nature. If your content is optimized exclusively for the “parent query,” you risk being left out of an important part of the validation process. Today’s strategic work requires covering the informational branches that the algorithm generates in the background and deems necessary to build a robust response—and no, it’s not enough to include every variation of long-tail keywords you can find.

AI Prompt Research works where the query expands into a need

We designed AI Prompt Research to let you intervene in this space. It takes a prompt and breaks it down into a readable structure that makes explicit what is still implicit in the question. It brings to light which areas underpin the need, which questions articulate it, which criteria influence the decision, and which content can address the topic with greater precision.

The most interesting aspect lies in the hierarchy that emerges. A prompt can open up a much broader field of information than the initial query and, at the same time, precisely guide the type of content to be created. At that moment, the tool ceases to appear as a semantic expansion and becomes an editorial map of the need.

What the tool does and how it works

Prompt Research starts with a question formulated as a user would write it in an AI engine and transforms it into a readable structure. It brings order to a complex question and breaks it down into blocks that correspond to the actual stages of the need. That’s why the point isn’t “how many keywords it generates,” but how it organizes the question.

The first useful level is the breakdown by domains. The tool breaks down the prompt into its key domains—Informational, Evaluation and Comparison, Trust and Reliability, Transactional, and Follow-up—which immediately shows you that the initial question doesn’t exist in just one space. Even when the prompt seems simple, the need almost always extends in multiple directions. There is the informational part, the comparative part, the request for confirmation, the moment of choice, and then what comes after.

The interpretation of the topic changes accordingly. A question about a city to visit in April may seem purely informational but can instead open up topics like climate, costs, crowds, logistics, activities, safety, and handling unexpected events. A question about a product can lead to considerations of accessories, reliability, maintenance, reviews, and usage issues.
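The breakdown by domains described above can be pictured as a small data structure. What follows is a minimal sketch, not the tool's actual output format: the five domain names come from this article, while the `SubQuestion` type, the `group_by_domain` helper, and the sample questions are hypothetical and written by hand for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Domain(Enum):
    # The five domains named in the article.
    INFORMATIONAL = "Informational"
    EVALUATION = "Evaluation and Comparison"
    TRUST = "Trust and Reliability"
    TRANSACTIONAL = "Transactional"
    FOLLOW_UP = "Follow-up"


@dataclass(frozen=True)
class SubQuestion:
    """One question hidden inside the prompt (hypothetical type)."""
    text: str
    domain: Domain


def group_by_domain(questions):
    """Group sub-question texts under their domain, keeping input order."""
    grouped = defaultdict(list)
    for question in questions:
        grouped[question.domain].append(question.text)
    return dict(grouped)


# Hand-written sample for "Which city to visit in Italy in April?".
# These are illustrative assumptions, not results produced by the tool.
travel_breakdown = [
    SubQuestion("What is the weather like in Italian cities in April?", Domain.INFORMATIONAL),
    SubQuestion("Which activities are available in April?", Domain.INFORMATIONAL),
    SubQuestion("Which city is less crowded in April?", Domain.EVALUATION),
    SubQuestion("Is it safe to travel there in spring?", Domain.TRUST),
    SubQuestion("How much does a stay cost in April?", Domain.TRANSACTIONAL),
    SubQuestion("How do I handle unexpected events during the trip?", Domain.FOLLOW_UP),
]

grouped = group_by_domain(travel_breakdown)
for domain in Domain:
    print(f"{domain.value}: {len(grouped.get(domain, []))} question(s)")
```

Even in this toy version, a prompt that looks purely informational ends up with entries in every domain, which is the point the breakdown is meant to make visible.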

Questions reveal the hidden structure of the search

Within each domain, the tool collects the questions that truly underpin the need, making the user’s thought process visible. The initial query remains the entry point, but the real work begins when you see how it unfolds—when evaluation criteria, hesitations, points of friction, and objections that block the choice emerge, along with needs that surface immediately afterward.

This also yields a rather clear editorial lesson. Useful content doesn’t just cover the main topic: it holds up better when it anticipates the questions that follow the first one. Prompt Research brings this depth to the surface and helps you avoid two common mistakes: writing articles that are too broad or creating pages that are too sparse compared to the actual complexity of the search.

On a practical level, for each block you’ll also find specific content creation tips covering angle, focus, structure, elements to include, supporting content, comparisons, checklists, and useful formats.

This way, you move beyond the logic of brainstorming: you don’t find vague ideas, but an editorial priority. You know what content to create, how to make it more useful in relation to the expressed need, and which parts to develop to truly capture the search sequence.

The value lies not in volume, but in hierarchy

The starting point of every analysis in AI Prompt Research is the contextual summary of the user’s need.

Before the numbers, before the clusters, before the list of questions, there is an interpretation of the search intent that guides everything else. The number of keywords may be impressive, but on its own it says little. The strength of Prompt Research lies in the hierarchy it reveals. It allows you to distinguish the main topic from its key points, informational passages from comparative ones, requests for reassurance from purchase intentions, and immediate needs from those that come later.

In the case of uncertainty about sunscreen for children with sensitive skin, the tool immediately identifies a key factor: the priority isn’t cost, but dermatological safety, ingredient transparency, and the ability to choose without exposing the child to a perceived high risk.

This way, you start with the right tone, building your content on the pillars of trust that the query truly demands. When the need involves protection, reliability, certifications, and authoritative opinions, the response cannot be limited to a generic explanation or a list of products. The search intent summary clarifies what type of content the AI engine will tend to consider more robust as it reconstructs the reasoning: your text no longer stems from a simple expansion of the topic, but from the actual form of the query.

Reconstructing the path leading to the choice

With Prompt Research, you can more precisely uncover the hidden trajectory of a prompt. The user almost never enters an AI engine with a fixed, linear need. They start with a request, then go through comparison, verification, doubt, decision, and subsequent issues; their initial question may seem straightforward, but it already contains the subsequent steps within it.

The prompt contains the initial information, followed by selection criteria, a request for reassurance, a comparison of alternatives, a cost assessment, the fear of making a mistake, and finally the doubts that emerge after purchase or use. In practice, the search doesn’t stop the moment the user formulates the first sentence. It begins there.

If you continue to treat the question as a single block, you risk creating incomplete content. You cover the topic, but you leave out the steps that truly drive the decision.

A list of related keywords can broaden your coverage, but it’s not enough to help you understand where the question begins. The clusters in Prompt Research offer a different value because they organize the problem into functional blocks. The informational part doesn’t carry the same weight as the comparison. The request for trust doesn’t serve the same function as the transactional part. The Follow-up isn’t a decorative addition, but a signal that the search continues beyond the first choice.

The advantage lies precisely here. The tool forces you to establish a content hierarchy. It leads you to distinguish what introduces the topic from what resolves a doubt, what reassures from what guides the decision, what responds immediately from what prepares the next steps. It is a more useful step than a simple semantic expansion, because it shifts the focus from quantity to structure.

Designing Answer Engine-Proof Content

The report organizes search needs into logical categories that cover the entire journey of the user’s need: Informational, Evaluation and Comparison, Trust and Reliability, Transactional, and Follow-up. Each category clarifies not only what the AI will look for but also how to structure articles, guides, and supporting content to be most useful during the source selection phase.

This is how your page changes: you no longer write generic text that “covers the topic,” but rather build information nodes that address specific operational needs. In a search for a city to visit in Italy in April, for example, the AI doesn’t stop at monuments or the most obvious destinations. It also considers climate, luggage, planning around the time of year, local events, cost, and crowds. Similarly, in a purchase-related query, the system looks for benefits, limitations, practical conditions of use, comparisons, and reassurance.

The most useful content, therefore, is not the one that adds a lot of information all at once. It is the one that systematically distributes answers to the different stages of the query.

One of the most interesting aspects of the tool lies in its ability to bring out the conversational phrasing that makes up the breakdown of the need. When AI prepares a response on how to choose a product, it doesn’t just revolve around the main query. It extends to more specific, more technical, and more context-specific questions. It is from this network of sub-queries that an important part of the reasoning takes shape.

Translating these branches into sections, paragraphs, or dedicated content increases the likelihood of addressing the points where the machine seeks confirmation, clarification, or proof of reliability. It is not a matter of chasing isolated strings, but of building a coherent coverage of the formulations that articulate the need. Every answer given to a sub-query in the fan-out adds a possible access point for content selection.
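One way to make this coverage idea concrete is to check which sub-queries a draft's section headings already touch. The sketch below is a deliberately naive word-overlap heuristic, offered only as an illustration: it is not how any AI engine selects sources and not how the tool works. The function names, the stopword list, the `min_overlap` threshold, and the sample sub-queries and headings are all assumptions.

```python
import re

# Small illustrative stopword list (an assumption, not a standard list).
STOPWORDS = {"a", "an", "and", "for", "in", "of", "the", "to", "with"}


def content_words(text):
    """Lowercase the text and return its set of non-stopword tokens."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS


def uncovered(sub_queries, section_headings, min_overlap=2):
    """Return the sub-queries sharing fewer than `min_overlap` content
    words with every section heading, in their original order."""
    heading_words = [content_words(h) for h in section_headings]
    missing = []
    for query in sub_queries:
        query_words = content_words(query)
        if not any(len(query_words & words) >= min_overlap for words in heading_words):
            missing.append(query)
    return missing


# Hypothetical fan-out branches for the vacuum cleaner prompt.
sub_queries = [
    "cordless vacuum battery life",
    "cordless vacuum noise level",
    "essential accessories for pet hair",
    "filter maintenance and cleaning",
]

# Hypothetical headings of a draft article.
section_headings = [
    "Battery life and charging time",
    "Best accessories for pet hair",
]

for gap in uncovered(sub_queries, section_headings):
    print("No section yet for:", gap)
```

A word-overlap check will miss paraphrases and will sometimes match headings that do not really answer the question, so the result is best read as a prompt for editorial review rather than a verdict.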

The Follow-up brings out the “after,” which is often the most neglected part

In the Follow-up block, the prompt reveals its full depth. After the choice, the need continues: maintenance, practical issues, proper use, unexpected events, durability, support, adaptation. In many cases, this is precisely the material that distinguishes adequate content from truly useful content.

The point is simple. An AI engine doesn’t just intercept those who want an immediate answer. It also intercepts those who have already chosen and want to understand how to use, how to maintain, and how to avoid mistakes. Prompt Research makes this long tail of need visible and gives you a clear editorial advantage: it allows you to stay on top of the topic even after the decision-making moment—that is, at the point where much content stops.

Three prompts, three different ways to interpret a complex question

To highlight the value of Prompt Research, we tested the tool on three requests that were very different in theme, tone, and intent. This way, you can see how the nature of the need changes when the prompt stems from exploratory research, a purchasing choice, or a decision requiring trust and reassurance. This is the key point: the prompt doesn’t simply extend the query, but changes the type of content that should be designed.

Every question carries a different structure, and the tool gains value precisely because it manages to reflect it without flattening it.

The first prompt, “Which city to visit in Italy in April?”, opens an exploratory search that appears informational yet already involves planning, comparison, and decision-making. The second, “Which cordless vacuum cleaner is best to buy for a home with pets?”, addresses a high-intent query, where the usage context immediately dictates technical criteria, comparison, and practical evaluation. The third, “How to choose a sunscreen for children with sensitive skin?”, brings together information, protection, trust, and the fear of making a mistake. It is a more delicate structure, because the choice also depends on reassurance, safety, and authority.

- The travel prompt opens up informational content that is already close to a decision

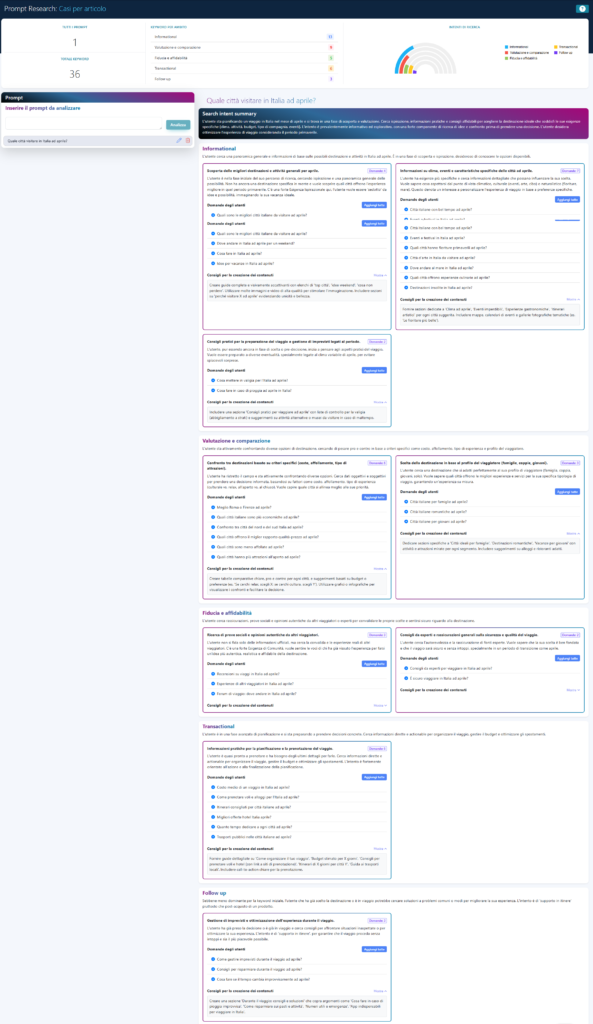

The travel prompt starts with a seemingly broad request: “Which city to visit in Italy in April?” The tool identifies 36 keywords and distributes them across a field dominated by informational content, but the most interesting aspect is something else. The question doesn’t remain within the realm of mere curiosity. It immediately branches out in multiple directions: weather, activities, city characteristics, cost of stay, crowds, practical advice, safety, and travel preparation.

Here, the value of clusters becomes clear. The city to visit isn’t just a destination to name; it becomes a matter of choice. Useful content, therefore, isn’t just a list of recommended cities. It requires comparisons, different scenarios, decision-making criteria, and practical guidance. The content creation tips in the expanded screen go precisely in this direction: they don’t just suggest a broad article, but push toward an organized guide, with sections that truly help you decide.

It’s a useful case because it challenges a widespread habit. An informational search, read only superficially, often leads to generic content. Prompt Research, on the other hand, clarifies that the informational part is already the first step in a decision.

- The vacuum cleaner illustrates how the context of use shapes the entire research

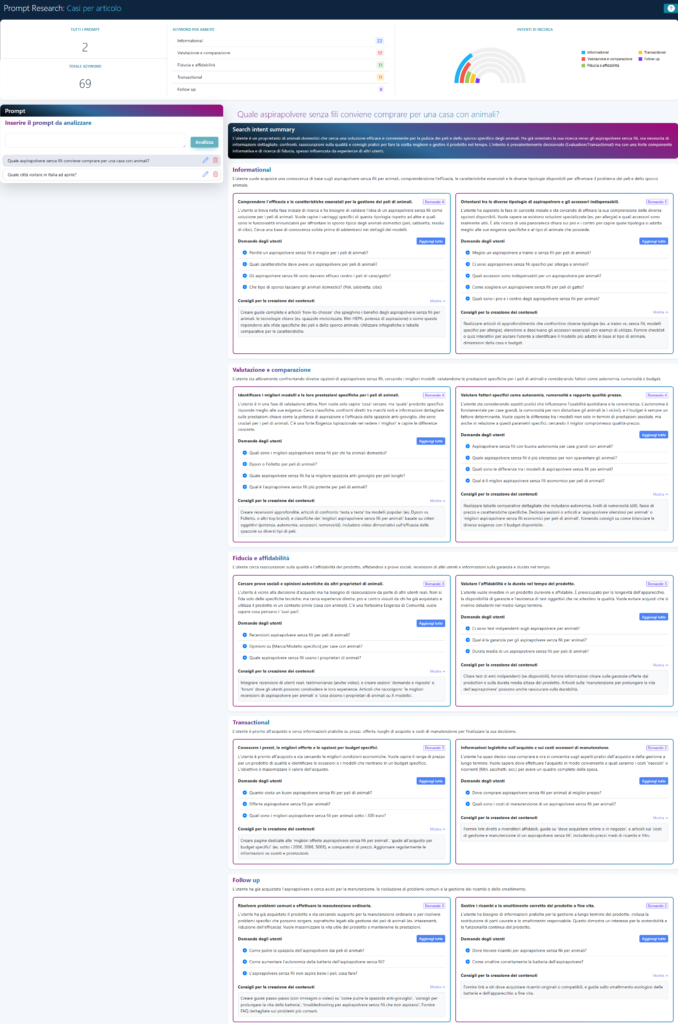

The prompt “Which cordless vacuum cleaner is best to buy for a home with pets?” is a very clear example of a high-intent question. In the project, the tool returns 69 keywords and a summary with a very strong evaluative component. The decisive detail, however, lies in the usage context already embedded in the question: “home with pets.”

This changes everything. The search doesn’t revolve solely around the product, but around the conditions in which that product must function. Themes such as pet hair, essential accessories, actual power, battery life, noise level, maintenance, reliability, costs, and ongoing management immediately emerge from the clusters. The need is already specific, and the comparison isn’t an afterthought. It’s written right into the prompt.

The case also clearly illustrates another point. A single prompt opens up a very wide range of sub-questions, forcing the content to address not only the core need but also its technical ramifications. In this sense, the report makes an important part of the fan-out clear: the initial question breaks down into a network of parallel checks that the machine can use to construct a more robust response.

The opposite risk would be to treat the search as a generic buying guide, whereas the content must be built around the criteria that matter in that specific context. The report encourages reading the question as a practical and comparative problem, not as an extended review. And the Follow-up section further extends the reasoning: battery, cleaning, disposal, lifespan, usage issues. The content, therefore, does not stop at “which one is worth buying,” but already addresses what comes next.

- Sunscreen makes the weight of trust and reassurance visible

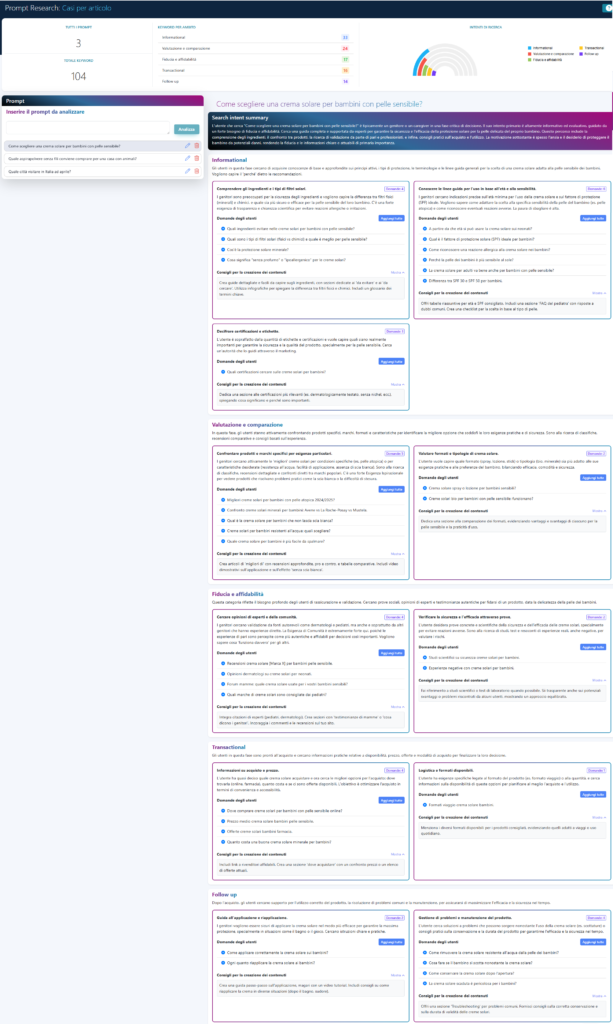

The third prompt, “How to choose a sunscreen for children with sensitive skin?”, best illustrates the tool’s depth in terms of editorial content. In this project, the tool generates 104 keywords and a highly detailed structure, in which informational content is combined with comparisons, reliability checks, selection guidance, and instructions for proper use.

Here, the need isn’t just about the product. It’s about the possibility of making a mistake. Ingredients, filters, SPF, labels, certifications, dermatologists, reviews, safety, longevity, application methods: the question combines information and fear. It’s a very useful example because it clarifies a point that remains implicit in much content. The user isn’t just looking for an explanation. They’re looking for reassurance, confirmation, and risk reduction.

The analysis brings this structure to the surface with great clarity. The clusters on Trust and reliability are not an afterthought. They are the core of the need. And the content recommendations clearly show that the most useful content isn’t the one that lists products neutrally, but the one that organizes safety criteria, explains how to truly evaluate a choice, and guides the user through a delicate decision.

The decomposition of the prompt into the Fan-out spectrum

Taken together, these three prompts clearly show that Prompt Research does not work by adding ideas. It works by decomposing the need.

In the case of travel, informational research immediately expands into planning and decision-making. In the case of the vacuum cleaner, the context of use immediately structures the comparison criteria. In the case of sunscreen, the need is divided between choice, protection, and reassurance. This is where the tool becomes truly interesting: it returns the editorial form of the question even before the content. And this is a significant difference, especially when you want to design content that more closely mirrors how users today query AI engines.

Managing the Query Fan-out requires a paradigm shift in editorial planning. Each branch identified by the tool represents a parallel search that the machine performs to reduce the uncertainty of its response. If the content does not meet these technical micro-requirements, the brand loses the chance to be selected as a primary source. Appearing in AI summaries therefore means addressing the informational nodes that truly underpin the machine’s reasoning, transforming the article into a structure of coherent, verifiable, and well-distributed answers.

When to Actually Use Prompt Research

The right time to use Prompt Research is before writing, at the point where you need to understand what you’re actually going to cover. A prompt may seem clear but, in reality, contain a need far more layered than the initial phrase suggests. Here, the tool becomes useful not because it multiplies ideas, but because it reduces ambiguity. It forces you to read the question in its full form and choose more precisely what to build.

- Before writing content that starts from a complex question

There are requests that seem well-defined but actually open up very different fields. Travel, shopping, health, comparison, practical use: in many cases, the difficulty lies not in finding the topic, but in understanding which part of the need truly deserves to be the focus of the content. Prompt Research distinguishes what introduces the question from what makes it useful, what guides the choice from what reassures, what answers immediately from what prepares the next steps.

This changes the quality of editorial work. Content built solely on intuition risks remaining broad or generic. Content designed based on the prompt’s structure starts with a better hierarchy.

- When you want to understand what the user is really asking

One of the tool’s strongest features lies in its ability to clarify the real need underlying the question. The user writes a sentence, but within that sentence, multiple layers are at play. A travel-related prompt already encompasses costs, weather, safety, activities, and preparation. A product-related prompt opens up performance, reliability, maintenance, objections, and future use. A prompt about child safety involves information, fear, safety checks, and the need for confirmation.

This depth, when properly interpreted, takes the content beyond the surface of the topic. It’s the point where you stop writing “about a topic” and start building a response that truly follows the logic of the question.

- When you want to design content that aligns more closely with AI engines

There’s another very practical use for the tool. AI engines work increasingly well with complex questions, context, follow-ups, and natural language requests. Prompt Research allows you to design content that aligns more closely with this form of search. Not because it mimics the machine, but because it takes seriously the way users formulate their needs today.

This step also impacts editorial strategy. The content that performs best in AI engines isn’t just the content with the most information. It’s the content that systematically addresses the core elements of the query: comparison, trust, choice, support, and in-depth analysis. The tool clarifies precisely this architecture.

- When content must also address the “after”

The Follow-up section clarifies a point that is often underestimated: the search doesn’t end with the main answer. After the choice come usage, maintenance, practical issues, long-term management, unforeseen events, and secondary doubts. This material, if considered in advance, changes the content you design.

It is one of the reasons why Prompt Research adds value even beyond the single page. It allows you to think in terms of an editorial ecosystem. Introductory content can introduce the topic, a comparison can guide the decision, and a practical guide can support subsequent use. The tool doesn’t just generate ideas. It maps out a path.
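The idea of an editorial ecosystem can be sketched as a simple plan that assigns one piece of content to each domain that actually contains questions. This is only an illustration under stated assumptions: the pairings of introductory content, comparison, and practical guide follow the paragraph above, while the formats for the Trust and Transactional domains, the `build_plan` helper, and the sample input are hypothetical.

```python
# Content format per domain, listed in the order of the user's journey.
# The Informational, Evaluation, and Follow-up pairings follow the article;
# the other two formats are assumptions added for illustration.
FORMAT_BY_DOMAIN = {
    "Informational": "introductory article",
    "Evaluation and Comparison": "comparison",
    "Trust and Reliability": "evidence and expert-opinion page",  # assumption
    "Transactional": "buying checklist",  # assumption
    "Follow-up": "practical guide",
}


def build_plan(questions_by_domain):
    """Return one planned piece per non-empty domain, in journey order."""
    plan = []
    for domain, content_format in FORMAT_BY_DOMAIN.items():
        questions = questions_by_domain.get(domain, [])
        if questions:
            plan.append(
                {
                    "domain": domain,
                    "format": content_format,
                    "questions": list(questions),
                }
            )
    return plan


# Hypothetical input for the vacuum cleaner prompt, reusing themes that the
# article lists for the comparison and Follow-up blocks.
vacuum_questions = {
    "Follow-up": ["battery", "cleaning", "disposal", "lifespan"],
    "Evaluation and Comparison": ["pet hair", "noise level", "battery life"],
}

for piece in build_plan(vacuum_questions):
    print(f"{piece['format']} ({piece['domain']}): {', '.join(piece['questions'])}")
```

Because the plan follows the order of `FORMAT_BY_DOMAIN` rather than the order of the input, the comparison piece is scheduled before the practical guide even though the Follow-up questions were supplied first.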

Prompt Research adds a new layer to editorial research

Editorial research has traditionally relied primarily on keywords, volumes, semantic clusters, and related queries. All of this remains useful. Prompt Research, however, adds a different layer: it takes the actual form of the question and transforms it into a map of the need. This is where the work truly changes. You no longer start solely from the topic to cover. You start from the way the user approaches it.

The keyword remains a metric. The prompt becomes a structure. That is the entire difference. A keyword captures a topic. A prompt reconstructs the problem the user is trying to solve, the steps involved, the criteria guiding the choice, and the questions that arise immediately afterward.

It is a shift in perspective within content strategy. The point is not just to cover the topic. What matters is understanding where the need truly arises, which clusters underpin it, and what sequence of content makes the most sense to build.

This is the distinction worth holding onto most firmly. Prompt Research is not a generator of ideas to be used haphazardly. It has value because it organizes. It separates the domains, distributes the intents, brings out the hierarchy of questions, and translates the analysis into an editorial direction. The result isn’t a longer list. It’s a more useful structure.

For this reason, in daily work, the tool becomes most valuable during phases where you need to reduce ambiguity, bring order to research, avoid overly broad content, and create materials that more closely match the actual form of the query.

Useful content starts with the form of the query

Ultimately, the point is simple. Users no longer always search in short-form queries. They explain, clarify, add context, and bring in doubts and criteria. AI engines read this expanded form with ever-greater precision. Our tool is valuable precisely because it takes that form seriously and translates it into an editorial structure.

Travel, the vacuum cleaner, and sunscreen seem like three unrelated topics. In reality, they all point to the same thing. Every prompt contains much more than its initial wording. Inside lies a need that expands, branches out, organizes itself into phases, and requires different content to be properly addressed.

Prompt Research works precisely there. It doesn’t simply add words to a topic. It reconstructs the path leading from the question to the choice, and from the content to its real utility. The tool’s most concrete advantage lies here: it shifts your focus from the keyword as a snapshot to the question as an architecture.